Battle of Tokens: For Five Consecutive Weeks, How Has China's AI Outperformed America's AI?

04/08 2026

©New Game

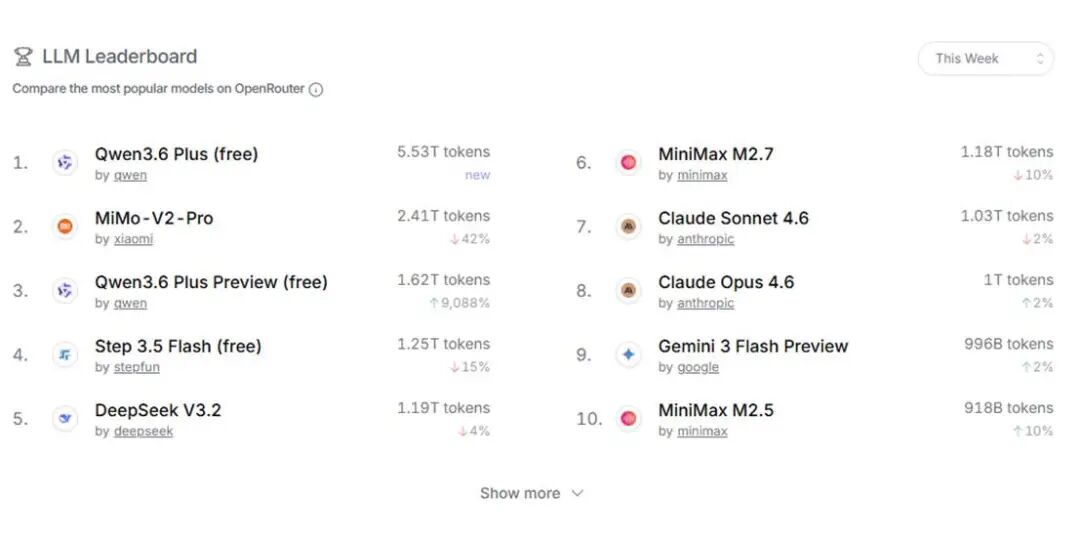

According to the latest data from OpenRouter, a globally known API platform for calling large models, China's AI large models recorded a weekly call volume of 12.96 trillion Tokens from March 30 to April 5, up 31.48% week-on-week and ahead of the United States for the fifth consecutive week. Over the same period, U.S. call volume was only 3.03 trillion Tokens, less than a quarter of China's.

What does this number signify? On average, nearly 2 trillion Tokens of 'intelligence' flow from Chinese models to global developers every day, equivalent to 'processing' several times the entire content of Wikipedia daily.
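The arithmetic behind these figures is easy to check. The sketch below uses only the numbers quoted from OpenRouter; the variable names are illustrative, and the previous week's volume is inferred on the assumption that the quoted growth rate compares against the prior week, not taken from the platform.

```python
# Figures quoted in the article (OpenRouter, March 30 to April 5).
china_weekly_tokens = 12.96e12  # 12.96 trillion Tokens
us_weekly_tokens = 3.03e12      # 3.03 trillion Tokens
quoted_growth = 0.3148          # 31.48% growth, assumed to be versus the prior week

# Average daily flow of Tokens from Chinese models.
daily_average = china_weekly_tokens / 7

# U.S. volume expressed as a fraction of China's.
us_share_of_china = us_weekly_tokens / china_weekly_tokens

# Prior-week volume implied by the growth rate (an inference, not a reported figure).
implied_previous_week = china_weekly_tokens / (1 + quoted_growth)

print(f"Daily average: {daily_average / 1e12:.2f} trillion Tokens")
print(f"U.S. volume as a share of China's: {us_share_of_china:.1%}")
print(f"Implied prior-week volume: {implied_previous_week / 1e12:.2f} trillion Tokens")
```

The daily average lands a little under 2 trillion Tokens, and the U.S. share comes out below 25%, which matches the "nearly 2 trillion" and "less than a quarter" descriptions.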

Image source: OpenRouter official website

The top six models in terms of call volume are all from China—Alibaba's Qwen (ranking first and third), Xiaomi's MiMo, StepFun, DeepSeek, and MiniMax. Interestingly, the 'main force' behind these numbers is not Chinese developers: U.S. users account for 47% on the OpenRouter platform, while Chinese users make up only 6%. Programmers in Silicon Valley, startups in Europe, and business owners in Southeast Asia are voting for Chinese AI with real money.

This 'comeback' has been astonishingly swift. Its underlying logic is not merely technological superiority or price war advantages. So, what is the real reason?

The Electricity Bill: A Trump Card

Let's start with a fact that keeps Silicon Valley awake at night: training and running AI models is becoming one of the most power-hungry endeavors of the 21st century.

In a March blog post, NVIDIA founder and CEO Jensen Huang broke down the AI industry into five layers: energy, chips, infrastructure, models, and applications. He emphasized, 'Energy is the first principle of AI and the fundamental constraint on how much intelligence the system can generate.'

In simpler terms: if you want AI to get smarter, first ask if the power grid can handle it.

And the U.S. power grid is struggling to keep up.

The exponential growth of AI computing power is severely straining the U.S. power system. In early 2026, Microsoft was forced to build its own gas turbines due to delays in grid access for its data center in West Virginia, while Google signed lucrative nuclear power contracts to 'feed' its AI.

However, not all costs can be absorbed by corporations. In several Midwestern U.S. states, this competition has begun driving up electricity prices for households, with some utility companies applying for new rate hikes. The cost of the AI race is shifting from tech giants' financial reports to American families' bills.

Meanwhile, China is an almost entirely different story.

According to data released by China's National Energy Administration in January 2026, China's total electricity consumption in 2025 reached approximately 10.37 trillion kWh, more than double that of the United States and higher than the combined total of the EU, Russia, India, and Japan.

Behind this figure lies the world's largest power infrastructure and competitive electricity prices. In western China, the cost of green electricity (wind and solar) can be as low as around 0.2 yuan per kWh, with overall industrial electricity costs lower than in most regions of Europe and the United States.

More crucial than electricity prices themselves is the structural advantage brought by China's 'East Data, West Computing' initiative. Data centers are built directly in western regions rich in green energy, so cheap clean power is converted into computing power on-site and then delivered over fiber-optic networks nationwide and even globally.

This model achieves a commercial closed loop where 'electricity stays within borders, but value crosses them.' The wind in the west lights up screens in the east and supports AI applications for developers worldwide.

This 'electricity cost advantage' is directly reflected in API pricing.

Take the OpenRouter platform as an example: the input price for the U.S. model Claude Opus 4.6 is $5 per million Tokens, with output prices as high as $25 per million Tokens. In contrast, China's MiniMax M2.5 costs just $0.3 per million Tokens for input and less than $2.5 per million Tokens for output.

For coding tasks, using a Chinese model costs only about one-tenth as much as its U.S. competitor.
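To see how the per-Token prices translate into a bill, here is a minimal sketch. The prices are the ones quoted above for OpenRouter, with MiniMax M2.5 output taken at its $2.5 upper bound; the `Pricing` class, the `bill` function, and the workload of 100 million input Tokens and 20 million output Tokens are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Per-million-Token API prices in U.S. dollars."""
    input_per_million: float
    output_per_million: float


# Prices as quoted in the article for the OpenRouter platform.
CLAUDE_OPUS_4_6 = Pricing(input_per_million=5.0, output_per_million=25.0)
# The article says output costs "less than $2.5"; 2.5 is used as an upper bound.
MINIMAX_M2_5 = Pricing(input_per_million=0.3, output_per_million=2.5)


def bill(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Return the cost in dollars for a given number of input and output Tokens."""
    return (
        input_tokens / 1e6 * pricing.input_per_million
        + output_tokens / 1e6 * pricing.output_per_million
    )


# Hypothetical monthly workload (an assumption, not a figure from the article).
input_tokens = 100_000_000
output_tokens = 20_000_000

us_bill = bill(CLAUDE_OPUS_4_6, input_tokens, output_tokens)
cn_bill = bill(MINIMAX_M2_5, input_tokens, output_tokens)

print(f"Claude Opus 4.6: ${us_bill:,.2f}")
print(f"MiniMax M2.5:    ${cn_bill:,.2f}")
print(f"Cost ratio:      {us_bill / cn_bill:.1f}x")
```

The exact ratio depends on the mix of input and output Tokens: at the quoted prices, input is roughly 17 times cheaper and output about 10 times cheaper, so any real workload falls somewhere between those two figures.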

A Silicon Valley developer calculated on social media that his team calls about 5 billion Tokens monthly. Using U.S. models would result in a monthly bill of around $2,500, while switching to Chinese models reduces this to just over $200.

This is the underlying logic of the call volume data. It's not that Chinese AI has suddenly become 'smarter' than U.S. AI—it's that Chinese AI has suddenly become an order of magnitude cheaper.

When something is an order of magnitude cheaper, 'good enough' beats 'best.' This isn't nationalist sentiment; it's commercial rationality. Global developers are voting with their feet.

Calculating Smarter, Training Harder

The problem with traditional dense large models is that they activate all parameters for every inference, whether the question is simple or complex. It's like calling everyone to a meeting no matter the topic: inefficiency is inevitable.

Mainstream Chinese large models, including DeepSeek, widely adopt the MoE (Mixture of Experts) architecture. It is akin to installing an access control system in a massive 'company': only the most relevant 'experts' are let in to handle a problem, while the others stay on standby.

Image source: Qwen official website

This 'on-demand activation' model allows models to maintain vast knowledge reserves while compressing actual computation to a fraction of the original.
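The routing idea can be illustrated with a toy example. The sketch below is a generic top-k gating scheme, not the actual implementation used by DeepSeek or Qwen. The eight scalar "experts" and the hard-coded gate scores are hypothetical stand-ins: in a real model the experts are neural sub-networks and a learned router computes the scores for each token.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(gate_scores)), key=lambda i: gate_scores[i], reverse=True)
    top = ranked[:k]
    weights = softmax([gate_scores[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, experts, gate_scores, k=2):
    """Run only the k selected experts and blend their outputs by gate weight."""
    return sum(weight * experts[i](x) for i, weight in route_top_k(gate_scores, k))


# Toy setup: eight "experts", each a simple scaling function (hypothetical).
experts = [lambda x, scale=scale: scale * x for scale in range(1, 9)]

# Hard-coded router scores for one token (a learned router would produce these).
gate_scores = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.7]

selected = route_top_k(gate_scores, k=2)
output = moe_forward(3.0, experts, gate_scores, k=2)
active_fraction = len(selected) / len(experts)

print("Selected experts:", [index for index, _ in selected])
print(f"Output: {output:.4f}")
print(f"Experts active for this token: {active_fraction:.0%}")
```

Only two of the eight experts do any work for this token, so the compute per token scales with the number of selected experts, not with the total number the model holds.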

Take Alibaba's Qwen as an example: its MoE architecture significantly reduces inference costs, which is why Qwen dares to offer a 'free version' while maintaining commercial sustainability.

But that's not all. China's AI has another trump card in manufacturing workshops.

If Silicon Valley labs and Wall Street trading floors are the 'training grounds' for U.S. AI, then China's AI 'training ground' is the world's most complete industrial supply chain.

From precision electronics manufacturing to heavy machinery, from supply chain management to quality inspection, every link in the chain generates real, urgent, and high-value demand for AI deployment. These needs are not simulated lab scenarios but hard metrics on which companies' survival depends.

When AI technology is deployed in these real-world environments, it must confront challenges like data noise, extreme operating conditions, and cost control. This harsh 'combat' actually forces rapid technological iteration.

More importantly, the massive, multidimensional, and high-quality data generated in industrial scenarios serves as valuable fuel for training and optimizing AI models.

In March 2026, DAMO Academy released the XuanTie C950, a CPU based on the RISC-V architecture. For the first time, it ran inference on the full-scale DeepSeek V3 671B model on a single CPU, and it achieved 80 tokens per second on Qwen3 30B.

This marks RISC-V's first true entry into the 'elite circle' of top-tier AI computing power. The XuanTie story reveals a deeper trend: as AI inference demand explodes and physical AI (embodied intelligence, robots) becomes a new battleground, customized, low-power, and scalable chip architectures are gaining historic opportunities.

This systemic capability, spanning energy, chips, models, and applications, ultimately crystallizes into the extreme cost-effectiveness of Tokens. It is not a breakthrough in a single technology but a victory of systems engineering.

Have We Won? Not Yet

Do pretty numbers mean victory? Several facts that cannot be ignored must be made clear first.

First, leading in call volume does not equate to comprehensive technological superiority.

Image source: European electronics industry media 'eeNews Europe' official website

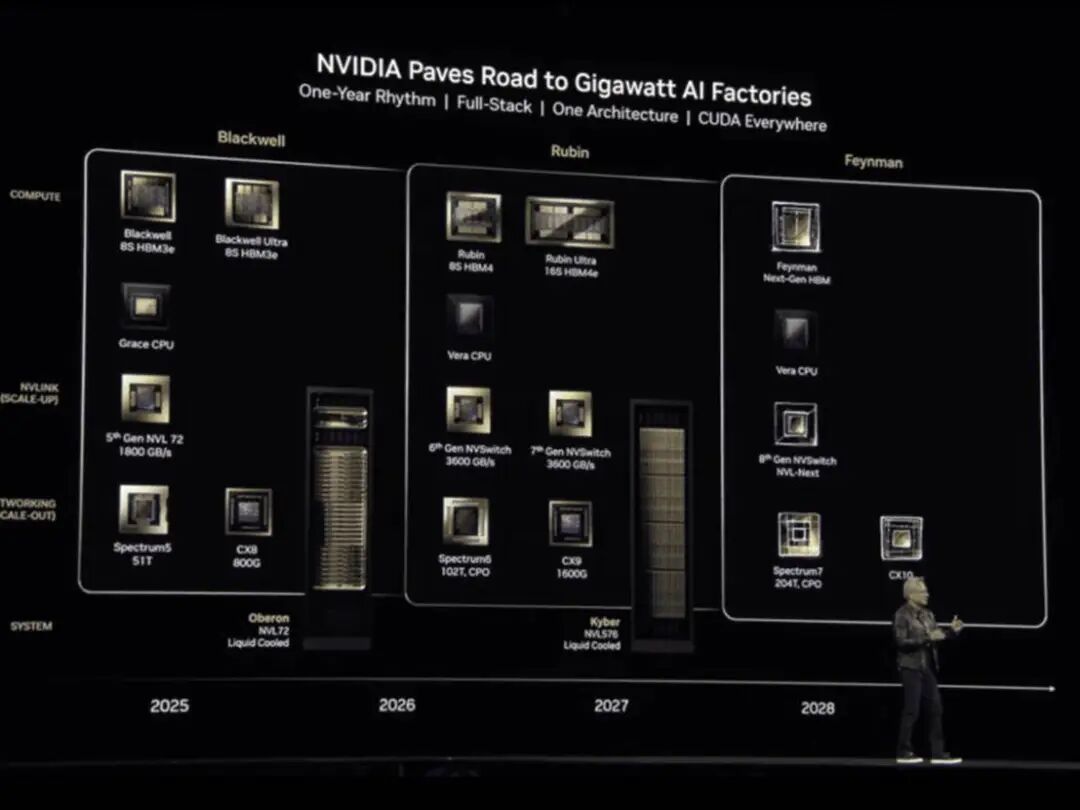

In core chip technology, NVIDIA's upcoming Feynman architecture GPU (expected in 2028) will exceed 5 kilowatts in power consumption per chip while maintaining a leading performance edge. In foundational algorithm innovation, the United States still holds the advantage in original work. Call volume reflects market demand and commercialization capabilities, not the entirety of basic research.

Second, price wars can win temporary gains but not lasting success.

Analyses by Bloomberg and The Wall Street Journal point out that a significant portion of overseas users of Chinese AI models are attracted by 'low prices.'

This means that once U.S. models reduce costs through more advanced chips or efficient architectures, or if China's electricity cost advantage weakens, these users may quickly return.

Third, AI safety and governance are emerging as new competitive dimensions.

The EU's AI Act has taken effect, and U.S. states are accelerating legislation. Chinese AI going global must not only face technological competition but also navigate an increasingly complex regulatory environment.

The Act requires 'high-risk' AI systems to undergo compliance assessments before and after deployment, including reviews of non-discrimination and fundamental rights impacts, with necessary transparency and human oversight mechanisms.

Undeniably, this power shift triggered by 'electricity bills' is rewriting the rules of the global AI industry.

Tokens are becoming the new 'commodities' of the digital age. Just as oil defined the industrial era, computing power defines the intelligent era. And China, with its triple advantages in energy costs, infrastructure capabilities, and manufacturing ecosystems, is becoming the 'world factory' for Tokens.

Notably, this may be China's first time securing an early advantage in the infrastructure layer of an information technology revolution.

In the PC era, China was Intel and Microsoft's 'assembly workshop'; in the mobile internet era, it was iOS and Android's 'app factory.' But in the AI era, from energy to chips to models to applications, China is forming a relatively complete closed loop.

Now, the initial fruits of this loop are visible.

OpenRouter data shows that Chinese models are not only leading in call volume but also becoming increasingly 'globalized' in user structure. U.S. developers use Chinese models for coding, European startups for product development, and Southeast Asian businesses for cost reduction and efficiency gains. This is unprecedented global influence for Chinese AI.

The race isn't over. Bottlenecks in high-end chips, shortcomings in brand recognition, and pressure on industry profits remain challenges to address. But one thing is certain: in the 'heavy industrialization' wave of AI, energy is the ultimate hard constraint.

And China holds the hardest card. The rest depends on how it's played.