AI-Oriented SSDs Go Viral

04/10 2026

As GPU computing power climbs rapidly and HBM becomes the "hard currency" of AI servers, a severely underestimated core component, the SSD optimized for AI workloads, now sits at the center of the industry's tensions. A key reason is that both mainstream storage options, HBM and HDDs, face developmental bottlenecks that are hard to overcome.

01

HBM and HDD: Neither is the Optimal Solution

First, consider HBM. The explosion in GPU computing power means an exponential increase in data-processing capability. As deployments scale from single cards to clusters, and models grow from tens of billions to trillions of parameters, GPUs' data-throughput demands have become increasingly stringent: storage must be not only fast but also stable and extremely low-latency, or expensive compute sits idle. These demands expose the weak points of existing storage solutions. HBM's status as "hard currency" reflects the market's passive choice of high-bandwidth memory. Its core advantage is very high bandwidth, which keeps pace with the GPU's computation and minimizes data-transfer delays; that is why it has become standard in AI servers. However, HBM's cost structure works against large-scale deployment: leaning heavily on HBM drives up overall AI server costs, which deters most enterprises.

Now consider HDDs, the other mainstream storage option. As the long-dominant "capacity champion" of the storage market, HDDs excel in low-cost, high-capacity scenarios such as data archiving and cold storage. Amid today's surge in AI computing, however, their performance shortcomings have become fatal flaws: their mechanical design means slow reads and writes and high latency, so they cannot keep pace with the rate at which GPUs consume data. In AI training, data must be loaded quickly from storage into GPU memory, but the slow response of HDDs leaves compute waiting for data.
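To put the mechanical latency in numbers, here is a back-of-the-envelope sketch. For a small random read, an HDD must first move its head to the right track (seek) and then wait, on average, half a revolution for the sector to arrive under the head. The 7,200 RPM spindle speed and 8 ms average seek time are illustrative assumptions, not figures from the article, and the model ignores transfer time and queueing.

```python
"""Back-of-the-envelope HDD random-read model.

Illustrative assumptions (not from the article): 7,200 RPM spindle,
8 ms average seek, small reads whose transfer time is negligible.
"""


def avg_rotational_latency_ms(rpm: float) -> float:
    """Average wait for the target sector: half of one platter revolution."""
    ms_per_revolution = 60_000.0 / rpm
    return ms_per_revolution / 2.0


def random_read_iops(rpm: float, avg_seek_ms: float) -> float:
    """IOPS for one drive with one outstanding request: 1 / (seek + rotation)."""
    service_time_ms = avg_seek_ms + avg_rotational_latency_ms(rpm)
    return 1000.0 / service_time_ms


if __name__ == "__main__":
    rotation = avg_rotational_latency_ms(7200)
    iops = random_read_iops(7200, avg_seek_ms=8.0)
    print(f"average rotational latency: {rotation:.2f} ms")  # 4.17 ms
    print(f"random-read IOPS per drive: {iops:.0f}")         # 82
```

Roughly 12 ms per random read, or on the order of a hundred IOPS per drive, is far too slow to feed a GPU; an SSD has no seek or rotational component at all.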

The core tension in the industry is therefore clear: GPUs' "insatiable computing demand" clashes with the "limited adaptability" of existing storage. HBM solves "speed" but not "scale" or "cost"; HDDs solve "scale" and "cost" but not "speed." Sustained growth of the AI industry requires a storage solution that balances high-speed responsiveness, massive capacity, and reasonable cost.

It is amid this tension that the value of SSDs is coming into focus.

02

Why Are AI-Oriented SSDs "Going Viral"?

What problems must AI-oriented SSDs solve?

Industry insiders told Semiconductor Industry Insights that AI-oriented SSDs are custom-built for large-scale model training/inference, offering "high performance + high concurrency + low latency + high endurance + massive capacity." In contrast, general-purpose high-capacity SSDs merely offer "large capacity"—a necessary but insufficient condition. Below are key characteristics of these SSDs:

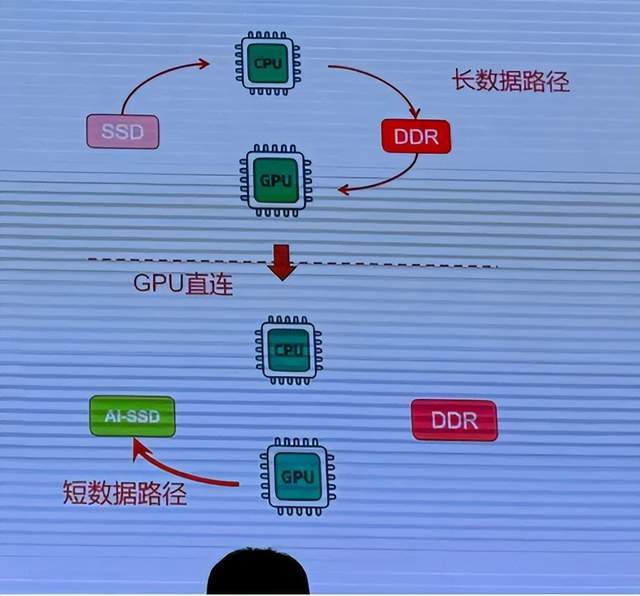

Breaking CPU bottlenecks so high-end GPU computing power does not sit idle. A GPU's core value lies in its computing output, but how effective that output is depends on how well data transfer and storage work together with it. In traditional architectures, data bound for the GPU passes through multiple stages, "SSD → CPU → memory → GPU," and the CPU's bandwidth has become a well-known bottleneck. The core breakthrough of AI-oriented SSDs is hardware-level "direct collaboration": interface technology lets the GPU bypass the CPU and open a direct data channel to the SSD. The payoff goes beyond raw speed. Transfer times drop sharply, the GPU no longer idles while waiting for data, and costly high-end compute is no longer wasted, letting the core chips deliver their full performance.
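A minimal sketch of why removing the CPU hop matters, using a store-and-forward copy model. In the traditional path, data first lands in a host-memory buffer and a second copy moves it to the GPU; in the direct path, the SSD DMAs straight into GPU memory. NVIDIA's GPUDirect Storage is one real-world example of the direct approach, though the article does not name a specific technology. All bandwidth and overhead figures below are hypothetical, and real software stacks pipeline chunks, so the actual gain is smaller than this worst case.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Hop:
    """One copy step on the data path (all figures hypothetical)."""
    name: str
    gb_per_s: float     # sustained bandwidth of this hop
    overhead_us: float  # fixed per-transfer setup cost (syscalls, DMA setup)


def transfer_time_ms(size_gb: float, hops: list[Hop]) -> float:
    """Store-and-forward model: each hop completes before the next begins."""
    total_s = 0.0
    for hop in hops:
        total_s += size_gb / hop.gb_per_s + hop.overhead_us * 1e-6
    return total_s * 1e3


# Traditional path: SSD -> host DRAM bounce buffer -> GPU memory.
BOUNCE_PATH = [
    Hop("SSD -> host DRAM", gb_per_s=14.0, overhead_us=20.0),
    Hop("host DRAM -> GPU", gb_per_s=25.0, overhead_us=10.0),
]

# Direct path: the SSD DMAs straight into GPU memory, skipping the CPU copy.
DIRECT_PATH = [
    Hop("SSD -> GPU", gb_per_s=14.0, overhead_us=20.0),
]

if __name__ == "__main__":
    for label, path in (("bounce", BOUNCE_PATH), ("direct", DIRECT_PATH)):
        print(f"{label}: {transfer_time_ms(1.0, path):.1f} ms per GB")
```

The point of the model is structural: the direct path removes an entire copy and its setup cost, and it also frees host memory bandwidth that the bounce buffer would otherwise consume.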

Breaking GPU memory bottlenecks. Training and inference for trillion-parameter models require terabyte-scale memory. Relying solely on more HBM would double GPU costs and run into semiconductor manufacturing limits, putting high-end GPU cluster investments out of reach for most enterprises. AI-oriented SSDs are designed as a "memory-like layer" between HBM and traditional storage, a joint innovation across storage and computing devices. They act as both extended GPU memory and a data cache. Rather than replacing HBM or DRAM, they extend the storage hierarchy from memory down to SSDs, forming a "DRAM + HBM + SSD" tiered storage system that optimizes overall efficiency.
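A toy model of such a tiered hierarchy, as a sketch under simplifying assumptions: blocks live in the fastest tier with room, a read promotes a block to the top tier, and overflow pushes the least recently used block one tier down. The tier names, the capacities (counted in blocks), and the LRU policy are all illustrative choices for this sketch; real systems use far more elaborate placement and prefetching.

```python
from __future__ import annotations

from collections import OrderedDict


class TieredStore:
    """Toy HBM -> DRAM -> SSD hierarchy with LRU demotion between tiers.

    Capacities are counted in blocks and are purely illustrative.
    """

    def __init__(self, tiers: list[tuple[str, int]]):
        self.names = [name for name, _ in tiers]
        self.capacity = [cap for _, cap in tiers]
        # One OrderedDict per tier; insertion order tracks recency (oldest first).
        self.blocks: list[OrderedDict] = [OrderedDict() for _ in tiers]

    def _place(self, level: int, key, value) -> None:
        """Put a block at `level`, cascading LRU victims to slower tiers."""
        tier = self.blocks[level]
        tier[key] = value
        tier.move_to_end(key)
        if len(tier) > self.capacity[level]:
            victim_key, victim_value = tier.popitem(last=False)
            if level + 1 < len(self.blocks):
                self._place(level + 1, victim_key, victim_value)
            # A victim of the last tier is dropped here; a real SSD tier
            # would be backed by a persistent copy of the data.

    def put(self, key, value) -> None:
        for tier in self.blocks:  # avoid stale copies in other tiers
            tier.pop(key, None)
        self._place(0, key, value)

    def get(self, key):
        """Return (tier_name, value) where the block was found, then promote it."""
        for level, tier in enumerate(self.blocks):
            if key in tier:
                value = tier.pop(key)
                self._place(0, key, value)
                return self.names[level], value
        raise KeyError(key)


if __name__ == "__main__":
    store = TieredStore([("HBM", 2), ("DRAM", 4), ("SSD", 100)])
    for block_id in range(10):
        store.put(block_id, f"weights-{block_id}")
    print(store.get(0))  # ('SSD', 'weights-0'): oldest block was demoted twice
    print(store.get(0))  # ('HBM', 'weights-0'): promoted by the first read
```

The SSD tier here holds everything that does not fit in the faster tiers, which is the role the article assigns to AI-oriented SSDs: extending capacity without replacing HBM or DRAM.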

Built-in DSP/ASIC for near-storage computing. GPUs handle both high-end work such as core matrix operations and simpler tasks such as data preprocessing and optimizer-state updates, and spending GPU cycles on the latter wastes valuable compute. AI-optimized SSDs incorporate DSP/ASIC computing units that support near-storage computing, offloading these simpler tasks from the GPU so the SSD executes them locally and work is divided sensibly across devices. This frees the GPU from redundant computation so it can focus on its core workload. It also cuts the delay and overhead of moving data and raises the compute density of the system as a whole.
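The article's examples of offloaded work are data preprocessing and optimizer-state updates; the sketch below uses a simpler stand-in, a record filter, to illustrate the core effect of near-storage computing: when a compute unit next to the NAND discards unneeded records, only the survivors cross the bus to the host or GPU. The record layout and the score threshold are hypothetical.

```python
from __future__ import annotations

import struct

# Hypothetical fixed-size record: uint32 sample id, uint32 quality score (0-99).
RECORD = struct.Struct("<II")


def make_dataset(n: int) -> bytes:
    """Build n packed records with scores cycling through 0..99."""
    return b"".join(RECORD.pack(i, i % 100) for i in range(n))


def host_side_filter(raw: bytes, min_score: int) -> tuple[list[int], int]:
    """Conventional path: move every byte to the host, then filter there."""
    bytes_moved = len(raw)
    kept = [rid for rid, score in RECORD.iter_unpack(raw) if score >= min_score]
    return kept, bytes_moved


def near_storage_filter(raw: bytes, min_score: int) -> tuple[list[int], int]:
    """Offloaded path: filter inside the drive, then move only matching records."""
    kept = [rid for rid, score in RECORD.iter_unpack(raw) if score >= min_score]
    bytes_moved = len(kept) * RECORD.size
    return kept, bytes_moved


if __name__ == "__main__":
    data = make_dataset(10_000)
    host_ids, host_bytes = host_side_filter(data, min_score=90)
    drive_ids, drive_bytes = near_storage_filter(data, min_score=90)
    assert host_ids == drive_ids  # same answer either way
    print(f"host-side filter moves {host_bytes:,} bytes")      # 80,000
    print(f"near-storage filter moves {drive_bytes:,} bytes")  # 8,000
```

Both functions run the same Python logic; the only thing the toy models is where the filter runs, and therefore how many bytes are counted as crossing the bus.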

Industry insiders told Semiconductor Industry Insights that AI-optimized SSDs, for the first time, fundamentally integrate storage into the computing system, letting data participate directly in AI training and inference. They perfectly match the GPU's high-frequency, highly concurrent access patterns, ultimately improving performance and lowering overall TCO (total cost of ownership).

03

SSD Shortages Emerge as 2026 Sees Mass Adoption

Explosive storage demand from AI servers has caused severe HDD shortages, with delivery lead times stretching beyond two years. Cloud providers are "urgently placing additional orders" for high-capacity enterprise SSDs, and some manufacturers' 2026 QLC NAND flash capacity is already fully booked. Supply-chain sources say cloud providers must wait in line, and because HDD supply is highly concentrated and built to order, the shortage is worsening. Some cloud providers have signed long-term agreements with suppliers covering 2026 to secure HDD and enterprise SSD supply.

As a result, SSDs for the AI era have become a must-win sector for storage giants, GPU leaders, and cloud providers alike. The world's leading storage manufacturers are entering the fray along two distinct technical routes.

The first route is deep collaboration with GPU leader NVIDIA on SSDs optimized for AI and data centers. The core goal is to relieve the limits that HBM capacity places on GPUs as workloads shift from "compute-intensive" to "data-intensive." By placing more data close to compute, these SSDs expand the memory space GPUs can use, support access to larger datasets, and significantly boost GPU utilization.

Along this route, Kioxia and SK Hynix have announced progress on their collaborations. In March 2026, Kioxia unveiled a new category of ultra-high-IOPS SSD developed for NVIDIA's "Storage-Next" initiative, with evaluation samples expected by late 2026. SK Hynix, for its part, announced in December 2025 that it is working with NVIDIA on an AI-focused SSD, internally codenamed "AI-NP" (AI NAND Performance) within its "AI-N Family" product line. The idea is to rework the NAND and controller architecture to remove data-transfer bottlenecks between AI compute and storage and meet the extreme throughput demands of large-scale AI inference. SK Hynix's product will use a PCIe Gen6 interface, with initial samples planned for late 2026 targeting 25 million IOPS, an 8–10x leap.
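IOPS translates into bandwidth only once a block size is fixed, and the announcement as reported here does not state one. The worked arithmetic below assumes, for this sketch only, an x4 PCIe Gen6 link at 64 GT/s per lane (about 32 GB/s per direction before protocol overhead). It checks which block sizes are compatible with 25 million IOPS and what baseline an 8–10x leap implies.

```python
"""Worked arithmetic for the 25-million-IOPS target.

Assumptions (not from the article): x4 link width, raw PCIe Gen6 rate of
64 GT/s per lane, protocol overhead ignored.
"""

TARGET_IOPS = 25_000_000
LANES = 4                      # assumed link width
PCIE6_GT_PER_S_PER_LANE = 64   # PCIe 6.0 raw signaling rate
LINK_GB_PER_S = PCIE6_GT_PER_S_PER_LANE * LANES / 8  # 32 GB/s per direction


def iops_to_gb_per_s(iops: float, block_bytes: int) -> float:
    """Bandwidth needed to sustain `iops` operations of `block_bytes` each."""
    return iops * block_bytes / 1e9


def max_block_bytes(link_gb_per_s: float, iops: float) -> float:
    """Largest block size the link can carry at the given IOPS."""
    return link_gb_per_s * 1e9 / iops


if __name__ == "__main__":
    for block in (512, 4096):
        print(f"{block:>5} B blocks: {iops_to_gb_per_s(TARGET_IOPS, block):.1f} GB/s")
    print(f"link ceiling: {max_block_bytes(LINK_GB_PER_S, TARGET_IOPS):.0f} B per I/O")
    for factor in (8, 10):
        print(f"{factor}x leap implies a baseline of {TARGET_IOPS / factor:,.0f} IOPS")
```

Under these assumptions, 25 million 4 KiB operations per second (about 102 GB/s) would exceed the link roughly threefold, so the figure presumably refers to smaller transfers (about 1.28 KB or less per I/O) or a wider link; the reporting does not say which.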

The second route pursues breakthroughs in both capacity and performance to create SSDs that are high-performance and high-capacity at once. Samsung, Huawei, and Micron are accelerating their product plans in this area. In October 2025, Samsung outlined its roadmap: a 256TB PCIe 6.0 SSD launching in 2026 and a 512TB version in 2027, alongside CXL-based CMM-D memory modules compatible with the CXL 3.1 and PCIe 6.0 standards, offering doubled performance.

Huawei moved early, launching its line of high-end SSDs for the AI era in August 2025: the high-performance HUAWEI OceanDisk EX560 and SP560 series and the high-capacity HUAWEI OceanDisk LC560 series, with single-drive capacities of up to 245TB. According to Huawei, these products break through the performance and capacity bottlenecks of traditional AI storage and comprehensively improve AI training efficiency and the inference experience.

Meanwhile, in August 2025 in Boise, Idaho, Micron unveiled three data center SSDs built on its G9 NAND: the flagship 9650, the high-density 6600 ION, and the mainstream 7600 series. Built around what Micron bills as world-first PCIe 6.0 technology, industry-leading capacity density, and ultra-low latency, these SSDs are aimed at AI computing infrastructure. The 9650 and 7600 series are now sampling in E3.S/E1.S form factors, while the 122TB 6600 ION entered mass production in Q4 2025, with a 245TB high-capacity version planned for H1 2026.

Judging from these leading manufacturers' technical plans and product roadmaps, 2026 is clearly a pivotal year for the commercialization of AI SSD technology.

Industry insiders told Semiconductor Industry Insights that AI SSDs now demonstrate strong practical value in three core AI scenarios:

1. AI inference systems. Whether ChatGPT-style chatbots or AI features in workplace tools, these systems need high-frequency KV cache access to handle millions of concurrent requests. SSDs' low latency and fast response enable efficient inference, while their massive capacity gives AI a "long-term memory" that avoids redundant computation and drastically reduces costs.

2. Real-time vector database retrieval. Vector databases underpin AI semantic search and recommendation systems, demanding extreme throughput and response times. SSDs' high concurrency and low latency double real-time retrieval efficiency.

3. AI data appliances. In training scenarios involving massive data, these appliances must balance performance and cost. SSDs improve both performance and TCO and allow more rational data tiering that keeps training fast while cutting hardware costs, making them well suited to enterprise AI training platforms.
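For the inference scenario, a sketch of why KV cache capacity quickly outgrows HBM: a transformer stores one key vector and one value vector per layer, per KV head, for every token in context, so the cache grows linearly with both context length and concurrency. The model shape (80 layers, 8 KV heads, head dimension 128, FP16) and the workload (1,000 sessions of 8,192 tokens) are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ModelShape:
    """Hypothetical transformer dimensions relevant to the KV cache."""
    num_layers: int
    num_kv_heads: int
    head_dim: int
    bytes_per_elem: int  # 2 for FP16/BF16


def kv_bytes_per_token(m: ModelShape) -> int:
    """Keys + values (factor 2) for every layer and KV head."""
    return 2 * m.num_layers * m.num_kv_heads * m.head_dim * m.bytes_per_elem


def kv_cache_bytes(m: ModelShape, sessions: int, tokens_per_session: int) -> int:
    """Total cache for `sessions` concurrent contexts of equal length."""
    return kv_bytes_per_token(m) * sessions * tokens_per_session


if __name__ == "__main__":
    shape = ModelShape(num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_elem=2)
    per_token = kv_bytes_per_token(shape)
    total = kv_cache_bytes(shape, sessions=1_000, tokens_per_session=8_192)
    print(f"KV cache per token: {per_token / 1024:.0f} KiB")  # 320 KiB
    print(f"total for workload: {total / 1e12:.2f} TB")       # 2.68 TB
```

At terabyte scale, keeping reusable context on a large SSD tier instead of recomputing it is the trade-off the inference scenario above describes.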

04

AI-Oriented SSDs Enter Industry Boom

Samsung and SK Hynix, which long prioritized DRAM investments, are now actively adjusting strategies to address storage chip market shifts driven by surging AI server demand.

Samsung Electronics began mass production of its 286-layer V9 NAND in September 2024 but initially ran it only on early production lines at its Pyeongtaek campus, with monthly capacity of around 15,000 wafers. As AI-driven storage demand rises, Samsung is accelerating V9 capacity expansion, focusing on its X2 production line in Xi'an, China. The Xi'an NAND fab recently completed process upgrades and has reached mass production of 236-layer eighth-generation V-NAND (V8 NAND).

This upgrade, begun in 2024, converted lines producing V6 (128-layer) NAND in order to improve product performance, production efficiency, and capacity competitiveness. With V8 NAND now in mass production, the Xi'an fab's next target is 286-layer V9 NAND; the relevant lines at the X2 fab plan to complete the transition and begin mass production within 2026.

SK Hynix is also expanding aggressively. It plans to begin investing in conversion to its 321-layer ninth-generation NAND in Q2 of this year, targeting about 30,000 wafers per month of ninth-generation capacity at its Cheongju M15 fab, up 50% from the current level of about 20,000 wafers per month.

Kioxia plans to double capacity by FY2029 (vs. FY2024) by expanding production lines at its Yokkaichi and Kitakami plants to meet growing AI data center NAND flash demand. Additionally, Kioxia and SanDisk plan a joint NAND fab in the U.S.

Technical progress depends not only on architectures and standards but also on optimizing the underlying storage media. Kioxia CEO Nobuo Hayasaka has said that QLC SSDs are the AI industry's best choice. Although SSD performance declined with each step from SLC to MLC, TLC, and finally QLC, by 2025 QLC SSDs were faster than the TLC SSDs of 2017. Today's QLC SSDs offer sequential read/write speeds of around 7,000 MB/s, fast enough for storing and retrieving data for large AI models.
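To make ~7,000 MB/s concrete, the sketch compares the idealized time to stream a hypothetical 1 TB training shard from one QLC SSD at that rate with one HDD at an assumed ~250 MB/s sequential rate. The HDD figure and the shard size are assumptions for this example, not numbers from the article.

```python
def read_time_s(size_bytes: float, mb_per_s: float) -> float:
    """Idealized sequential read time (MB = 10**6 bytes)."""
    return size_bytes / (mb_per_s * 1e6)


DATASET_BYTES = 1e12      # hypothetical 1 TB shard
QLC_SSD_MB_PER_S = 7_000  # sequential rate cited in the text
HDD_MB_PER_S = 250        # assumed HDD sequential rate

if __name__ == "__main__":
    ssd = read_time_s(DATASET_BYTES, QLC_SSD_MB_PER_S)
    hdd = read_time_s(DATASET_BYTES, HDD_MB_PER_S)
    print(f"QLC SSD: {ssd:.0f} s, HDD: {hdd:.0f} s ({hdd / ssd:.0f}x slower)")
    # QLC SSD: 143 s, HDD: 4000 s (28x slower)
```

This is the best case for the HDD; the random access patterns common in AI workloads widen the gap much further.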

QLC (Quad-Level Cell) NAND has become a mainstream choice for AI SSDs because it closely matches the core demands of AI workloads:

1. Read-optimized: QLC NAND is best suited to read-intensive workloads, and AI inference servers mainly read and process large datasets, with read-heavy, write-light access patterns.

2. High density: QLC NAND offers higher storage density at a lower cost per bit than TLC NAND, making it well suited to AI servers, cloud computing, and big data analytics.

3. Energy efficiency: Solidigm research shows that QLC SSDs are 19.5% more energy-efficient than TLC SSDs and 79.5% more efficient than hybrid TLC SSD + HDD solutions, a critical factor in large-scale AI inference server deployments.
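The density point comes down to bits per cell: each extra bit doubles the number of charge levels a cell must hold and distinguish, which is also why performance and endurance fall from SLC to QLC. This sketch computes voltage levels per cell and the raw capacity gain of QLC over TLC for the same number of cells; real die density also depends on layer count and cell size, which it ignores.

```python
CELL_BITS = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4}


def voltage_levels(bits_per_cell: int) -> int:
    """A cell storing n bits must resolve 2**n distinct threshold-voltage states."""
    return 2 ** bits_per_cell


def capacity_gain(new_bits: int, old_bits: int) -> float:
    """Relative capacity increase for the same number of cells."""
    return new_bits / old_bits - 1.0


if __name__ == "__main__":
    for name, bits in CELL_BITS.items():
        print(f"{name}: {bits} bit(s)/cell, {voltage_levels(bits)} voltage levels")
    gain = capacity_gain(CELL_BITS["QLC"], CELL_BITS["TLC"])
    print(f"QLC vs TLC, same cell count: +{gain:.1%} capacity")  # +33.3% capacity
```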

Intel research further indicates that QLC NAND SSDs can saturate the read bandwidth of a PCIe 4.0 bus with latency and QoS close to those of TLC, and with response times orders of magnitude shorter than HDDs.
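A quick check of what saturating PCIe 4.0 means, assuming the x4 link width typical of NVMe SSDs (the width is an assumption for this calculation): PCIe 4.0 signals at 16 GT/s per lane with 128b/130b encoding.

$$
B_{\text{PCIe 4.0}\,\times 4} = \frac{16\ \text{GT/s} \times 4 \times \tfrac{128}{130}}{8\ \text{bit/byte}} \approx 7.88\ \text{GB/s},
\qquad
\frac{7.0\ \text{GB/s}}{7.88\ \text{GB/s}} \approx 0.89
$$

So the ~7,000 MB/s QLC figure cited above already uses roughly 89% of the raw x4 link before packet-level protocol overhead, which is consistent with the claim that QLC SSDs can saturate the bus on reads.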