AI Smartly Captures Cloud Computing

08/29 2025

Author | Hao Xin

Editor | Wang Pan

Large models have opened a window of growth for AI clouds, and the cloud computing market is undergoing profound transformations at this pivotal juncture.

Cloud computing is gradually evolving from "resource-oriented cloud services" to "resource+development-oriented cloud services." In the past, cloud computing focused mainly on resources, with the primary objective of addressing resource bottlenecks. However, merely providing underlying resources is no longer sufficient. Cloud providers must now not only offer computing power but also turn the cloud into an intelligent development and runtime environment.

As Shen Dou, Executive Vice President of Baidu Group and President of Baidu Intelligent Cloud Business Group, emphasized at the 2025 Baidu Cloud Intelligence Conference: "Enterprises' demands for infrastructure have shifted from 'cost reduction and efficiency enhancement' to 'direct value creation.' All intelligence generated by computation will be encapsulated into Agents, participating in value creation and delivery. An enterprise's AI cloud will no longer serve as a cost center but will become a new profit center."

Customers are migrating to the cloud not just to 'save money' but to use AI capabilities to solve business challenges, create products and services, enhance user experience, and explore new business models.

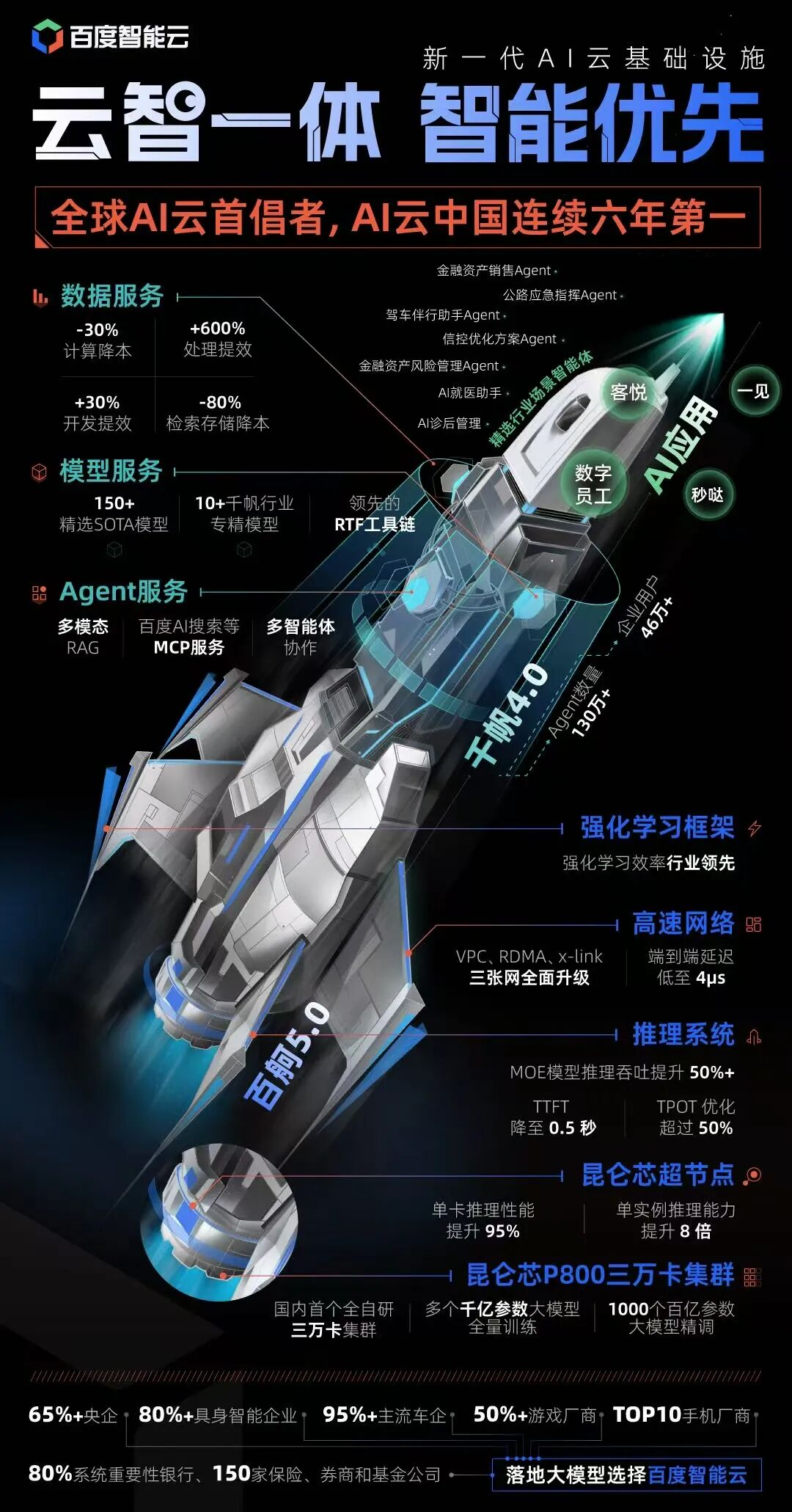

Take Baidu Qianfan, the enterprise-grade AI development platform, as an example: it currently has over 460,000 enterprise users, who have developed over 1.3 million Agents.

Change fuels progress, and progress ensures longevity. As new opportunities arise in cloud computing, another round of competition has begun, and the vendors that have successfully shifted to the AI track are leading the race.

IDC reports indicate that in the Chinese AI public cloud market in 2024, Baidu Intelligent Cloud accounted for a 24.6% market share, ranking first for six consecutive years. In markets for AI public cloud services such as conversational AI, intelligent speech, and natural language processing, Baidu Intelligent Cloud ranked among the top two.

Years on, the domestic cloud computing market has entered a new reshuffling cycle, and game changers are on the horizon.

A Cloud Born for AI

Since the inception of the "Cloud Intelligence Integration" strategy and architecture, Baidu Intelligent Cloud has been a cloud designed specifically for AI.

At the end of 2022, generative AI and large model technologies, epitomized by ChatGPT, exploded globally. Baidu Intelligent Cloud swiftly followed suit, releasing the domestic large model series, Wenxin Large Model, in March 2023.

By fully embracing large models and AI, Baidu Intelligent Cloud once again stands at the forefront of AI technological evolution. Drawing on its full-stack layout and its optimization of key elements, it has led the transition from the "Cloud Intelligence Integration" architecture to "AI-First," supporting the intelligent reconstruction of industries with large models at the core.

'In the era of the intelligent economy, there must be new infrastructure to support it, and that is the AI-First cloud.'

Based on this, at the 2025 Baidu Cloud Intelligence Conference, Baidu Intelligent Cloud's intelligent infrastructure underwent a significant upgrade.

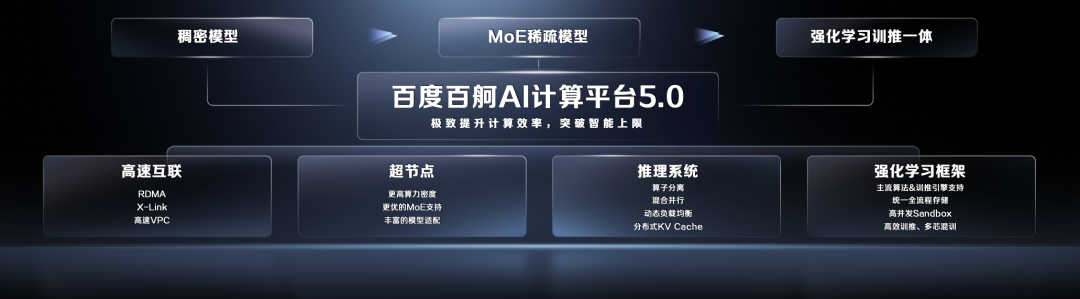

In AI computing, Baidu Baige AI Computing Platform released version 5.0, creating an 'intelligent factory' for AI training and inference. It achieves extreme optimization and upgrades in computing power, inference, and reinforcement learning, enabling large models to not only run but also operate swiftly, efficiently, and intelligently.

In networking, faster communication and lower latency improve model training and inference efficiency. In computing power, Kunlun Core super-nodes have launched on public cloud services, making supercomputing power officially accessible. In the inference system, three core strategies ('decoupling,' 'adaptive,' and 'intelligent scheduling') increase throughput and reduce latency. In integrated training and inference, the newly released Baige reinforcement learning framework squeezes the most out of computing resources and improves training and inference efficiency.

Currently, the largest open-source models in the industry have reached 1 trillion parameters. With Kunlun Core super-nodes, anyone can run such a model in just a few minutes using a single cloud instance.

Above the computing layer, AI development is equally important. At the conference, Baidu Intelligent Cloud Qianfan was upgraded to version 4.0. It serves as the centralized outlet for Baidu's AI capabilities and is evolving into a one-stop operating system for enterprises to build Agents and implement large model applications.

In terms of models, it provides over 150 models, including deep reasoning, visual understanding, visual generation, speech, and more, ensuring that enterprises and developers can access state-of-the-art (SOTA) models promptly. The recently launched Baidu self-developed video generation model, Baidu Steam Engine, is now fully integrated into the Qianfan 4.0 platform. Additionally, the platform has released a series of self-developed industry-specific models, including the Qianfan Huijin financial industry models and the Qianfan-VL series of visual understanding models, which, with only 10 billion parameters, achieve results on specific tasks that surpass models with over 100 billion parameters.

To address the difficulty enterprises face with reinforcement training, Qianfan introduces reinforcement learning and feedback mechanisms, so that models can be optimized through 'trial and error + feedback' on a small amount of data (just a few hundred samples) and aligned precisely with business goals. This means that small and medium-sized enterprises and traditional industries can quickly obtain 'exclusive models' tailored to their own businesses without requiring large annotation teams or data accumulation.

While large models are powerful, they lack the 'memory' and understanding of internal enterprise knowledge, leading to 'hallucinations' or irrelevant answers. How to accurately utilize the vast amount of data accumulated by enterprises is the key to the practical application of intelligent Agents.

The Qianfan Agent service platform in version 4.0 has been upgraded to multi-modal RAG (Retrieval Augmented Generation), supporting not just traditional text retrieval but also multi-modal content such as images, tables, PDFs, and structured data, comprehensively covering the diverse knowledge forms of enterprises. At the same time, MCP Servers such as Baidu AI Search have been newly opened, allowing more enterprises to benefit from Baidu's 25 years of search technology accumulation. These servers are becoming 'essential plugins' in Agent development.
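To make the retrieval step concrete, here is a minimal sketch of retrieval-augmented generation over mixed-modality knowledge. It is not Qianfan's API: the `Chunk` type, the made-up sample knowledge base, and the bag-of-words scoring are all assumptions for illustration. A production system would more likely use learned embeddings and a vector index, and non-text items such as tables or images are represented here simply by a textual description.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Chunk:
    modality: str  # e.g. "text", "table", "image" (hypothetical labels)
    content: str   # the text itself, or a textual description of the item


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[Chunk], k: int = 2) -> list[Chunk]:
    """Return the k chunks most similar to the query, best first."""
    q = Counter(tokenize(query))
    ranked = sorted(
        chunks,
        key=lambda c: cosine(q, Counter(tokenize(c.content))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, chunks: list[Chunk]) -> str:
    """Assemble the retrieved context and the question into one prompt."""
    context = "\n".join(f"[{c.modality}] {c.content}" for c in chunks)
    return f"Answer using only the context below.\n{context}\nQuestion: {query}"


# Made-up sample data, for illustration only.
KNOWLEDGE_BASE = [
    Chunk("text", "Refund requests must be filed within 30 days of purchase."),
    Chunk("table", "Shipping fee table: standard 5 yuan, express 15 yuan."),
    Chunk("image", "Diagram of the warehouse floor plan with loading docks."),
]

question = "How much is the express shipping fee?"
top = retrieve(question, KNOWLEDGE_BASE, k=1)
prompt = build_prompt(question, top)
```

The prompt built this way would then be sent to whichever model the Agent uses. Grounding the answer in retrieved enterprise content is what is meant to reduce the 'hallucinations' described above.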

Based on the upgraded computing power and development platform, Baidu Intelligent Cloud has launched a series of 'out-of-the-box' Agent applications, such as the digital employee 'AI Wu Yanzu' and the 'AI Master Craftsman' based on the Huiboxing digital human technology.

Baidu Intelligent Cloud also officially introduced the Yijian·Process Compliance Analysis capability of its Yijian visual large model platform. After a user uploads a standard operation video, an SOP detection task can be generated in a few minutes, making Agents comparable to 'AI Master Craftsmen.' This addresses a practical problem on industrial production lines, where experienced craftsmen are in short supply and their experience is difficult to pass on, and it helps enterprises reduce costs and improve efficiency through AI.

Collaborating with AI education customer Yashi Education, Baidu Intelligent Cloud developed the 'Wu Yanzu Digital English Coach,' leveraging Baidu's self-developed end-to-end speech and semantics large model, Huiboxing digital human capabilities, and a suite of AI technologies. From today, the Wu Yanzu digital human will officially serve as one of the first recommendation officers for Baidu Intelligent Cloud digital employees.

Baidu's Choice

For a long time, the development of cloud computing has focused more on the 'cloud' itself.

That is, vendors kept stacking database, middleware, container, microservice, and other capability modules on top of basic resources, following a cloud computing development path centered on resources and driven by functional expansion. By building full-stack capabilities for AI computing, AI development, and AI applications, it meets the diverse needs of enterprises in the early stages of digital transformation.

Large models represent a turning point in the evolution of cloud computing. The core issue facing enterprises is no longer 'whether there are cloud resources' but 'how to use intelligence,' that is, how to truly leverage AI to create business value.

Traditional models have been unable to satisfy enterprises' deep demands for intelligence. Today's cloud must be built around AI-native principles, meaning that cloud platforms must not only provide computing power but also offer a comprehensive set of capabilities, including AI-oriented infrastructure, development toolchains, model services, data pipelines, and security assurance.

The transition from traditional cloud to AI cloud represents a 'critical leap,' with the core reason being that AI has fundamentally raised the bar for cloud computing.

New competitive factors have emerged under AI's influence, such as whether a company possesses self-developed or deeply adapted AI chips, whether it has a cloud-intelligence integrated architecture that meets AI cloud-native requirements, whether it possesses distributed training, inference acceleration, and large-scale cluster scheduling capabilities, whether it has built a full-process toolchain from data annotation, model training, fine-tuning, deployment to application development, and whether it has substantial funds for sustainable investment.

As a result, cloud computing competition remains what it has always been: a game for top players.

All past events are merely a prelude. Achieving a perfect leap is not an overnight process but is supported by forward-looking layouts and long-term technical accumulation.

Baidu Intelligent Cloud's forward-looking layout for AI cloud can be traced back to its inception. In its own words, 'From the first day of doing cloud, Baidu believed that AI would change cloud computing.'

In 2019, Baidu Intelligent Cloud constructed the 'Cloud Intelligence Integration' architecture to support Baidu's core businesses such as Baidu Search and Baidu Netdisk. In 2020, Baidu Intelligent Cloud officially proposed the development strategy of 'Cloud Intelligence Integration,' laying the foundation for the subsequent shift to the AI cloud track.

In 2022, Baidu Intelligent Cloud took the lead in building the largest GPU cluster in China at that time, transitioning from a CPU cloud service-oriented platform to a GPU cloud service-oriented platform.

The so-called 'integration' refers to achieving architectural synergy, capability integration, and end-to-end optimization across various levels of cloud computing.

A full-stack layout builds a systematic advantage. If only upper-layer applications are developed without deep optimization of underlying computing power and platform tools, performance, cost, and flexibility will be limited. Conversely, if only the computing power layer is developed without development and applications, cloud vendors can only stay at the level of 'selling resources' and fail to form differentiated value.

It is under this philosophy that Baidu Intelligent Cloud can reconstruct cloud services from an AI perspective when new opportunities arise. On the one hand, it swiftly completes a full-stack upgrade from computing power, data, models to applications, reshaping the architecture, service model, and business logic of cloud computing. On the other hand, it also drives the entire intelligent cloud market to make a comprehensive transition centered on AI.

Standing at a new development node, Baidu has been contemplating what truly constitutes AI cloud and how to genuinely achieve 'AI-First.'

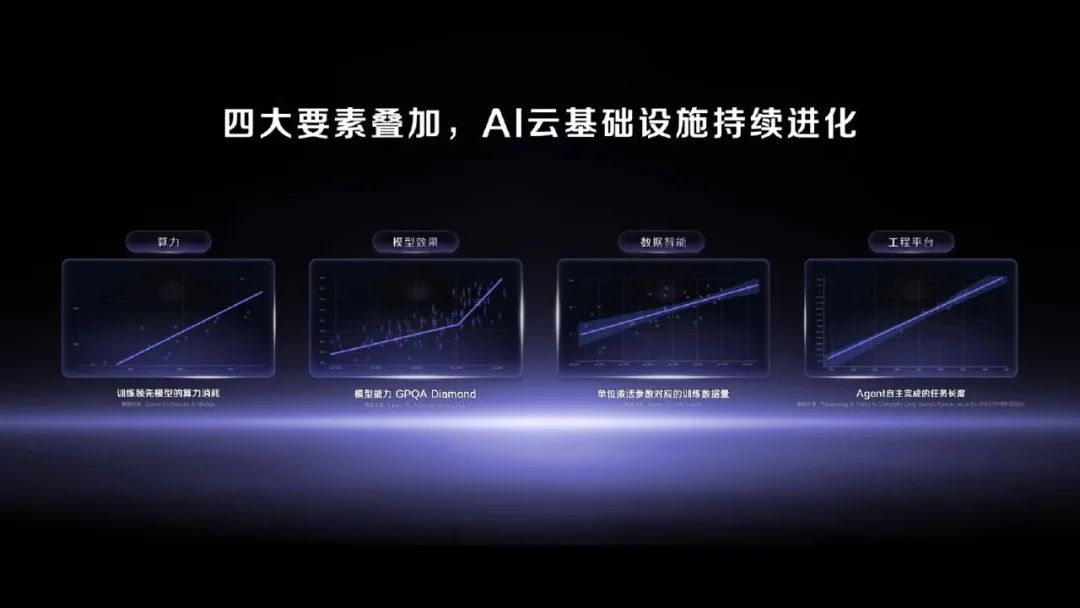

After repeated practice, Baidu Intelligent Cloud has concluded that computing power, models, data, and engineering capabilities constitute the four core elements of AI cloud.

Computing power keeps scaling up, data keeps supplying raw material, model intelligence keeps breaking through, and the engineering platform integrates the first three through powerful scheduling and orchestration capabilities. Together they form a unified and continuously evolving AI cloud infrastructure that supports the rapid growth of large model applications such as Agents.

Implementation is King

Since the advent of large models, Xin Zhou, General Manager of Baidu Intelligent Cloud's AI and Large Model Platform, has been at the forefront, keenly observing changes in customer needs over the past three years.

He stated, 'Last year and the year before, the main demand we heard was for models to rank among the top three on the leaderboards and to reach 100 billion parameters. But this year, demand has become very practical. Customers no longer care whether the platform is the most advanced; they require stable operation, model security, data security, and so on.'

Customers' pursuit of stability and sustainability in business scenarios has also pushed AI cloud vendors to explore new MaaS business models. For cloud vendors, implementation results and commercial conversion are the only real tests of strength.

Bidding market data for the first half of 2025 reveals a surge in large model-related projects in China. Notably, Baidu Intelligent Cloud stands out, topping both the number of winning bids (48 projects) and the total winning bid amount (RMB 510 million). It maintains its leadership in key sectors such as finance, energy, government affairs, and manufacturing.

Specifically, Baidu Intelligent Cloud's clientele spans many industry segments: over 65% of central enterprises, 80% of systemically important banks, over 150 insurance, securities, and fund companies, the top 10 mobile phone manufacturers, 95% of mainstream automakers, and over 50% of game manufacturers. In the emerging field of embodied intelligence, Baidu has secured a first-mover advantage, with 20 customers, including the nation's top four.

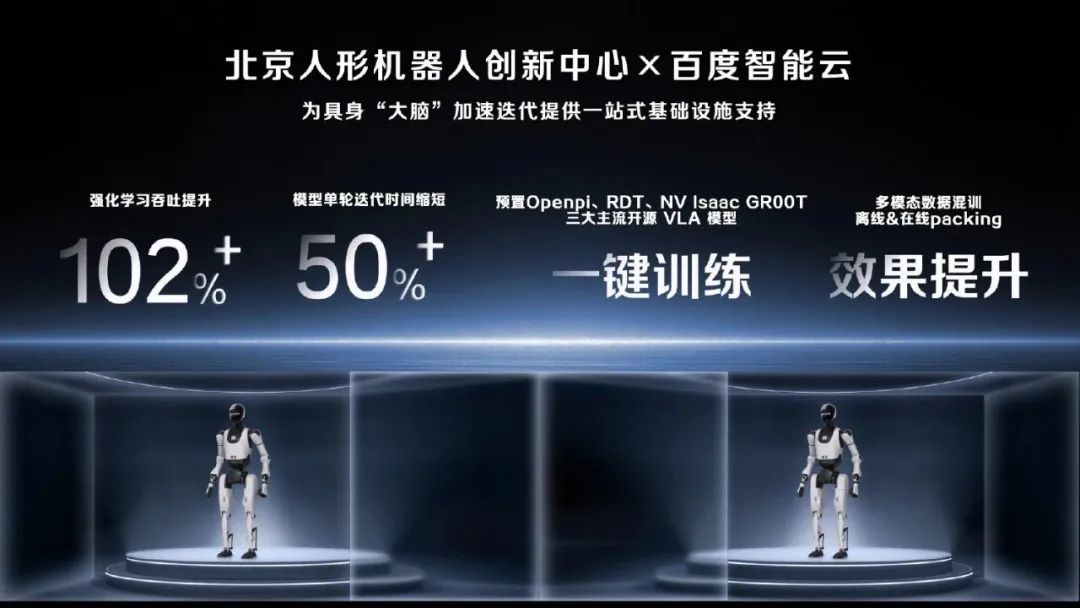

Humanoid robots, at the forefront of AI-physical world interaction, are experiencing exponential growth. However, the path to large-scale application of embodied intelligence is fraught with challenges, including the absence of a universal intelligent platform, limited multi-agent and multi-scenario adaptation capabilities, and a scarcity of high-quality cross-modal embodied intelligence data.

To tackle these issues, the Beijing Humanoid Robot Innovation Center has forged a deep partnership with Baidu. The Baige platform significantly enhances the development efficiency and iteration speed of embodied intelligence large models. It empowers research and development teams to swiftly verify algorithms, optimize strategies, and deploy applications. Additionally, it provides robust computing power support for parallel experiments and model optimization across multiple robot agents and multi-task scenarios.

Similarly, Glintsight chose Baidu Intelligent Cloud over several vendors. Initially relying on an open-source solution for vision-language multimodal (VLM) large model training, Glintsight faced significant challenges with computing power scheduling and utilization efficiency, characterized by uneven token arrangement and severe computing power fragmentation.

Drawing on Baidu's extensive experience in ultra-large-scale model training, the Baige platform offered Glintsight an optimized training framework and an intelligent computing power scheduling strategy. After optimization, the time required for VLM pre-training dropped sharply: tasks that previously took a week now finish in approximately two days, an overall training efficiency improvement of approximately three times.
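As a quick sanity check on the two figures quoted above, the speedup can be computed directly. This is only arithmetic on the article's rounded numbers; the function names are illustrative, and the inputs ("a week," "approximately two days") are themselves approximate.

```python
def speedup(before_days: float, after_days: float) -> float:
    """Return how many times faster the job runs after optimization."""
    return before_days / after_days


def time_saved(before_days: float, after_days: float) -> float:
    """Return the fraction of wall-clock time eliminated."""
    return 1 - after_days / before_days


# Figures quoted in the article; both are approximate.
BEFORE_DAYS = 7.0  # "a week"
AFTER_DAYS = 2.0   # "approximately two days"

ratio = speedup(BEFORE_DAYS, AFTER_DAYS)
saved = time_saved(BEFORE_DAYS, AFTER_DAYS)
print(f"speedup: {ratio:.1f}x, time saved: {saved:.0%}")
# prints "speedup: 3.5x, time saved: 71%"
```

A ratio of 3.5 is consistent with the reported "approximately three times," given that both durations are rounded.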

Glintsight shared that they had initially presented similar requirements to other cloud vendors but were ultimately impressed by the services provided by Baige.

"Baige offers closely integrated services. Any request made in the work communication group receives an immediate response. We also have a 24/7 service group that addresses every matter promptly, which is invaluable for swiftly advancing goals at different stages and achieving high efficiency."

This steady industry adoption is evident in the financial results.

In the first half of 2025, Baidu reported a total revenue of 65.2 billion yuan, with a net profit of 15 billion yuan attributable to its core business, marking a 40% year-on-year increase. Notably, AI new business revenue reached 19.4 billion yuan, up 36% year-on-year.

Specifically in AI cloud, Baidu Intelligent Cloud's revenue increased by 27% year-on-year to 6.5 billion yuan in the second quarter. For the first half of 2025, the revenue grew by 34% year-on-year, surpassing the low double-digit growth rate recorded in the first half of 2024.
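The year-on-year figure above also implies a prior-year base, which can be backed out with one division. The sketch below is a derivation from the article's rounded numbers, not a reported value, and the function and constant names are illustrative.

```python
def prior_period(current: float, yoy_growth: float) -> float:
    """Back out the prior-period value from the current value and YoY growth."""
    return current / (1 + yoy_growth)


# Figures quoted in the article (rounded).
Q2_2025_REVENUE = 6.5  # billion yuan
Q2_YOY_GROWTH = 0.27   # 27% year-on-year

implied_q2_2024 = prior_period(Q2_2025_REVENUE, Q2_YOY_GROWTH)
print(f"implied Q2 2024 AI cloud revenue: about {implied_q2_2024:.1f} billion yuan")
# prints "implied Q2 2024 AI cloud revenue: about 5.1 billion yuan"
```

Because both inputs are rounded, the implied base of roughly 5.1 billion yuan should be read as an estimate only.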

Conclusion:

As we enter 2025, the popularity of DeepSeek has intensified competition among cloud vendors for AI cloud mindshare.

AI is reshaping cloud computing and emerging as the primary growth engine. Baidu Intelligent Cloud, for instance, offers enterprises end-to-end AI cloud services, spanning from computing power to models and from development to implementation, through its Baige (AI infrastructure), Qianfan large model platform (model and application development), and industry solutions, driving the overall cloud business towards high-quality growth.

It is widely held in the industry that "AI Cloud" will be a crucial area in which cloud service providers differentiate themselves and build barriers.

Historically, cloud vendor competition focused on factors such as server count, pricing, and geographical coverage. However, future cloud computing competition will transcend these single dimensions, encompassing a multitude of factors including technological prowess, industry understanding, AI capabilities, ecosystem scale, business models, security compliance, and more.

As entry barriers continue to rise, the field will narrow down to a few key players. Those who can swiftly accumulate comprehensive strength following the current explosion of single-point capabilities are poised to dominate the next round of competition.

While traditional cloud vendors remain hesitant, AI cloud-native players have embarked on a new growth trajectory. It appears that the Game Changer for cloud computing is indeed upon us.