Interpreting Terafab: Computing Power Is Escaping Earth

04/10 2026

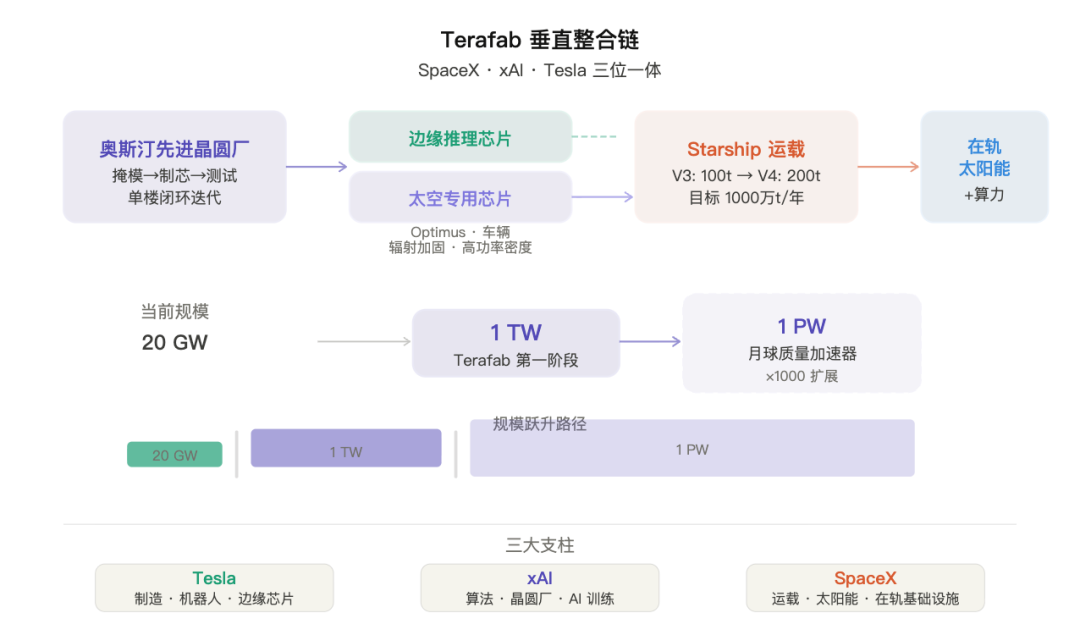

Recently, Musk delivered a keynote speech in Austin announcing what he called 'the most ambitious chip construction project in history': Terafab, a manufacturing system jointly advanced by SpaceX, xAI, and Tesla that targets an annual production capacity of 1 terawatt (TW) of AI computing power.

This article interprets Musk's Terafab project and offers three insights:

Why Earth-based computing power expansion has hit a physical ceiling;

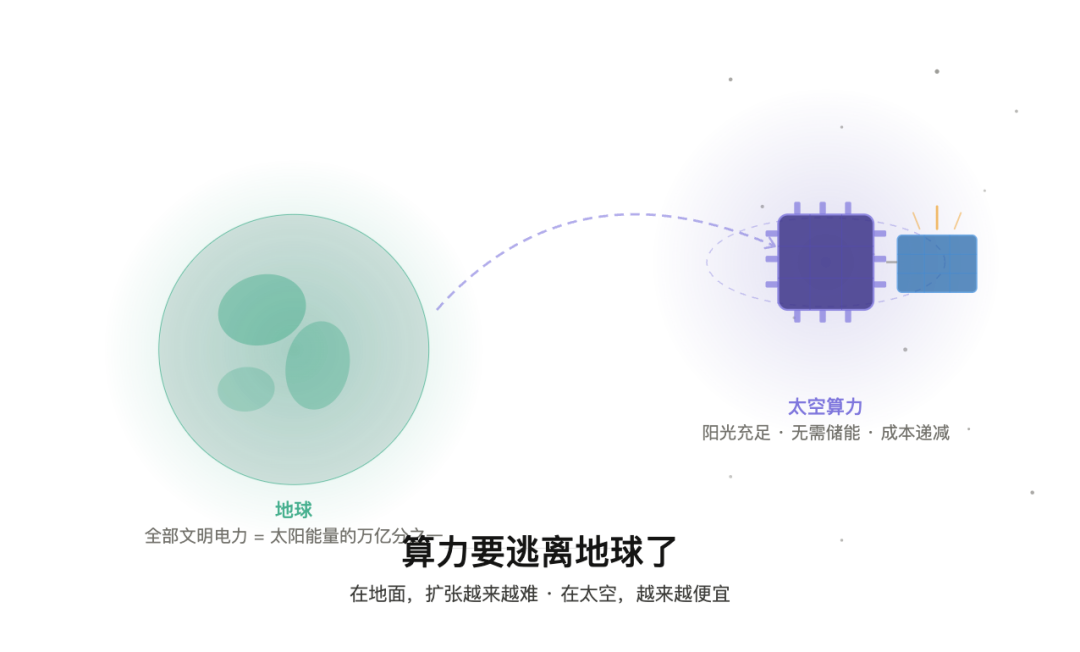

Why space is the inevitable destination for the next generation of AI infrastructure;

What this means for practitioners and decision-makers in the chip, AI, and aerospace industries.

1. Terafab is Not Just a Bigger Chip Factory

The media often reduce Terafab to 'Musk building his own chips.' That reading leads to flawed strategic judgments.

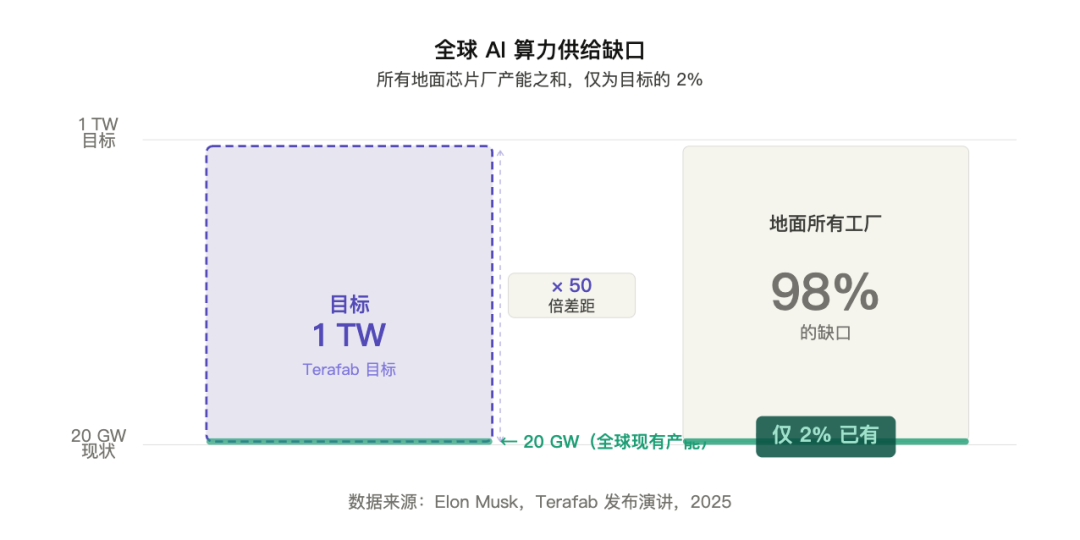

What Terafab really addresses is a more fundamental issue: global AI computing power supply faces a structural, order-of-magnitude gap that the existing manufacturing system cannot fill.

Musk offered a figure: current global AI chip production capacity is about 20 gigawatts (GW) per year. In other words, all the wafer fabs on Earth combined deliver only about 2% of Terafab's target. He said he had approached Samsung, TSMC, and Micron with the message 'Whatever you can produce, we'll buy it all,' but even at their maximum expansion speed these manufacturers fall far short of demand.
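The 2% figure is simple arithmetic on the two numbers quoted in the speech. The short Python sketch below makes the ratio, and its flip side (a 50x gap), explicit; the constant and function names are illustrative and do not come from the source.

```python
# Figures quoted in the speech, in gigawatts (GW) of AI compute per year.
CURRENT_GLOBAL_GW = 20.0
TERAFAB_TARGET_GW = 1_000.0  # 1 TW = 1,000 GW


def share_of_target(current_gw: float, target_gw: float) -> float:
    """Current capacity expressed as a fraction of the target."""
    return current_gw / target_gw


def gap_multiple(current_gw: float, target_gw: float) -> float:
    """How many times current capacity would have to grow to reach the target."""
    return target_gw / current_gw


print(f"share of target: {share_of_target(CURRENT_GLOBAL_GW, TERAFAB_TARGET_GW):.0%}")  # 2%
print(f"required growth: {gap_multiple(CURRENT_GLOBAL_GW, TERAFAB_TARGET_GW):.0f}x")   # 50x
```

Put the other way around, the entire existing industry would have to grow fiftyfold just to match one project's target.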

This is not a problem that commercial negotiation can solve; it is a physical constraint. Ground-based energy supply, land, water resources, and grid load are all turning into ceilings. Terafab's logical starting point, therefore, is not 'I want to build my own chips' but 'the only way out is to redefine the physical foundation of computing power.'

2. The Marginal Cost Curve of Ground Expansion is Bending Upwards

Over the past decade, the paradigm for AI infrastructure construction has been: find more land, build larger data centers, and purchase more power quotas. This paradigm is now failing.

The failure is not caused by weak demand but by rapidly rising marginal costs on the supply side. On power, the best renewable energy sites worldwide are already taken; on land and cooling, suitable data center locations are increasingly scarce; and local resident opposition (the NIMBY effect) keeps growing stronger.

For the industry, this means that competing for ground-based computing resources has become a zero-sum scramble ('involution') on a battlefield where marginal costs keep rising.

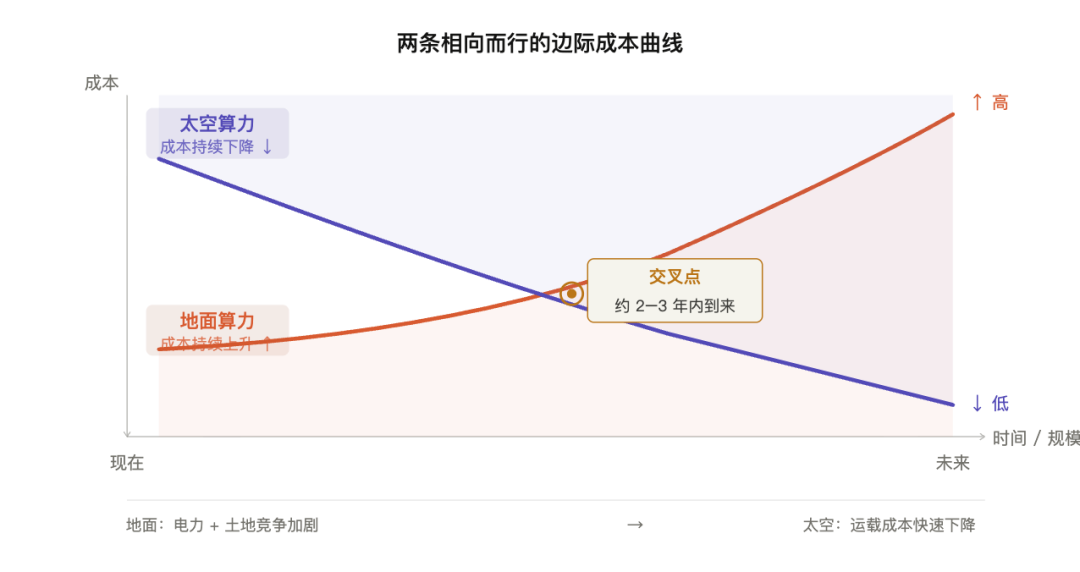

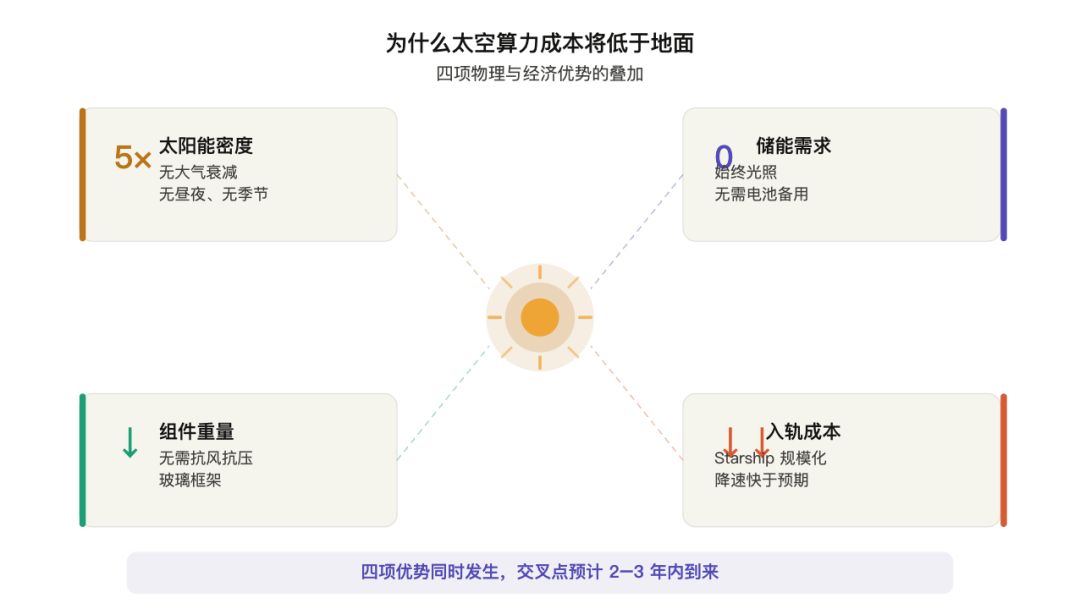

3. Surprise: In 2–3 Years, the Comprehensive Cost of Space Computing Power Will Be Lower Than Ground-Based

This is the most underestimated claim in the entire speech, and the most counterintuitive one for the industry to reflect on. Musk said he believes that within two to three years, the all-in cost of deploying AI chips in space will fall below that of deploying them on the ground. It sounds like science fiction, but it rests on a combination of several physical and economic facts:

Solar energy density is more than 5x higher. In orbit, solar panels can be kept pointed directly at the sun with no atmospheric loss, giving an effective power density more than 5 times that on the ground.
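One way to see where a 'more than 5x' figure can come from is to compare time-averaged irradiance rather than peak irradiance. The sketch below uses a solar constant of about 1361 W/m² above the atmosphere and a ground peak of 1000 W/m² (standard test conditions), then assumes a ground capacity factor of 0.20 and an in-orbit sunlit fraction of 0.99. Those two availability values are illustrative assumptions, not figures from the speech, and panel efficiency and degradation are ignored.

```python
SOLAR_CONSTANT_W_M2 = 1361.0  # mean irradiance above the atmosphere at 1 AU
GROUND_PEAK_W_M2 = 1000.0     # standard test-condition irradiance at the surface

# Assumed availability factors (illustrative, not from the speech):
GROUND_CAPACITY_FACTOR = 0.20  # night, weather and sun angle at a good terrestrial site
ORBIT_SUNLIT_FRACTION = 0.99   # an orbit that almost never enters Earth's shadow


def average_irradiance(peak_w_m2: float, availability: float) -> float:
    """Time-averaged power per square metre of panel, before conversion losses."""
    return peak_w_m2 * availability


space_avg = average_irradiance(SOLAR_CONSTANT_W_M2, ORBIT_SUNLIT_FRACTION)
ground_avg = average_irradiance(GROUND_PEAK_W_M2, GROUND_CAPACITY_FACTOR)
ratio = space_avg / ground_avg
print(f"space: {space_avg:.0f} W/m2, ground: {ground_avg:.0f} W/m2, ratio: {ratio:.1f}x")
```

Under these assumptions the ratio comes out at about 6.7x; raising the ground capacity factor to 0.25 brings it down to about 5.4x, so the result is most sensitive to how good the terrestrial comparison site is.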

Space solar panels need no heavy glass or metal frames. With no extreme weather to withstand, components can be made extremely light, which greatly increases power output per unit mass.

Little need for battery storage. With a suitable orbit, satellites can stay in nearly continuous sunlight, which greatly reduces energy storage requirements.
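How much battery a satellite needs depends on how long it spends in Earth's shadow. A common textbook approximation (circular orbit, cylindrical shadow) gives the eclipse fraction from the orbit altitude and the solar beta angle, i.e. the angle between the orbital plane and the direction to the Sun. Orbits whose plane stays nearly edge-on to the Sun, such as dawn-dusk sun-synchronous orbits, keep beta high and can avoid eclipse for much of the year. The 550 km altitude and the beta values below are illustrative assumptions; seasonal beta variation and the penumbra are ignored, and the function name is hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def eclipse_fraction(altitude_km: float, beta_deg: float) -> float:
    """Fraction of a circular orbit spent in Earth's shadow.

    Uses a cylindrical-shadow approximation. beta_deg is the solar beta angle:
    the angle between the orbital plane and the direction to the Sun.
    """
    r = EARTH_RADIUS_KM + altitude_km
    beta = math.radians(abs(beta_deg))
    # Above this critical beta the orbit never passes through the shadow cylinder.
    beta_crit = math.asin(EARTH_RADIUS_KM / r)
    if beta >= beta_crit:
        return 0.0
    x = math.sqrt(altitude_km**2 + 2 * EARTH_RADIUS_KM * altitude_km) / (r * math.cos(beta))
    # Clamp guards against rounding just above 1.0 near the critical angle.
    return math.acos(min(1.0, x)) / math.pi


for beta in (0, 45, 60, 70):
    print(f"beta={beta:>2} deg: {eclipse_fraction(550, beta):.1%} of each orbit in shadow")
```

At zero beta a 550 km orbit spends roughly 37% of every revolution in darkness, which a battery would have to cover; once beta exceeds about 67 degrees the shadow disappears entirely, which is the kind of 'reasonable orbital design' that lets storage shrink.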

Launch costs are falling rapidly along a clear roadmap. Starship V3 has achieved a 100-ton payload capacity to orbit; V4 targets 200 tons, and the goal is 10 million tons of annual orbital capacity.
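The launch figures can be tied back to the 1 TW target with one division: if the entire 10-million-ton annual capacity carried compute satellites, every launched kilogram (solar arrays, radiators, structure and chips together) would need to support about 100 W of compute capacity on average. The sketch assumes metric tonnes and, by default, an all-compute manifest; the `compute_share` parameter and both function names are hypothetical.

```python
TONNE_KG = 1_000.0


def required_specific_power(target_w: float, annual_tonnes: float,
                            compute_share: float = 1.0) -> float:
    """Average watts of compute capacity each launched kilogram must carry.

    compute_share is the (hypothetical) fraction of launch mass devoted to compute satellites.
    """
    return target_w / (annual_tonnes * TONNE_KG * compute_share)


def launches_per_year(annual_tonnes: float, payload_tonnes: float) -> float:
    """Number of flights needed to lift annual_tonnes at a given payload per flight."""
    return annual_tonnes / payload_tonnes


TARGET_W = 1e12       # 1 TW per year, the Terafab target
ANNUAL_TONNES = 10e6  # 10 million tons per year, the stated orbital-capacity goal

print(required_specific_power(TARGET_W, ANNUAL_TONNES))       # 100.0 W/kg
print(required_specific_power(TARGET_W, ANNUAL_TONNES, 0.5))  # 200.0 W/kg
print(launches_per_year(ANNUAL_TONNES, 200))                  # 50000.0 flights at 200 t each
```

This is why the lightweight-panel point matters: the lower the system's watts per kilogram, the more launch mass the same terawatt consumes.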

These four factors are at work simultaneously and all point in the same direction, so their effects compound rather than merely add up. Musk estimates that the crossover point will arrive in 2-3 years; even if a conservative estimate pushes it out to 5 years, the logic still holds.
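The crossover claim is a statement about two curves: a ground cost that keeps rising and a space cost that keeps falling. The toy model below assumes constant annual rates, with a ground cost index starting at 1.0 and growing 5% a year and a space cost index starting at 3.0 and falling 35% a year. Every number is a hypothetical placeholder, not an estimate from the speech; the point is only to show how the crossover year responds to the rates. The model solves space_cost × (1 − d)^t = ground_cost × (1 + g)^t for t in closed form.

```python
import math
from typing import Optional


def crossover_years(ground_cost: float, ground_growth: float,
                    space_cost: float, space_decline: float) -> Optional[float]:
    """Years until space_cost*(1-d)^t falls to ground_cost*(1+g)^t.

    Returns 0.0 if space is already cheaper, or None if the curves never cross.
    """
    if space_cost <= ground_cost:
        return 0.0
    # Log-difference of the annual rates: how fast the cost ratio closes each year.
    rate_gap = math.log1p(ground_growth) - math.log1p(-space_decline)
    if rate_gap <= 0:
        return None
    return math.log(space_cost / ground_cost) / rate_gap


print(f"{crossover_years(1.0, 0.05, 3.0, 0.35):.1f} years")  # about 2.3 with fast space cost decline
print(f"{crossover_years(1.0, 0.05, 3.0, 0.20):.1f} years")  # about 4.0 with slower decline
```

Because the gap closes geometrically, cutting the assumed space cost decline from 35% to 20% a year only pushes the crossover out by under two years, which mirrors the article's point that a more conservative estimate delays the crossover without overturning it.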

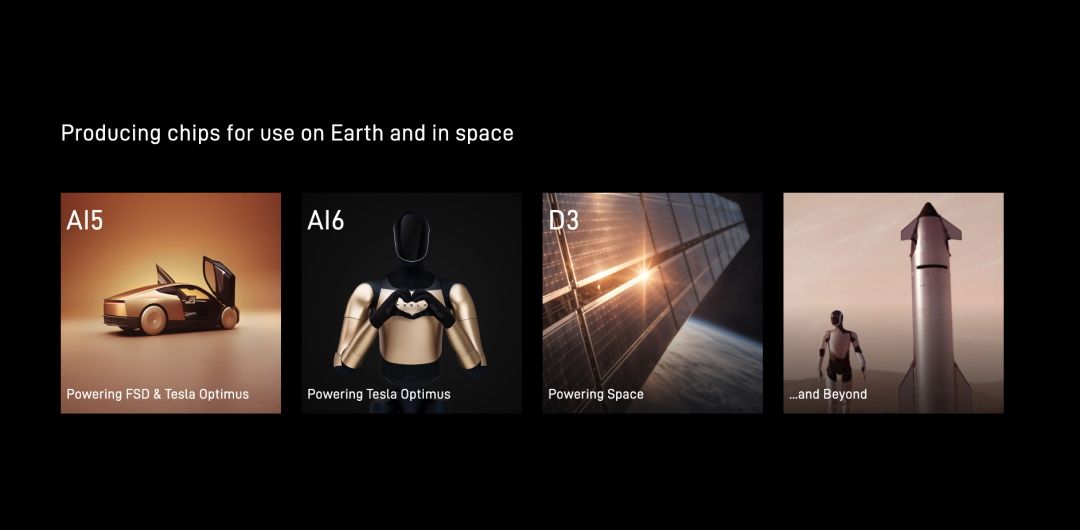

4. The Bigger Picture of Terafab: A Complete Vertical Integration Chain

Terafab can only be understood as part of a complete system:

Ground segment: the Austin Advanced Technology Wafer Fab. It integrates photomask manufacturing, chip fabrication, and packaging and testing in a single building, so the entire recursive loop from a design change to verification of the next-generation chip is closed under one roof, with an iteration speed roughly an order of magnitude faster than the industry norm.

Chip directions: two distinct product categories. The first is edge inference chips, primarily for Optimus humanoid robots and Tesla vehicles; Musk predicts that annual humanoid robot production will reach 1 to 10 billion units, 10 to 100 times the size of the automotive market. The second is high-power chips dedicated to space, optimized for radiation hardening and space thermal management.

Next step: a lunar mass driver (the 'Lunar Mass Accelerator'). Because the Moon has no atmosphere and only 1/6 of Earth's gravity, payloads can be accelerated along a track directly to escape velocity without rocket engines. According to Musk, this step would take computing power from the terawatt (TW) scale to the petawatt (PW) scale, another 1000x increase.
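The mass-driver idea comes down to basic kinematics: the Moon's escape velocity is v = sqrt(2GM/R), about 2.4 km/s, and accelerating a payload from rest to v at a constant acceleration a needs a straight track of length v²/2a. The sketch uses standard published lunar constants; the acceleration levels (in multiples of Earth's g) are hypothetical, and lunar rotation, terrain and the actual launch trajectory are ignored.

```python
import math

MOON_GM_M3_S2 = 4.9048695e12  # lunar standard gravitational parameter, m^3/s^2
MOON_RADIUS_M = 1.7374e6      # mean lunar radius, m
G0 = 9.80665                  # standard Earth gravity, m/s^2


def escape_velocity(gm: float, radius: float) -> float:
    """Escape velocity from the surface of a spherical body, in m/s."""
    return math.sqrt(2 * gm / radius)


def track_length(v_exit: float, accel_g: float) -> float:
    """Track length (m) to reach v_exit from rest at a constant accel_g multiples of G0."""
    return v_exit**2 / (2 * accel_g * G0)


v_esc = escape_velocity(MOON_GM_M3_S2, MOON_RADIUS_M)
print(f"lunar escape velocity: {v_esc:.0f} m/s")
for g in (30, 100, 1000):
    print(f"{g:>5} g -> track length {track_length(v_esc, g) / 1000:.2f} km")
```

Even at a hypothetical 100 g, which only rugged cargo could tolerate, the track is under 3 km long, a scale that is plausible on an airless body with no drag to fight at the muzzle.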

5. Decision Framework: One Takeaway for Each of Three Types of Readers

Decision-makers: Over the next three to five years, the main battlefield of the computing power war will shift from 'who can secure more ground-based power and land quotas' to 'who can build space computing deployment capability the fastest.' Strategies that bet on simply piling more capacity onto existing ground infrastructure now face diminishing marginal returns.

Practitioners: Space-dedicated chips face entirely different design constraints from ground-based ones: radiation hardening, thermal management (primarily radiative rather than convective), extreme power density, and lightweighting. These directions are becoming genuine large-scale demands. If you work in chip design or systems engineering, the hardware constraints of the space environment are worth studying now.
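Radiative thermal management is easy to quantify: with no air to carry heat away, every watt a chip dissipates must eventually leave by thermal radiation, governed by the Stefan-Boltzmann law P = εσA(T⁴ − T_sink⁴). The sketch sizes a flat radiator for a given heat load. The 1 MW load, the 300 K and 350 K radiator temperatures, the emissivity of 0.9, the 3 K sink and the two radiating faces are illustrative assumptions; absorbed sunlight, Earth infrared and view factors are ignored, and the function name is hypothetical.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def radiator_area_m2(heat_w: float, temp_k: float, emissivity: float = 0.9,
                     sink_temp_k: float = 3.0, sides: int = 2) -> float:
    """Panel area needed to reject heat_w by thermal radiation alone."""
    flux_per_face = emissivity * SIGMA * (temp_k**4 - sink_temp_k**4)
    if flux_per_face <= 0:
        raise ValueError("radiator must be hotter than its sink")
    return heat_w / (flux_per_face * sides)


for t in (300.0, 350.0):
    print(f"1 MW at {t:.0f} K: {radiator_area_m2(1e6, t):,.0f} m^2 of two-sided panel")
```

Because rejected heat scales with the fourth power of temperature, a radiator running at 350 K needs little more than half the area of one at 300 K, which suggests why tolerance for higher operating temperatures could be a design lever for space-oriented chips.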

Researchers: Musk explicitly mentioned 'trying some crazy things' in the new fab, meaning novel computing principles that push physical limits. With a platform that can iterate rapidly from mask to test within a single building, the engineering validation cycle for non-traditional computing approaches shrinks significantly. This is a genuine window of research opportunity.

At its core, the speech declares a reallocation of resources: the growth ceiling for computing power lies not in human engineering capability but in Earth's physical constraints. The way around these constraints is not more involution on the ground but moving infrastructure to where those constraints do not exist.

On the ground, expansion is becoming increasingly difficult and expensive; in space, expansion is becoming easier and cheaper. The crossover point of these two curves is arriving faster than most people expect.

References and Images

Musk's Terafab Speech

*Unauthorized reproduction or excerpting is strictly prohibited.*