A New Chinese Translation for Token, 'Symbol Unit': Exploring the Essence of Token from Seven Perspectives

04/02 2026

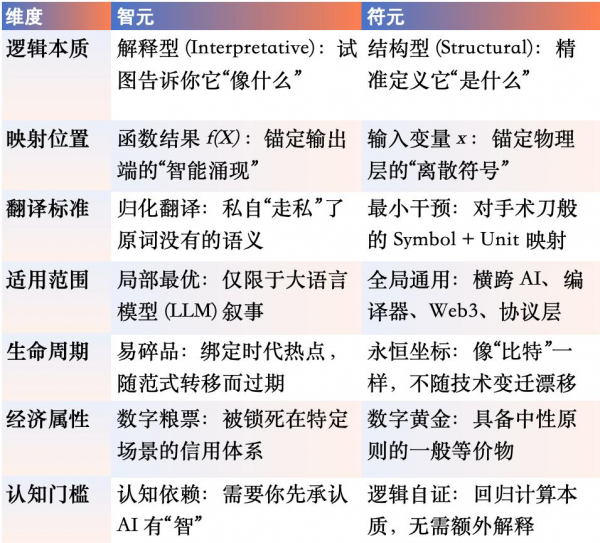

Recently, a heated debate over the translation of Token has emerged on the Chinese-language internet.

This is especially true since the introduction of the term 'Zhiyuan' (Wisdom Origin), which, endorsed by influential figures such as Wang Xiauan and a host of academic experts, quickly produced a kind of 'illusion of consensus.' Many felt: this is it, how sophisticated, how fitting for the AI era!

But I must throw cold water on this: 'Zhiyuan' is a beautiful mistake.

It is essentially an elaborately reasoned 'cognitive proposal' rather than a truly implementable, era-spanning 'standard definition.' While the industry is busy painting Token in the colors of 'intelligence,' we seem to have forgotten that Token was born in Shannon's probability space, is grounded in Turing's symbol manipulation, and is realized through probabilistic modeling in modern computing.

After a deep exploration across seven dimensions (information theory, translation studies, linguistics, computer science, computational complexity, cognitive science, and economics), I formally propose that the standard Chinese translation for Token should be 「Symbol Unit」.

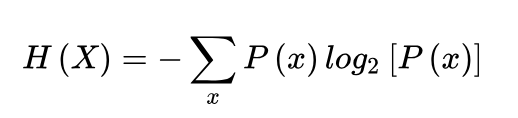

I. Information Theory Dimension: Shannon's Ghost and the Truth of Probability

To discuss the true name of Token, we must go back to 1948, to the origin of Claude Shannon's information theory.

1. Fundamental Logic: Is it Variable X or Functional Result f(X)?

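At the most fundamental level of information theory, the information entropy formula quantifies uncertainty, and therefore how much uncertainty observing a symbol eliminates:

H(X) = -Σ p(x) log₂ p(x), summed over every symbol x in X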

Here, we must uncover a truth long obscured by marketing rhetoric:

X is the symbol space (a random variable): it ranges over the set of all possible 'Symbol Units' in a large model.

x is a specific symbol (a realization of X): what we commonly call a Token, merely one discrete value within this space.

The Logic of Symbol Units: In large models, a Token is a discrete symbolic unit that is encoded and participates in probabilistic modeling. The name addresses the symbol itself, i.e., the value x.

Symbol + Unit → 「Symbol Unit」: the name is a direct physical mapping of the underlying structure of information theory.

The Fallacy of Zhiyuan: 'Intelligence' and 'intellect' are high-level emergent properties that arise when large models process information. Calling Token 'Zhiyuan' confuses the independent variable with the dependent variable at the level of definition.
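To make the X-versus-x distinction concrete, here is a minimal Python sketch that computes H(X) = -Σ p(x) log₂ p(x) from an observed symbol sequence. It assumes that the empirical frequencies of the symbols stand in for p(x), and it splits on whitespace as a hypothetical stand-in for a real tokenizer. Note that the function only ever sees the discrete values x and how often they occur; nothing about their meaning enters the calculation.

```python
import math
from collections import Counter

def shannon_entropy(symbols):
    """Return H(X) in bits for the empirical distribution of `symbols`.

    Each element of `symbols` is one realization x of the random variable X;
    the set of distinct elements plays the role of the symbol space.
    """
    counts = Counter(symbols)
    total = sum(counts.values())
    # Sum -p(x) * log2 p(x) over every distinct symbol x.
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

# Hypothetical toy input: a whitespace split stands in for a real tokenizer.
tokens = "the cat sat on the mat".split()
print(shannon_entropy(tokens))
```

A uniform distribution over four distinct symbols yields exactly 2 bits; a sequence that repeats a single symbol yields 0, since observing it eliminates no uncertainty.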

2. Dimensional Reduction: Information Processing is Unrelated to 'Meaning'

Nearly eighty years ago, Shannon gave the most ruthless definition: the essence of information is the elimination of uncertainty, and the processing of information is independent of 'meaning.'

In the engineering practice of large models, the logic is extremely cold:

Input: text is segmented into discrete sequences of symbols.

Processing: matrix operations compute probability distributions over symbols.

Output: a probabilistic prediction for the next symbol is generated.
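The three steps above can be sketched in a few lines of Python. This is a toy under explicit assumptions: single characters stand in for learned subword tokens, and a bigram count table (transition counts normalized row by row into probabilities) stands in for the neural network a real large model would use. It illustrates only the shape of the pipeline: discrete symbols in, a probability distribution over the next symbol out.

```python
def build_vocab(text):
    # Input: segment text into discrete symbols (here, single characters)
    # and assign each distinct symbol an integer id.
    symbols = sorted(set(text))
    return {s: i for i, s in enumerate(symbols)}

def bigram_matrix(ids, vocab_size):
    # Processing: count transitions between consecutive symbol ids in a
    # vocab_size x vocab_size matrix, then normalize each row into a
    # probability distribution over the next symbol.
    counts = [[0] * vocab_size for _ in range(vocab_size)]
    for a, b in zip(ids, ids[1:]):
        counts[a][b] += 1
    probs = []
    for row in counts:
        total = sum(row)
        probs.append([c / total if total else 0.0 for c in row])
    return probs

def next_symbol_distribution(prev_symbol, vocab, probs):
    # Output: the probability of each possible next symbol (zeros omitted).
    inv = {i: s for s, i in vocab.items()}
    row = probs[vocab[prev_symbol]]
    return {inv[j]: p for j, p in enumerate(row) if p > 0}

text = "abracadabra"
vocab = build_vocab(text)
ids = [vocab[ch] for ch in text]
probs = bigram_matrix(ids, len(vocab))
print(next_symbol_distribution("a", vocab, probs))
```

In "abracadabra", 'a' is followed twice by 'b' and once each by 'c' and 'd', so the predicted distribution after 'a' is 0.5, 0.25, and 0.25.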

The so-called 'intelligence' is a statistical miracle that emerges when billions of symbols are processed at ultra-large parameter scale.

The Truth: 「Symbol Unit」 is the fundamental variable x at the input, while 「Zhiyuan」 is merely a cognitive illusion humans have about the functional result f(X).

We are in an era of cognitive dislocation: Shannon stripped 'meaning' from information eighty years ago, returning it to mathematics; yet today, we try to force 'intelligence' back into symbols, fabricating a false profundity.

Conclusion: Token is a discrete value in the symbol space, not a fundamental unit of intelligence.

II. Translation Studies Dimension: Yan Fu's 'Faithfulness, Expressiveness, and Elegance' and Semantic 'Minimal Intervention'

In translation studies, the introduction of any neologism faces an audit. We must establish the orthodox status of 「Symbol Unit」 as the ultimate translation for Token through the dual verification of the 'Faithfulness, Expressiveness, and Elegance' classic standard and the 'Back-Translation Consistency Test.'

1. Ultimate Showdown of 'Faithfulness, Expressiveness, and Elegance'

Faithfulness (Accuracy): 「Symbol Unit」 achieves semantic minimal intervention. It translates with surgical precision, focusing solely on the physical attributes of the original term with no hidden agenda. It corresponds at the physical level to Symbol + Unit, mapping Token's physical attributes completely, with nothing added and nothing removed. This extreme fidelity to the original meaning is the cornerstone of a term's longevity.

Expressiveness (Clarity): 「Symbol Unit」 has strong contextual resilience. Whether in NLP algorithms, code compilers, or Web3 protocols, 「Symbol Unit」 integrates seamlessly: Symbol Unit consumption, Symbol Unit segmentation, Symbol Unit sequence. Its fluency across technological contexts demonstrates the universality of its underlying logic. A good translation must withstand repeated 'cross-language degradation tests.'

Elegance (Propriety): 'Elegance' does not refer to ornate language but to whether the translation adheres to Chinese technical word formation patterns and systemic aesthetics.

① Systemic Coherence: In the Chinese technical lexicon, the morpheme rendered here as 'Unit' denotes the most basic, indivisible component (it appears in the Chinese words for element, unit, and metadata). 「Symbol Unit」 fits naturally into this system.

② Aesthetic Benchmarking: It continues a cold, objective technological intuition. It is as concise as 'Bit' and as solid as 'Atom,' with a timeless industrial aesthetic.

2. Dimensional Reduction: Back-Translation Consistency Test

Back-Translation Verification A, 「Symbol Unit」: back-translates as 'Symbolic Unit' / 'Symbol Unit.' At the foundational level of computer science, Token's standard definition is a sequence of characters treated as a single discrete symbol.

We can see: 「Symbol Unit」 perfectly aligns with the engineering truth upon back-translation, achieving zero-deviation coupling between Chinese and English semantics.

Back-Translation Verification B, 「Zhiyuan」: back-translates as 'Intelligence Unit' / 'Intellectual Element.' In the international AI research community, such terms evoke 'intelligent hardware modules' or 'units for measuring intelligence.' If you use it to refer to Token in a paper, peers will think you are discussing 'brain partitioning,' not slicing data.

We can see: Explanatory translations often undergo severe semantic drift during back-translation, preventing alignment with global technical standards.

Conclusion: The optimal translation must achieve semantic minimal intervention and pass the back-translation consistency test.

III. Linguistics Dimension: 'Zero Presupposition' in Word Formation Logic and Evolution Beyond Temporal Constraints

I believe we must dissect, from the perspectives of linguistic word formation roots and evolutionary patterns, why 「Symbol Unit」 is the sole ultimate evolutionary form of Token in the Chinese context.

1. Morphological Verification: From 'Symbol Etymology' to 'Formal Decoupling'

In computer science, the word Token has consistently carried the sense of 'marker, symbol, credential.' At its core, it has always aligned with symbolic AI.

The Trap of 「Zhiyuan」: Its focus is on 'intelligence' (wisdom). This is essentially a strongly opinionated modifier: it presupposes that Token possesses 'intelligent' attributes from the outset. This word formation is aggressive, forcibly defining what the material is for.

The Restraint of 「Symbol Unit」: Its focus is on 'Symbol' (the character also means 'talisman'). This is a neutral, objective physical description: it states only what Token is (a symbol), without presupposing its use.

Excellent technological word formation should be 'zero presupposition.' Just as 'Bit' is not called 'Computation Unit' and 'Byte' is not called 'Storage Unit,' Token should not be saddled with 'intelligence.' 「Symbol Unit」 achieves a perfect decoupling of form and content, respecting the true nature of things.

2. Linguistic Evolutionary Patterns: Why 'Explanatory Vocabulary' is Destined to Expire

Observing the truly enduring terms in tech history (Byte, Bandwidth, Data), you'll notice a common feature: They only describe structure and never bind to temporal narratives.

The Cost of Strong Temporality: 「Zhiyuan」 is bound to the 'Age of Intelligence,' and 「Model Unit」 to the 'Age of Large Models.' They emerge at the peak of public enthusiasm but are destined to become obsolete as paradigms shift: if large models fall out of favor or the definition of 'intelligence' drifts, these terms will instantly feel dated and absurd.

The Tension of Timelessness: 「Symbol Unit」 is a 'structural description.' No matter how AI evolves, from text to multimodality and from large models to embodied intelligence, what circulates at the base will always be discrete 'symbolic units.'

The Truth: 「Word Unit」 was designed for the 'Age of Language' but was forcibly dragged into the 'Age of Intelligence'; 「Zhiyuan」 is an expensive, time-bound slogan. Only 「Symbol Unit」 never tries to explain the future, so it will never become obsolete.

Conclusion: Structural naming is superior to explanatory naming; only timeless expressions endure.

IV. Computer Science Dimension: Cross-Domain 'Global Consistency' and Compilation Essence

We must uncover a fact deliberately ignored by marketers: Token predates large models by a significant margin. It is a core concept in foundational computer protocols, compilers, and formal languages.

If a term cannot stand independently outside the AI context, it cannot become a great foundational term.

1. Cross-Domain Consistency: Symbol Unit as the 'Universal Adapter' of the Computer World

A truly great technical term must maintain logical self-consistency and purity in any context. 「Symbol Unit」 is the ultimate answer for Token because it possesses the foundational attribute of 'universal adaptability.'

Token has never been an AI-exclusive patch; it is a ubiquitous foundational unit in computer science. 「Symbol Unit」 perfectly aligns with this cross-domain unity:

Lexical Analysis (Lexical Token): In compiler theory, it is the smallest meaningful unit produced when source code is split into symbols. Calling it 「Lexical Symbol Unit」 precisely restores its essence as the minimal component of a programming language.

Network Protocols (Access Token): In system security, it is a digital symbol representing permissions. Calling it 「Access Symbol Unit」 clearly defines its identity as a digital contractual credential.

Distributed Systems (Session Token): In state maintenance, it is a discrete unit identifying sessions. Calling it 「Session Symbol Unit」 aligns with its definition as a logical tracking unit.
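For the compiler case, the following is a minimal regex-based lexer in Python. The token categories (NUMBER, IDENT, OP) describe a hypothetical tiny arithmetic language, not any real compiler's grammar. The last lines use the standard library's `secrets.token_urlsafe` to mint a session-style token, an opaque random string, to show that the same word names a discrete identifier in the security setting as well.

```python
import re
import secrets

# Hypothetical token categories for a tiny arithmetic language.
# Order matters: earlier alternatives win when several could match.
TOKEN_SPEC = [
    ("NUMBER", r"\d+(?:\.\d+)?"),
    ("IDENT", r"[A-Za-z_]\w*"),
    ("OP", r"[+\-*/=()]"),
    ("SKIP", r"\s+"),
    ("ERROR", r"."),
]
MASTER = re.compile("|".join(f"(?P<{name}>{pat})" for name, pat in TOKEN_SPEC))

def tokenize(source):
    """Yield (kind, lexeme) pairs: the lexical tokens of `source`."""
    for m in MASTER.finditer(source):
        kind = m.lastgroup  # name of the alternative that matched
        if kind == "SKIP":
            continue  # whitespace separates tokens but is not one
        if kind == "ERROR":
            raise SyntaxError(f"unexpected character {m.group()!r} at {m.start()}")
        yield kind, m.group()

print(list(tokenize("rate = base * 1.5")))

# Session-style token: a random, URL-safe string with no internal structure,
# used only as a discrete identifier for a session.
session_token = secrets.token_urlsafe(32)
```

The lexer turns a character stream into a sequence of discrete (kind, lexeme) units, which is exactly the sense of Token the compiler tradition uses.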

Conclusion: 「Symbol Unit」 demonstrates extremely strong 'global compatibility.' It does not depend on any specific application scenario; it is anchored directly in the physical fact that computers process discrete data.

2. The Essence of Compilation Theory: Returning to the Physical Truth of 'Symbolic Units'

In the mother tongue of computer science, Token's core definition is extremely pure: It is the smallest identifiable discrete symbolic unit (Symbolic Unit).

Symbol: Corresponds to the physical form of information.

Unit: Corresponds to the discrete scale of computation.

The word formation of 「Symbol Unit」 is the most faithful Chinese mapping of Symbol + Unit. It introduces no additional semantic interference and presupposes no complex application background. It does only one thing: name the most fundamental act a computer performs, turning the world into symbols. This restraint and rigor give 「Symbol Unit」 lasting vitality.

Conclusion: Token is a cross-system consistent symbolic unit, not an AI-exclusive concept.

V. Computational Complexity Dimension: The 'Tape Truth' of Turing Machines and the Ultimate Unit of Computation

1. Returning to Computational Origins: The Physical Facts on Turing Machine Tape

In the world of computational complexity, any algorithm, from simple sorting to inference in a trillion-parameter large model, ultimately reduces to symbolic operations by a read-write head on a Turing machine tape.

The Physical Location of 「Symbol Unit」: In this most foundational mathematical model, each discrete cell on the tape awaiting processing holds a symbol.

The Purity of Definition: Whether this symbol ultimately represents a byte, a Chinese character, a pixel segment, or a term in logical reasoning, at the moment of computation, it is equally non-intelligent and purely physical. 「Symbol Unit」 precisely captures this physical fact.
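A Turing machine is small enough to simulate directly. The Python sketch below assumes a single tape, a head that starts on the rightmost cell, and a hypothetical rule table that increments a binary number. At every step the machine does nothing but read one symbol from the finite alphabet {0, 1, _}, write one symbol, and move; whatever the tape 'means' to us plays no part.

```python
def run_turing_machine(tape, rules, state, accept, blank="_"):
    """Simulate a single-tape Turing machine until it enters `accept`.

    rules maps (state, symbol read) -> (next state, symbol to write, move),
    where move is -1 for left and +1 for right. The head starts on the
    rightmost cell of the input.
    """
    cells = dict(enumerate(tape))  # sparse tape: position -> symbol
    head = len(tape) - 1
    while state != accept:
        symbol = cells.get(head, blank)  # read
        state, write, move = rules[(state, symbol)]
        cells[head] = write  # write
        head += move  # move
    lo, hi = min(cells), max(cells)
    return "".join(cells.get(i, blank) for i in range(lo, hi + 1)).strip(blank)

# Hypothetical rule table: add 1 to a binary number by propagating a carry leftward.
INCREMENT = {
    ("carry", "1"): ("carry", "0", -1),  # 1 + carry = 0, keep carrying
    ("carry", "0"): ("done", "1", -1),   # 0 + carry = 1, stop
    ("carry", "_"): ("done", "1", -1),   # ran off the left edge: new leading 1
}

print(run_turing_machine("1011", INCREMENT, "carry", "done"))
```

Running it on "1011" rewrites the tape to "1100"; on "111" the carry runs off the left edge and the machine writes a new leading symbol, giving "1000".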

2. The Essence of Computation: The Art of Symbolic Transformation

The essence of computation is the ordered transformation of a finite symbol set.

Computability Logic: All emergent intelligence is fundamentally the permutation and combination of symbols under specific time and space complexity constraints.

The Dominance of 「Symbol Unit」: It is the basic symbolic unit on the tape that leads toward Artificial General Intelligence (AGI). It cares nothing for the emotions or meanings behind symbols, only for their discreteness and operability as carriers of computation. This cold perspective is the deepest respect for the essence of computation.

3. Highest Abstraction: The Ultimate Expression in the P vs. NP Context

For geeks studying computational complexity, 「Symbol Unit」 is the ultimate expression of computability.

Logical Height: P and NP are themselves defined by counting the steps a Turing machine takes to transform symbols, so if P = NP is ever proven, the proof will rest on a unified logic of symbolic transformation at the complexity level.

「Symbol Unit」 is the 'atom' of the digital world. Like 'Bit,' it is cold, physical, and transparent. It does not take on the task of explaining eras because it is itself the foundational unit from which all algorithmic eras are built. Any attempt to add adornments to the foundational definition is a usurpation of computational truth.

Conclusion: The essence of computation is symbolic transformation, and Token is precisely the basic unit of this process.

VI. Cognitive Science Dimension: The Cognitive Leap from 'Explanation Dependence' to 'Structural Self-Evidence'

We must analyze, from the cognitive mechanisms by which humans understand new concepts, why 「Symbol Unit」 offers stronger cognitive stability and greater resistance to obsolescence.

1. The Cognitive Superiority of Structural Language

When processing new concepts, the human brain typically follows two paths: interpretative and structural.

「Symbol Unit」 belongs to typical structural language: It provides a foundational structure (Symbol + Unit). It does not rush to tell you what this thing does but first delivers a stable physical model to your brain.

Cognitive Advantage: This 'structure first' naming approach connects to what cognitive science calls symbol grounding: it establishes a clear, derivable logical origin in the user's mind rather than vague imagery.

2. Stability of the 'Cognitive Anchor': Structure Does Not Shift with Time

Cognitive science tells us: explanations become outdated, but structures do not.

Anti-Interference: Any vocabulary named through 'explanation' will collapse as the context of the explanation fades. If a translated name relies excessively on 'current intelligent manifestations,' then when the form of intelligence undergoes drastic changes, public cognition will descend into chaos.

Stability of the Symbol Unit: As a structural description, 「Symbol Unit」 anchors in the human mind the idea of a 'discrete symbolic carrier.' However AI evolves, this physical structure will always exist. Because it does not depend on any era's interpretation, it will never be left behind by the times.

3. Self-Emergence: Returning the Initiative of Understanding to the Brain

The charm of 「Symbol Unit」 lies in its 'semantic blank space.'

Logical Self-Evidence: It does not declare 'this is intelligent'; by presenting its essence as a symbolic unit, it lets users discover its immense potential for themselves as they come to understand it.

Inference: This bottom-up process of understanding is deeper and more lasting than any imposed explanation. 「Symbol Unit」 is not a passively accepted label but a cognitive cornerstone that inspires the brain to build the logical edifice of AI on its own.

Conclusion: Structural naming constructs stable cognitive anchors, while explanatory naming relies on contextual interpretation.

VII. Economic Dimension: The Neutral Principle of Universal Equivalents and the Underlying Credit of 'Digital Gold'

Starting from the basic laws of economics, we must examine Token's essential nature as a universal equivalent in the digital economy.

1. The 'Neutral Principle' of Units of Measurement: Rejecting Semantic Inflation

In economics, the core credit of any unit that can serve as a measure of value comes from its impartiality.

Credit of the Symbol Unit: As a purely structural unit, 「Symbol Unit」 is responsible only for measurement, not for qualitative judgment, just as the 'meter' measures only length, not beauty or ugliness, and the 'gram' measures only weight, not value.

Risk Mitigation: If a unit of measurement forcibly binds a certain 'value presumption' (e.g., intelligence), then when it is used to handle low-value, non-intelligent tasks (e.g., data cleaning, format conversion, simple protocol handshakes), semantic inflation will inevitably occur.

Logical Point: Units of measurement must be impersonal; otherwise, they will lead to a collapse of credit in the digital economy. 「Symbol Unit」 keeps measurement pure, so that the 'weights and measures' of the AI world never depreciate as task attributes fluctuate.
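The neutrality argument can be made concrete with the arithmetic of token-based metering. In this Python sketch the per-million-token prices are hypothetical placeholders, not any provider's real rates, and the `Usage` record is an illustrative structure. The charge depends only on how many input and output symbols were processed; the task label is carried along but never read by the pricing formula.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Usage:
    task: str  # free-text label; deliberately ignored by the meter
    input_tokens: int
    output_tokens: int

# Hypothetical per-million-token prices, in arbitrary currency units.
PRICE_IN_PER_M = 0.50
PRICE_OUT_PER_M = 1.50

def cost(usage: Usage) -> float:
    """Charge by symbol count only; the task label never enters the formula."""
    return (usage.input_tokens * PRICE_IN_PER_M
            + usage.output_tokens * PRICE_OUT_PER_M) / 1_000_000

poem = Usage("write a poem", input_tokens=1_000, output_tokens=2_000)
cleanup = Usage("convert CSV to JSON", input_tokens=1_000, output_tokens=2_000)
print(cost(poem), cost(cleanup))
```

With these placeholder prices both jobs cost (1,000 × 0.50 + 2,000 × 1.50) / 1,000,000 = 0.0035 units: a poem and a format conversion with the same symbol counts are charged identically.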

2. The 'Gold' of the AI World: Bearing Value, but Not Defining It

In the history of currency evolution, gold became the ultimate universal equivalent because of its extremely stable (neutral) chemical properties. It never declares what it is for, yet it can bear all values.

Universality of the Symbol Unit: 「Symbol Unit」 is the 'digital gold' of the AI era. It holds no value stance of its own, yet through discrete combinations of symbols it can precisely map every kind of value, from a piece of text to an entire virtual world.

Circulation Power: Because 「Symbol Unit」 defines only structure (Symbol + Unit), it can circulate seamlessly across AI compute markets, Web3 rights-confirmation protocols, and Agent collaboration systems. It incurs no additional explanation cost; it is itself a consensus on the underlying logic.

3. The Game Between 'Digital Food Stamps' and 'Universal Currency'

Localized Lock-in: Any naming with explanatory connotations (e.g., Intelligence Unit, Model Unit) is essentially a form of 'digital food stamps.' Their utility is forcibly limited to the narrow application area of 'intelligence' or 'models.'

Globality of the Symbol Unit: 「Symbol Unit」 anchors the cross-temporal value of Token. It does not care whether you use it to generate poetry or drive industrial robots; it is only responsible for measuring the discrete symbols whose combined energy propels the advancement of digital civilization.

Conclusion: Units of measurement must remain neutral. Token can only be defined as a structural unit, not a unit of value judgment.

Standard Definition: Token = a discrete symbolic unit encoded for probabilistic modeling. Therefore, its optimal Chinese translation should directly map its structural essence: Symbol + Unit = 「Symbol Unit」.

What we seek is not a name that fits the current narrative but an eternal coordinate that can be engraved on the Turing machine's paper tape. Token does not belong to 'intelligence'; it belongs to a more fundamental world: symbols. The human world is composed of atoms, while the AI world is composed of 「Symbol Units」. This is not a simple naming exercise but a return to the essence of computation.