How Does Temporal Information Help Autonomous Driving Better Understand Traffic?

04/07 2026

In autonomous driving technology, the term "temporal information" frequently arises. It refers to the system's ability to not only focus on the current instantaneous state but also integrate historical information and predict future trends when processing data.

This ability is akin to human memory and anticipation. When we see a ball rolling toward the center of the road, we do not perceive it as a stationary dot; instead, based on its trajectory over the past few seconds, we instinctively anticipate that a child might run out next.

In machine logic, temporal information translates this perception of time into mathematical vectors that algorithms can process, ensuring strict logical consistency across perception, prediction, and planning along the timeline.

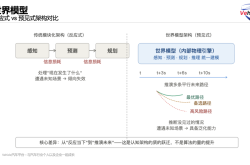

Evolution of Perception: From Static Slices to Dynamic Flows

Early autonomous driving algorithms primarily relied on single-frame images for object detection. While this approach performed adequately with static objects, it had inherent limitations in dynamic environments.

If the system only examined the current "snapshot," it struggled to accurately distinguish between a parked vehicle and one waiting at a red light, as their visual features in a single frame might appear identical.

By incorporating temporal information, the perception module shifts from "viewing photographs" to "watching videos." The system maintains a historical feature queue, recording the positional, velocity, and attitude changes of surrounding objects over time. By comparing displacements between adjacent frames, the system calculates real-time velocity and acceleration.
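As a rough illustration of the history queue described above, the following minimal sketch keeps recent observations of one object and derives velocity and acceleration by finite differences. The names `Observation` and `TrackHistory` are hypothetical, only one position axis is tracked, and timestamps are assumed to be strictly increasing.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Observation:
    t: float  # timestamp in seconds
    x: float  # position along one axis in meters


class TrackHistory:
    """Fixed-length queue of past observations for one tracked object."""

    def __init__(self, maxlen: int = 10):
        # Old observations fall off the left end once maxlen is reached.
        self.history = deque(maxlen=maxlen)

    def add(self, obs: Observation) -> None:
        self.history.append(obs)

    def velocity(self):
        """Backward difference over the two most recent observations."""
        if len(self.history) < 2:
            return None
        a, b = self.history[-2], self.history[-1]
        return (b.x - a.x) / (b.t - a.t)

    def acceleration(self):
        """Difference of the two most recent velocity estimates."""
        if len(self.history) < 3:
            return None
        a, b, c = self.history[-3], self.history[-2], self.history[-1]
        v1 = (b.x - a.x) / (b.t - a.t)
        v2 = (c.x - b.x) / (c.t - b.t)
        # Each velocity belongs to the midpoint of its interval, so the
        # two estimates are separated by half of the total time span.
        return (v2 - v1) / ((c.t - a.t) / 2.0)
```

Raw finite differences amplify measurement noise, so a production tracker would more likely feed these observations into a filter; the sketch only shows why at least two or three frames are needed before motion can be estimated at all.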

This continuous observation over time effectively filters out transient sensor noise, preventing objects from flickering or disappearing on the screen due to algorithmic fluctuations.
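One simple way to picture this filtering is hysteresis on per-frame detections: an object is only shown after several consecutive hits and only dropped after several consecutive misses. The sketch below is an assumption about how such logic could look, not a description of any particular system; `TrackState` and its thresholds are hypothetical.

```python
class TrackState:
    """Confirm a track after several hits, drop it after several misses."""

    def __init__(self, confirm_hits: int = 3, drop_misses: int = 5):
        self.confirm_hits = confirm_hits
        self.drop_misses = drop_misses
        self.hits = 0      # consecutive frames with a detection
        self.misses = 0    # consecutive frames without a detection
        self.confirmed = False

    def update(self, detected: bool) -> bool:
        """Feed one frame's detection result; return whether the track is shown."""
        if detected:
            self.hits += 1
            self.misses = 0
            if self.hits >= self.confirm_hits:
                self.confirmed = True
        else:
            self.misses += 1
            self.hits = 0
            if self.misses >= self.drop_misses:
                self.confirmed = False
        return self.confirmed
```

With these thresholds, a single spurious detection never appears on screen, and a confirmed object survives up to four consecutive missed frames without flickering.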

In complex urban environments, occlusion poses one of the greatest challenges for perception systems. A bicycle crossing the road might be blocked by a large bus for several seconds. Under pure single-frame perception, the system would assume the bicycle has vanished once occluded, potentially leading to delayed reactions after the bus moves away.

In contrast, systems with temporal modeling capabilities, such as those employing Bird's Eye View (BEV) architecture, store historically observed features in a "memory" space. Even if the object becomes invisible at the current moment, the model can infer its position using past features, maintaining its presence in the internal view.
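BEV models keep this memory as learned feature maps, which is hard to show in a few lines. As a much simpler stand-in, the sketch below remembers an object's last observed position and velocity and extrapolates them at constant velocity while the object is hidden. `RememberedObject`, `inferred_position`, and the 3-second coasting limit are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class RememberedObject:
    last_seen_t: float              # time of the last real observation, seconds
    position: Tuple[float, float]   # (x, y) at last_seen_t, meters
    velocity: Tuple[float, float]   # (vx, vy) at last_seen_t, m/s


def inferred_position(
    obj: RememberedObject, now: float, max_coast_s: float = 3.0
) -> Optional[Tuple[float, float]]:
    """Extrapolate an occluded object at constant velocity.

    Returns None once the object has been unseen for longer than
    max_coast_s, because the guess is no longer trustworthy.
    """
    dt = now - obj.last_seen_t
    if dt < 0 or dt > max_coast_s:
        return None
    return (
        obj.position[0] + obj.velocity[0] * dt,
        obj.position[1] + obj.velocity[1] * dt,
    )
```

For the bicycle example, a cyclist last seen 5 m to the side and moving toward the lane at 2 m/s would be placed 2 m away after 1.5 seconds behind the bus, so the planner can still leave room for it.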

Through this deep fusion of spatial and temporal features, the system not only "sees" the present but also "remembers" the past, enabling environmental completion at the perception level.

Temporal Information as the Logical Bridge Between Perception and Planning

Perception addresses environmental awareness, while temporal information provides essential input for the subsequent trajectory prediction, decision-making, and planning. Trajectory prediction is fundamentally a process of inferring the future from the past. By analyzing an obstacle's movement patterns over the past 3 to 5 seconds, the prediction module generates multiple possible future paths and their probability distributions.
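To make "multiple possible future paths and their probability distributions" concrete, here is a deliberately simple sketch. It estimates speed from the observed history and rolls out three hand-picked maneuver hypotheses. The maneuver set, the accelerations, and the prior weights are all invented for illustration; a real prediction module would learn them from data. The speed is assumed to be non-negative.

```python
def predict_futures(history, horizon_s=3.0, step_s=0.5):
    """Roll out candidate futures for one obstacle.

    history: list of (t, s) samples, with t in seconds and s the distance
    travelled along the lane in meters, oldest first.
    """
    (t0, s0), (t1, s1) = history[0], history[-1]
    v = (s1 - s0) / (t1 - t0)  # average speed over the observed window

    # Hypothetical maneuvers: (label, acceleration in m/s^2, prior weight).
    hypotheses = [
        ("keep_speed", 0.0, 0.6),
        ("brake", -2.0, 0.3),
        ("accelerate", 1.0, 0.1),
    ]

    steps = int(round(horizon_s / step_s))
    futures = []
    for label, a, p in hypotheses:
        path = []
        for k in range(1, steps + 1):
            tau = k * step_s
            if a < 0 and v + a * tau < 0:
                # A braking vehicle stops; it does not roll backwards.
                tau_stop = v / -a
                s = s1 + v * tau_stop + 0.5 * a * tau_stop ** 2
            else:
                s = s1 + v * tau + 0.5 * a * tau ** 2
            path.append((t1 + tau, s))
        futures.append({"label": label, "probability": p, "path": path})
    return futures
```

The planner can then weigh each candidate path by its probability instead of betting on a single guess.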

This "imagination" of the future is crucial for safe driving. Without temporal information, the planning module can only make decisions based on a static snapshot of the current moment, akin to driving on a highway with your eyes closed and opening them only occasionally. This inevitably leads to sluggish reactions or abrupt maneuvers.

In practice, the prediction module processes time hierarchically. Short-term predictions focus on physical constraints, while medium- to long-term predictions incorporate road semantics and subject interactions. This causal reasoning based on temporal information enables autonomous vehicles to plan ahead.

For example, when the system predicts that a vehicle ahead might decelerate, the ego vehicle can smoothly release the accelerator rather than slamming on the brakes at the last moment. Temporal information ensures decision-making "foresight," making the vehicle's driving style more akin to that of an experienced human driver.

To maintain consistency in such forward-looking decisions, the system must also apply temporal smoothness constraints when planning paths. This means the vehicle's trajectory must be continuous not only in position but also in velocity (first derivative) and acceleration (second derivative) over time.

If the planning algorithm generated an optimal solution independently in every planning cycle without referencing its previous output, the vehicle would sway laterally or jerk frequently.
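A common textbook way to satisfy such constraints is a quintic (fifth-order) polynomial blend, which starts and ends with zero velocity and zero acceleration. The sketch below is one such profile for a single coordinate, for example the lateral offset during a lane change; the function name and the numbers in the usage note are illustrative assumptions.

```python
def smooth_profile(x0, x1, duration, t):
    """Return (position, velocity, acceleration) at time t.

    Moves from x0 to x1 over `duration` seconds along the quintic blend
    s(tau) = 10*tau^3 - 15*tau^4 + 6*tau^5, whose first and second
    derivatives are zero at both ends.
    """
    tau = min(max(t / duration, 0.0), 1.0)  # normalized time, clamped to [0, 1]
    d = x1 - x0
    pos = x0 + d * (10 * tau**3 - 15 * tau**4 + 6 * tau**5)
    vel = d / duration * (30 * tau**2 - 60 * tau**3 + 30 * tau**4)
    acc = d / duration**2 * (60 * tau - 180 * tau**2 + 120 * tau**3)
    return pos, vel, acc
```

For a 3.5 m lane change over 4 seconds, `smooth_profile(0.0, 3.5, 4.0, 2.0)` lands exactly halfway across with zero lateral acceleration at the midpoint, and the maneuver begins and ends without any step in velocity or acceleration.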

By introducing logical consistency over time, the system ensures that current actions continue past intentions and serve future goals. This temporal integrity is a core element for achieving robust operation in high-level autonomous driving.

Time Synchronization and Deterministic Guarantees in System Architecture

At the underlying engineering level, temporal information manifests as precise control over data stream acquisition and processing frequencies. Autonomous driving systems integrate a variety of heterogeneous sensors; cameras typically sample at 30 Hz (30 frames per second), while lidar might operate at 10 Hz or 20 Hz.

If the system cannot align data with different frequencies and generation times, severe perception errors arise. For instance, at 120 km/h the vehicle travels approximately 0.033 meters per millisecond. If a 100-millisecond synchronization error exists between sensors, the calculated obstacle position would be off by about 3.3 meters, which is unacceptable at high speeds.
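The arithmetic behind that figure is short enough to check directly. The helper below is a hypothetical convenience function, not part of any library.

```python
def position_error_m(speed_kmh: float, sync_error_ms: float) -> float:
    """Distance the vehicle covers during a given timing error."""
    speed_m_per_s = speed_kmh / 3.6          # 120 km/h is about 33.3 m/s
    return speed_m_per_s * (sync_error_ms / 1000.0)


print(round(position_error_m(120.0, 100.0), 2))  # about 3.33 meters
```

The same function shows why tighter synchronization matters: a 1-millisecond error at the same speed corresponds to only about 3 centimeters.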

To eliminate such offsets, hardware architectures incorporate a globally unified clock and timestamp mechanism. Each data packet receives a precise time label upon generation. Systems commonly use the Precision Time Protocol (PTP, IEEE 1588) to keep all processors and sensors on a common time base.

During multimodal fusion, algorithms retrieve the closest data pairs from a cached queue based on timestamps and employ motion compensation techniques to transform historical data into the current time coordinate system. This pursuit of "millisecond-level" temporal precision is a technical prerequisite for translating perception accuracy from theory to real-world vehicles.
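The two steps in that paragraph, timestamp matching and motion compensation, can be sketched as follows. This is a simplified planar version under stated assumptions: timestamps are sorted, the ego vehicle moves with constant speed and yaw rate over the gap, and the compensated point is static in the world. The function names and the 50 ms matching tolerance are hypothetical.

```python
import bisect
import math


def nearest_by_timestamp(timestamps, query_t, max_gap_s=0.05):
    """Index of the cached sample closest in time to query_t.

    timestamps must be sorted ascending. Returns None if the cache is
    empty or the closest sample is further away than max_gap_s.
    """
    if not timestamps:
        return None
    i = bisect.bisect_left(timestamps, query_t)
    candidates = [j for j in (i - 1, i) if 0 <= j < len(timestamps)]
    best = min(candidates, key=lambda j: abs(timestamps[j] - query_t))
    if abs(timestamps[best] - query_t) > max_gap_s:
        return None
    return best


def compensate_ego_motion(point, ego_speed, ego_yaw_rate, dt):
    """Re-express a static point in the ego frame dt seconds later.

    point is (x, y) in the ego frame at capture time, x forward, y left.
    """
    dyaw = ego_yaw_rate * dt
    # Ego displacement over dt, expressed in the old ego frame.
    if abs(ego_yaw_rate) < 1e-9:
        dx, dy = ego_speed * dt, 0.0
    else:
        r = ego_speed / ego_yaw_rate
        dx, dy = r * math.sin(dyaw), r * (1.0 - math.cos(dyaw))
    # Shift to the new origin, then rotate by -dyaw into the new heading.
    px, py = point[0] - dx, point[1] - dy
    c, s = math.cos(dyaw), math.sin(dyaw)
    return (c * px + s * py, -s * px + c * py)
```

For example, a point 20 m ahead that was captured 0.1 seconds ago while driving straight at 10 m/s is now 19 m ahead. Moving objects would additionally need their own estimated motion applied.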

At the software execution level, temporal information also involves latency management across the computational chain.

A complete autonomous driving closed-loop process includes sensor triggering, data transmission, neural network inference, decision generation, and actuator response. Each stage incurs some delay. If data is processed out of temporal order, the system loses effective control of the vehicle.

Therefore, current autonomous driving architectures adopt deterministic scheduling strategies to ensure critical tasks complete within fixed time windows. This metronome-like stable operation provides functional safety guarantees for the entire system, enabling it to handle various extreme and sudden situations.
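Deterministic scheduling itself lives in the operating system and middleware, but the deadline check it relies on is easy to illustrate. The sketch below adds up per-stage latencies for one cycle and compares them with a fixed time window. The stage names follow the closed-loop stages listed earlier; the function name and all numbers are made up for illustration.

```python
def check_cycle(stage_latencies_ms, budget_ms):
    """Sum per-stage latencies and report whether the cycle met its deadline."""
    total = sum(stage_latencies_ms.values())
    slowest = max(stage_latencies_ms, key=stage_latencies_ms.get)
    return {
        "total_ms": total,
        "deadline_met": total <= budget_ms,
        "slowest_stage": slowest,
    }


# Hypothetical measurements for one cycle, in milliseconds.
cycle = {
    "sensor_trigger": 5,
    "transmission": 10,
    "inference": 40,
    "decision": 20,
    "actuation": 15,
}
report = check_cycle(cycle, budget_ms=100)
```

Here the cycle totals 90 ms against a 100 ms window, so the deadline is met, and inference is flagged as the stage to watch.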

What Is the Future Path for Temporal Information?

As technology evolves, the application of temporal information will transition from "short-term caching" to "long-term memory."

Currently, mainstream perception frameworks primarily use data from the past few seconds to enhance current recognition. Future directions involve constructing city-level long-term priors. This means that when a vehicle passes the same street a second time, it can recall previous observations. This ability to convert historical observations into knowledge reserves significantly reduces online perception difficulty and enhances system performance in complex environments.
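What such a long-term prior might look like in its simplest form is a memory keyed by location. The toy sketch below buckets observations into grid cells and returns what was seen in a cell on earlier passes. The class name, the 10 m cell size, and the labels are hypothetical, and real systems would store far richer features than text labels.

```python
from collections import defaultdict


class LongTermPrior:
    """Toy location-keyed memory of past observations."""

    def __init__(self, cell_size_m: float = 10.0):
        self.cell_size_m = cell_size_m
        # cell key -> label -> number of times observed
        self.cells = defaultdict(lambda: defaultdict(int))

    def _key(self, x, y):
        # Quantize map coordinates (meters) into a grid cell index.
        return (int(x // self.cell_size_m), int(y // self.cell_size_m))

    def remember(self, x, y, label):
        self.cells[self._key(x, y)][label] += 1

    def recall(self, x, y):
        """Labels seen in this cell on earlier passes, most frequent first."""
        counts = self.cells.get(self._key(x, y), {})
        return sorted(counts, key=lambda k: (-counts[k], k))
```

On a second pass through the same cell, `recall` can hand the perception stack a hint such as "crosswalk" before the crosswalk is even visible.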

In end-to-end (E2E) technical architectures, temporal processing becomes more integrated. The system no longer artificially separates perception and prediction; instead, it directly converts continuous image sequences into driving trajectories through a unified deep learning model.

In this mode, the model automatically learns temporal features. By introducing multi-step prediction and temporal feedback mechanisms, the E2E model simulates scene evolution under different future actions, enabling it to select the safest and most efficient path from countless possibilities.
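In an E2E model this evaluation happens inside a learned network, which cannot be reproduced in a few lines. The idea of rolling out several candidate actions and picking the best safe one can still be shown with a hand-written, one-dimensional stand-in: the ego vehicle follows a lead vehicle, each candidate acceleration is simulated forward, unsafe candidates are rejected, and the one with the most progress wins. All names, thresholds, and candidate values are illustrative assumptions.

```python
def rollout(gap0, ego_v, lead_v, ego_a, lead_a, horizon_s=3.0, dt=0.1):
    """Simulate one candidate; return (minimum gap, ego progress) in meters."""
    gap, min_gap, progress = gap0, gap0, 0.0
    for _ in range(int(round(horizon_s / dt))):
        # Speeds are clamped at zero: neither vehicle reverses.
        ego_v = max(0.0, ego_v + ego_a * dt)
        lead_v = max(0.0, lead_v + lead_a * dt)
        gap += (lead_v - ego_v) * dt
        progress += ego_v * dt
        min_gap = min(min_gap, gap)
    return min_gap, progress


def choose_action(gap0, ego_v, lead_v, lead_a,
                  candidates=(-3.0, -1.0, 0.0, 1.0), safe_gap_m=10.0):
    """Pick the candidate acceleration with most progress that stays safe."""
    best = None
    for a in candidates:
        min_gap, progress = rollout(gap0, ego_v, lead_v, a, lead_a)
        if min_gap < safe_gap_m:
            continue  # this future gets too close to the lead vehicle
        if best is None or progress > best[1]:
            best = (a, progress)
    # If no candidate is safe, fall back to the hardest braking option.
    return best[0] if best else min(candidates)
```

With an 18 m gap, both vehicles at 20 m/s, and the lead vehicle predicted to brake at 2 m/s², `choose_action(18.0, 20.0, 20.0, -2.0)` selects gentle braking at -1.0 m/s², since holding speed would close the gap below 10 m and harder braking gives up progress unnecessarily.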

Final Thoughts

Temporal information is the path by which autonomous driving systems evolve from "mechanical reactions" to "intelligent understanding." It resolves occlusion and jitter issues at the perception level, gives prediction and planning the foresight they need, and underpins synchronization guarantees at the hardware level.

Thorough exploitation and precise management of temporal information not only enhance autonomous driving safety but also give machines a more human-like composure and judgment behind the wheel. In future technological iterations, efficiently storing, indexing, and utilizing vast amounts of temporal data will be a key factor in determining the intelligence ceiling of autonomous driving systems.
