Industry | Google's TurboQuant Algorithm Sparks Chain Reactions, New Tech Set to Revolutionize AI Memory Supply and Demand

04/07 2026

Preface:

Recently, Google Research's official blog unveiled a technical deep dive into the TurboQuant compression algorithm.

What started as academic discourse quickly snowballed into a seismic shift across the global tech industry and capital markets within a mere 48 hours.

Memory chip stocks worldwide took a hit, with Micron Technology's shares dipping by 3%, Western Digital falling 4.7%, and SanDisk plummeting 5.7%.

From Computational Bottlenecks to Memory Bottlenecks: The KV Caching Dilemma

To grasp why TurboQuant caused such market upheaval, it helps to first understand a long-overlooked performance bottleneck in large language model inference: the key-value cache (KV cache for short).

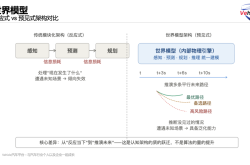

When users interact with a large AI model, the model does not produce its answer all at once. Instead, it generates the response token by token.

For each new token generated, the model must revisit all previously processed contextual information.

To avoid recomputing this history, the model stores intermediate results (the key and value vectors already computed for earlier tokens) in a temporary memory store. This is the KV cache.

When users ask AI to analyze long documents, debug complex code, or hold long multi-turn conversations, KV cache memory usage grows linearly with context length.
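The linear growth can be made concrete with a rough size estimate. The sketch below assumes the usual layout of one key vector and one value vector per token, per layer, per KV head; the model shape is hypothetical and chosen only for illustration, not taken from Gemma or Mistral.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim,
                   bytes_per_value=2, batch=1):
    """Approximate KV cache size: a key and a value vector for every
    token, in every layer, for every KV head."""
    return 2 * batch * seq_len * n_layers * n_kv_heads * head_dim * bytes_per_value

# Hypothetical model shape (assumption, for illustration only).
shape = dict(n_layers=32, n_kv_heads=8, head_dim=128)

for tokens in (4_096, 32_768, 131_072):
    gib = kv_cache_bytes(tokens, **shape) / 2**30
    print(f"{tokens:>7} tokens -> {gib:.2f} GiB")
# prints 0.50 GiB, 4.00 GiB and 16.00 GiB respectively
```

Under these assumptions every token costs 128 KiB of cache at 16-bit precision, so a 32x longer context needs 32x more memory for a single conversation.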

This memory anxiety has become a core obstacle to the commercial deployment of large AI models. The problem is not that the models lack intelligence, but that limited runtime memory cannot keep pace with their ambitions.

The Dilemma of Traditional Quantization: Solving One Problem, Creating Another

The industry hasn't been idle in tackling KV cache memory challenges.

Traditional vector quantization techniques store high-dimensional data in lower-precision data types instead of high-precision floating-point numbers, which shrinks the storage footprint.

However, this seemingly ideal solution encounters an awkward situation in practical applications—solving one problem only to create another.

Traditional quantization requires calculating and storing additional quantization parameters for each small block of data during compression, typically values such as a per-block scale and zero point. Think of these as the admission tickets and instruction manuals needed to decode the data later.

These quantization parameters are themselves memory overhead, and the burden grows as blocks are made finer in pursuit of higher precision.

Consequently, a significant portion of memory savings from compression is consumed by these parameters, substantially reducing actual benefits.
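A back-of-the-envelope calculation shows how per-block parameters eat into the savings. The sketch below assumes a generic block-wise scheme that stores one 16-bit scale and one 16-bit zero point per block; these sizes and the block sizes are illustrative assumptions, not figures from the article.

```python
def effective_bits(payload_bits, block_size, scale_bits=16, zero_point_bits=16):
    """Average bits stored per value once per-block metadata is counted."""
    return payload_bits + (scale_bits + zero_point_bits) / block_size

for block_size in (128, 32, 16):
    total = effective_bits(3, block_size)
    overhead = (total - 3) / total
    print(f"block={block_size:>3}: {total:.2f} bits/value, "
          f"{overhead:.0%} of it metadata")
# block=128: 3.25 bits/value,  8% of it metadata
# block= 32: 4.00 bits/value, 25% of it metadata
# block= 16: 5.00 bits/value, 40% of it metadata
```

With 32-value blocks, a nominal 3-bit format really costs 4 bits per value under these assumptions, which is why removing the per-block parameters matters at very low bit widths.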

Worse still, many methods need calibration datasets to adapt to the model, and in severe cases require fine-tuning or even complete retraining.

Thus, while quantization techniques look promising on paper, truly zero-barrier, zero-loss solutions remain rare in commercial deployments.

TurboQuant's Technological Breakthrough: 6x Compression and 8x Speedup

Against this backdrop, Google Research's TurboQuant algorithm shines brightly.

The core innovation lies in its complete restructuring of vector quantization's underlying logic, achieving near-lossless compression through two key technologies working in tandem.

① PolarQuant (Polar Coordinate Quantization): Traditional methods typically use Cartesian coordinates to describe high-dimensional vector data, resulting in scattered, disordered numerical distributions that hinder efficient compression.

PolarQuant takes a different approach by converting data from Cartesian to polar coordinates, leveraging polar coordinates' natural normalization properties to map data onto fixed circular grids with known boundaries.

This transformation organizes previously scattered numerical distributions into regular, concentrated patterns, fundamentally eliminating dependence on extra quantization parameters.

With no need for expensive admission tickets and instruction manuals, the data becomes compressible as it stands.
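The idea of a fixed grid with known boundaries can be illustrated in two dimensions. This is a deliberately simplified sketch, not Google's PolarQuant: it converts one coordinate pair to polar form and snaps the angle to a fixed 4-bit grid (an illustrative choice). Because an angle always lies in a known range, the grid needs no per-block scale or zero point. The radius is kept in full precision here for simplicity; the real algorithm works on high-dimensional vectors and is more involved.

```python
import math

ANGLE_BITS = 4                   # illustrative choice, not from the source
LEVELS = 2 ** ANGLE_BITS
STEP = 2 * math.pi / LEVELS      # fixed spacing: angles always lie in [-pi, pi]

def to_polar(x, y):
    return math.hypot(x, y), math.atan2(y, x)

def quantize_angle(theta):
    """Map an angle to an integer code on a fixed circular grid."""
    return round(theta / STEP) % LEVELS

def dequantize_angle(code):
    return code * STEP

def roundtrip(x, y):
    """Quantize only the angle, then convert back to Cartesian coordinates."""
    r, theta = to_polar(x, y)
    theta_hat = dequantize_angle(quantize_angle(theta))
    return r * math.cos(theta_hat), r * math.sin(theta_hat)

x_hat, y_hat = roundtrip(3.0, 4.0)
print(round(x_hat, 3), round(y_hat, 3))
```

The same `STEP` serves every input, so nothing besides the integer code has to be stored for the angle, and the angular error never exceeds half a grid step.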

② QJL (Quantized Johnson-Lindenstrauss Transform): All compression processes inevitably introduce minor precision losses, and PolarQuant is no exception.

QJL acts as a mathematical error corrector, spending just 1 bit of the compression budget to capture and offset the residual error left by the first stage.

This resembles quality inspectors in precision manufacturing who correct minor errors on production lines, ensuring final products—in this case, AI models' attention score calculations—maintain high precision.
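How a single sign bit can still preserve attention scores is easier to see in code. The following is a minimal sketch of a generic 1-bit sign-projection inner-product estimator in the spirit of a quantized Johnson-Lindenstrauss transform; it is not Google's implementation. It applies the estimator to a raw key vector rather than to a PolarQuant residual, and all function names are hypothetical.

```python
import math
import random

def make_projection(m, d, seed=0):
    """m random Gaussian directions in d dimensions, shared by keys and queries."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def encode_key(S, k):
    """Keep only the sign of each projection (1 bit each) plus the key's norm."""
    signs = [1 if dot(row, k) >= 0 else -1 for row in S]
    return signs, math.sqrt(dot(k, k))

def estimate_dot(S, q, encoded_key):
    """Estimate <q, k> from a full-precision query and the 1-bit key code."""
    signs, k_norm = encoded_key
    acc = sum(dot(row, q) * s for row, s in zip(S, signs))
    return math.sqrt(math.pi / 2) * k_norm * acc / len(S)

k = [1.0, 2.0, -1.0, 0.5, 0.0, 1.5, -2.0, 1.0]
q = [0.5, 1.0, -1.0, 0.0, 1.0, 1.0, -1.0, 2.0]
S = make_projection(m=4000, d=len(k))
print("exact:", dot(q, k))
print("estimate:", round(estimate_dot(S, q, encode_key(S, k)), 2))
```

The factor `sqrt(pi / 2)` makes the estimate unbiased for Gaussian projections. The large `m` is used only so the estimate visibly converges in a toy example; it is not a realistic bit budget.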

TurboQuant's workflow can be understood as follows:

PolarQuant performs high-quality primary compression, preserving vectors' core concepts and features.

QJL handles the small residual errors, ensuring that results computed on compressed data closely match the originals.

Through this two-stage combination, TurboQuant achieves near-lossless compression at a total bit width of 3 bits.

The process requires no model retraining, no calibration datasets, and is extremely GPU-friendly—truly plug-and-play.

Google's research team conducted rigorous benchmark tests on two mainstream open-source large models, Gemma and Mistral, with exciting results.

TurboQuant compresses the KV cache directly to a precision of just 3 bits per channel. Compared with conventional 16-bit or 32-bit floating-point storage, memory usage falls by a factor of at least 6 (a reduction of roughly 83%).
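The link between the compression factor and the percentage saved is simple arithmetic, sketched here. The 16 GiB cache size is a hypothetical example, not a measurement from the benchmarks.

```python
def reduction_percent(compression_ratio):
    """Share of memory saved when data shrinks by the given factor."""
    return (1 - 1 / compression_ratio) * 100

cache_gib = 16.0                         # hypothetical uncompressed KV cache
print(f"{reduction_percent(6):.1f}%")    # 83.3%
print(f"{cache_gib / 6:.2f} GiB")        # 2.67 GiB
```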

On NVIDIA H100 GPUs, a 4-bit TurboQuant scheme computed attention 8x faster than the unquantized 32-bit baseline.

Capital Markets' Rollercoaster: New Technology Reshapes Supply-Demand Dynamics

Market reactions to TurboQuant's release resembled an emotional rollercoaster.

On announcement day, U.S. memory chip stocks faced collective sell-offs, with major manufacturers like Micron, Western Digital, and SanDisk all declining.

Some analysts estimated that the entire memory sector lost approximately $620 billion in market value in a single day.

Once the initial panic subsided, analysts began a more nuanced evaluation of TurboQuant's actual scope of impact.

Morgan Stanley's research report noted clear limitations to TurboQuant's applicability: It primarily affects KV caching during inference stages without impacting model weight storage requirements or training processes.

This means the new technology's efficiency gains essentially improve hardware utilization per unit, enabling the same hardware to handle longer contexts or serve more concurrent users rather than eliminating memory demand entirely.
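The "same hardware, longer contexts" argument can be checked with a small capacity calculation. The sketch assumes a fixed memory budget reserved for KV cache and a per-token cache cost; both numbers are hypothetical, and only the 6x factor comes from the figures quoted above.

```python
def max_context_tokens(memory_budget_bytes, bytes_per_token):
    """How many tokens of KV cache fit in a fixed memory budget."""
    return memory_budget_bytes // bytes_per_token

budget = 16 * 2**30                          # hypothetical: 16 GiB for KV cache
per_token_fp16 = 128 * 2**10                 # hypothetical: 128 KiB per token
per_token_compressed = per_token_fp16 // 6   # applying the quoted 6x factor

before = max_context_tokens(budget, per_token_fp16)
after = max_context_tokens(budget, per_token_compressed)
print(before, after, round(after / before, 2))
```

The budget itself does not shrink. The same memory simply holds about six times as many tokens, which can be spent on longer contexts or on more concurrent users.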

Some analysts referenced economics' famous Jevons Paradox as a framework: When resource usage efficiency improves, prices drop, and demand may actually increase.

If TurboQuant significantly reduces operating costs, it could unlock AI application scenarios that were previously too expensive to deploy, driving up memory demand from a different direction.

In the near term, if this technology is widely adopted, the growth of the global AI industry's demand for memory chips might temporarily slow.

Over the longer term, however, the opposite could occur.

Lower inference costs make more application scenarios commercially viable.

Ultra-long document AI analysis, previously unfeasible due to costs, might now become accessible.

AI applications on edge devices and mobile terminals could also gain broader development space due to reduced memory usage.

This demand-creation effect might ultimately push total memory consumption higher rather than lower.

Additionally, if TurboQuant is successfully carried over to vector retrieval, infrastructure costs in the search industry could fall significantly.

Conclusion:

Once memory ceases to be a rigid constraint, the rules of the entire AI industry quietly change.

TurboQuant suggests that aggressive algorithmic optimization, and not only stacking more hardware, can deliver revolutionary efficiency gains, and may even upend the hardware-stacking paradigm.
