How to Create an Autonomous Driving Simulation Environment That Closely Mirrors Real-World Traffic?

04/07 2026

The progress of autonomous driving technology depends heavily on how efficient and thorough its validation process is. Accumulating miles on real roads is essential, but real-world testing alone cannot adequately verify safety, especially for extreme weather, unexpected incidents, and complex interactions among many traffic participants.

Hence, simulation testing has become pivotal, offering a controlled, safe, and efficient virtual environment. However, for the simulation system to transcend being a mere supplementary tool and become a dependable instrument capable of guiding real-world driving, the challenge of synchronizing the simulated environment with the real world must be tackled.

Digital Twin: Bridging the Gap Between Virtual and Physical Realms

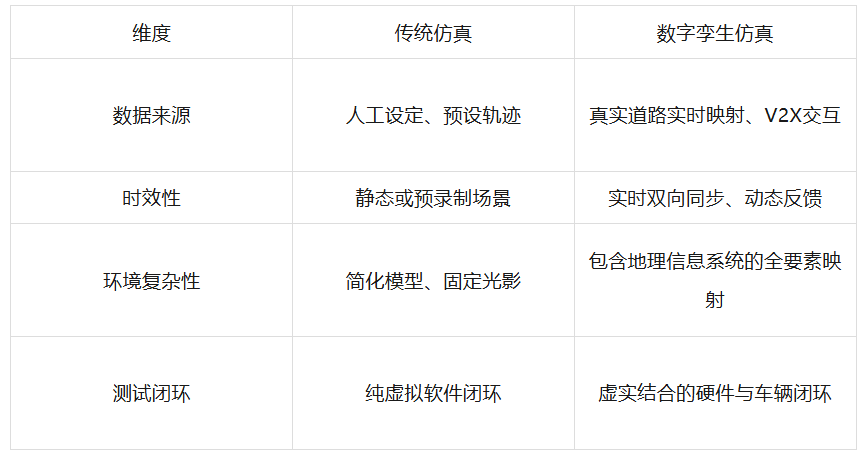

To build a simulation environment that mirrors real-world scenarios, the first step is to establish a tightly synchronized base environment. Digital twin technology is central to this effort. It goes beyond building static 3D models: through real-time data exchange between physical entities and their virtual counterparts, it enables real-time monitoring, predictive optimization, and dynamic control of those physical entities.

In autonomous driving simulation, the virtual environment must not only look like the real world but also stay consistent with it in logic and data flow. Digital twin technology lets engineers represent the entire lifecycle of vehicle systems virtually, which makes it well suited to autonomous driving testing.

The implementation of this technology hinges on a multi-layered architecture encompassing field testing layers, network transmission layers, and experimental testing layers, among others.

At the field testing layer, the vehicle under test operates in a real-world test environment, and its onboard sensors transmit real-time data on its location, speed, and surroundings over the network. At the same time, a cloud database selects or generates matching virtual scenarios based on this incoming data.

This integrated virtual-real framework lets developers run realistic tests of connected autonomous vehicles in complex virtual road scenarios while the physical vehicle stays within a confined test area, with the virtual scenario mapped onto that physical space.
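To make the field-testing data flow more concrete, here is a minimal Python sketch of how a cloud service might pick a virtual scenario from incoming vehicle telemetry. The `VehicleState` and `Scenario` types, the 50 m search radius, and the speed-band matching rule are all hypothetical illustrations, not part of any system described above.

```python
import math
from dataclasses import dataclass

@dataclass
class VehicleState:
    """Hypothetical telemetry message sent by the vehicle under test."""
    x: float      # east position in a local map frame, metres
    y: float      # north position, metres
    speed: float  # metres per second

@dataclass
class Scenario:
    """Hypothetical library entry: a virtual scenario anchored at a map location."""
    name: str
    x: float
    y: float
    min_speed: float
    max_speed: float

def select_scenario(state, library, radius=50.0):
    """Return the closest scenario within `radius` metres whose speed band
    contains the vehicle's current speed, or None if nothing matches
    (a real system might then generate a new scenario instead)."""
    best, best_dist = None, radius
    for sc in library:
        dist = math.hypot(sc.x - state.x, sc.y - state.y)
        if dist <= best_dist and sc.min_speed <= state.speed <= sc.max_speed:
            best, best_dist = sc, dist
    return best

library = [
    Scenario("pedestrian_crossing", 10.0, 0.0, 0.0, 15.0),
    Scenario("cut_in", 30.0, 0.0, 10.0, 40.0),
]
choice = select_scenario(VehicleState(12.0, 0.0, 20.0), library)
```

At 20 m/s the nearby pedestrian scenario is skipped because its speed band does not match, so the cut-in scenario is chosen.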

The digital twin system can provide foundational geospatial information services, such as the precise locations of buildings, road markings, and urban features, while also supporting real-time trajectory tracking and historical trajectory management for moving objects.
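Real-time tracking and historical queries can share one time-ordered store. The sketch below is a hypothetical in-memory `TrajectoryStore`, assuming each moving object reports `(t, x, y)` samples; a production twin would use a spatial-temporal database instead.

```python
import bisect
from collections import defaultdict

class TrajectoryStore:
    """Minimal in-memory trajectory store (hypothetical).

    Samples for each object are kept sorted by timestamp, so the latest
    position (real-time tracking) and a time window (historical query)
    can both be retrieved with binary search.
    """

    def __init__(self):
        self._times = defaultdict(list)   # object id -> sorted timestamps
        self._points = defaultdict(list)  # object id -> (x, y), aligned with _times

    def add(self, obj_id, t, x, y):
        times = self._times[obj_id]
        i = bisect.bisect_right(times, t)  # keeps order even for late-arriving samples
        times.insert(i, t)
        self._points[obj_id].insert(i, (x, y))

    def latest(self, obj_id):
        """Most recent (t, x, y) sample, or None if the object is unknown."""
        times = self._times.get(obj_id)
        if not times:
            return None
        return (times[-1], *self._points[obj_id][-1])

    def window(self, obj_id, t0, t1):
        """All (t, x, y) samples with t0 <= t <= t1."""
        times = self._times.get(obj_id, [])
        lo = bisect.bisect_left(times, t0)
        hi = bisect.bisect_right(times, t1)
        return [(times[i], *self._points[obj_id][i]) for i in range(lo, hi)]

store = TrajectoryStore()
store.add("ped_1", 0.0, 0.0, 0.0)
store.add("ped_1", 1.0, 1.2, 0.0)
store.add("ped_1", 0.5, 0.6, 0.0)  # arrives late but is inserted in order
```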

To further augment realism, the incorporation of V2X technology is vital. V2X not only provides non-line-of-sight perception information but also acts as a conduit for simulation data transmission. Through this wireless communication method, sensor data can be uploaded, while information about virtual scenarios can be accurately relayed to test vehicles.

Characteristics of Multi-Dimensional Simulations

Dynamic modeling is paramount when building a digital twin model: the model must accurately reflect the dynamic attributes of physical entities in both time and space. When a vehicle on a real road turns or accelerates, its virtual counterpart must respond with millisecond-level latency and compute, in real time, the resulting changes in lighting, perspective, and occlusion.

This high-fidelity scene construction ensures that every meter traversed by the autonomous driving system in simulation holds substantial real-world relevance.
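One common way to hide the transport delay between a real vehicle and its twin is to extrapolate the last measured state to the current render time. The sketch below assumes a constant-velocity motion model and a hypothetical 10 ms freshness budget; neither comes from the text above.

```python
from dataclasses import dataclass

@dataclass
class TwinState:
    t: float   # measurement timestamp, seconds
    x: float   # metres
    y: float
    vx: float  # metres per second
    vy: float

def extrapolate(state, now):
    """Predict the physical vehicle's position at `now` with a constant-velocity
    model, compensating for the delay between measurement and rendering."""
    dt = now - state.t
    return state.x + state.vx * dt, state.y + state.vy * dt

def within_budget(state, now, budget_s=0.010):
    """True if the measurement is fresh enough for the assumed latency budget."""
    return 0.0 <= now - state.t <= budget_s

s = TwinState(t=1.000, x=0.0, y=0.0, vx=20.0, vy=0.0)
pose = extrapolate(s, 1.005)  # 5 ms later, the car has moved about 0.1 m
```

At highway speed even a few milliseconds correspond to centimetre-scale position error, which is why the latency requirement matters for occlusion and perspective calculations.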

Risk Reconstruction: Deriving Survival Rules from Accident Records

Environment construction is merely the initial step; the essence of the simulation world lies in whether the traffic events it generates are representative. The most formidable aspect of real-world driving is managing extreme scenarios, known as "edge cases," such as sudden accidents, jaywalking pedestrians, or combinations of severely adverse weather conditions.

To align simulations more closely with real-world traffic scenarios, a data-driven approach has been explored, involving the extraction of risk elements from real traffic accident cases and their logical generalization.

This method leverages authoritative data sources, such as in-depth vehicle accident investigation systems, dissecting each real collision or near-miss incident into specific risk elements. These elements can be categorized into continuous types (e.g., vehicle speed, collision time, distance) and discrete types (e.g., weather type, road grade, obstacle type).
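A decomposed accident record can be represented as a simple record with continuous and discrete fields, and the per-element distributions summarized over many records. The field names, example values, and the choice of mean/standard deviation versus frequency counts below are hypothetical; the three records are made-up illustrations, not real accident data.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean, stdev

CONTINUOUS = ("speed_kmh", "ttc_s", "distance_m")
DISCRETE = ("weather", "road_grade", "obstacle")

@dataclass
class AccidentCase:
    """One accident or near-miss decomposed into risk elements (hypothetical schema)."""
    speed_kmh: float   # continuous: speed at the critical moment
    ttc_s: float       # continuous: time to collision
    distance_m: float  # continuous: gap to the conflicting object
    weather: str       # discrete: e.g. "clear", "rain", "fog"
    road_grade: str    # discrete: e.g. "highway", "urban"
    obstacle: str      # discrete: e.g. "car", "pedestrian"

def summarize(cases):
    """Mean and sample standard deviation for continuous elements,
    frequency counts for discrete ones (needs at least two cases)."""
    summary = {}
    for name in CONTINUOUS:
        values = [getattr(c, name) for c in cases]
        summary[name] = (mean(values), stdev(values))
    for name in DISCRETE:
        summary[name] = Counter(getattr(c, name) for c in cases)
    return summary

cases = [
    AccidentCase(80.0, 1.5, 20.0, "rain", "highway", "car"),
    AccidentCase(60.0, 2.0, 15.0, "clear", "urban", "pedestrian"),
    AccidentCase(100.0, 1.0, 30.0, "rain", "highway", "car"),
]
stats = summarize(cases)
```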

By statistically analyzing the distribution of these risk elements, engineers can pinpoint the root causes of accidents. Combinatorial testing tools can then recombine these risk elements to generate a large number of logical scenario cases.
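The text does not name a specific combinatorial tool, so the following is only a sketch of one well-known technique, greedy all-pairs (pairwise) generation: it picks test cases until every pair of values from any two discrete risk elements appears at least once. It scores the full Cartesian product, so it only suits small parameter spaces; the parameter names and levels are hypothetical.

```python
from itertools import combinations, product

def pairwise(params):
    """Greedy all-pairs test generation.

    params: dict mapping a parameter name to its list of levels.
    Returns test cases (dicts) such that every pair of levels from any
    two parameters occurs in at least one case.
    """
    names = list(params)
    uncovered = {
        ((a, va), (b, vb))
        for a, b in combinations(names, 2)
        for va in params[a]
        for vb in params[b]
    }
    candidates = [dict(zip(names, vals)) for vals in product(*params.values())]

    def gain(case):
        # number of still-uncovered pairs this case would cover
        return sum(((a, case[a]), (b, case[b])) in uncovered
                   for a, b in combinations(names, 2))

    tests = []
    while uncovered:
        best = max(candidates, key=gain)
        tests.append(best)
        uncovered -= {((a, best[a]), (b, best[b])) for a, b in combinations(names, 2)}
    return tests

levels = {
    "weather": ["clear", "rain", "fog"],
    "road": ["highway", "urban"],
    "obstacle": ["car", "pedestrian", "cyclist"],
}
suite = pairwise(levels)
```

The resulting suite is noticeably smaller than the 18-case full product while still exercising every two-factor interaction, which is the coverage-versus-cost trade-off the paragraph describes.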

For instance, in a highway lane-changing scenario, relying solely on human experience might only permit the design of a few common cut-in angles. However, through a data-driven approach, hundreds of variant scenarios can be derived from accident case data, encompassing different road surface friction coefficients, aggressive cut-in behaviors by following vehicles, and varying degrees of visual obstruction.
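One simple way to derive many variants from a handful of accident-derived cut-in cases is to resample a real case and perturb its continuous parameters within physical bounds. This bootstrap-with-noise scheme, the parameter names, and the bounds are all assumptions made for illustration; the text does not specify the generation method.

```python
import random

# Hypothetical bounds for the continuous parameters of a highway cut-in.
BOUNDS = {
    "friction": (0.1, 1.0),       # road-surface friction coefficient
    "cut_in_gap_m": (2.0, 40.0),  # longitudinal gap when the other vehicle cuts in
    "occlusion": (0.0, 0.9),      # fraction of the cutting-in vehicle hidden from view
}

def generate_variants(seed_cases, n, jitter=0.1, rng=None):
    """Create `n` variants by resampling seed cases and perturbing each
    parameter by up to +/- `jitter` of its range, clamped to BOUNDS."""
    rng = rng or random.Random(0)
    variants = []
    for _ in range(n):
        base = rng.choice(seed_cases)
        variant = {}
        for key, (lo, hi) in BOUNDS.items():
            delta = rng.uniform(-jitter, jitter) * (hi - lo)
            variant[key] = min(hi, max(lo, base[key] + delta))
        variants.append(variant)
    return variants

seeds = [
    {"friction": 0.4, "cut_in_gap_m": 8.0, "occlusion": 0.3},
    {"friction": 0.8, "cut_in_gap_m": 15.0, "occlusion": 0.0},
]
variants = generate_variants(seeds, 200)
```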

The logical scenarios generated through this combinatorial method can enhance the coverage of scenarios involving the interplay of multiple factors, achieving a balance between discriminative power and coverage in scenario generation.

This transformation from real accidents to logical scenarios and then to simulation cases essentially equips the autonomous driving system with an "examination question bank." Compared to aimlessly navigating virtual roads, this approach ensures that the risks encountered by vehicles in simulation are grounded in real-world lessons.

This not only speeds up testing but also ensures that the system's handling of rare but dangerous situations has actually been validated.

Physical Perception: Simulating the Precise World of Wave-Particle Interactions

It is insufficient for a simulation system to merely visually deceive the human eye. The "eyes" of an autonomous driving system are sensors such as LiDAR, millimeter-wave radar, and high-definition cameras.

These sensors perceive the world in accordance with stringent physical laws. To align simulations more closely with real-world scenarios, it is imperative to simulate the interaction processes between electromagnetic waves, photons, and environmental objects at the physical level.

LiDAR works by emitting laser pulses and receiving the echoes scattered back by objects. When the beam encounters rain, fog, or snowflakes, its performance deteriorates significantly. Raindrops (roughly 0.1 to 5 mm across) are far larger than the laser wavelength (commonly 905 nm or 1550 nm), so they scatter the beam strongly; energy is absorbed or deflected, and the returning echoes become weak and noisy.

High-fidelity simulation must therefore model the signal attenuation caused by thermal fluctuations in the medium or by rainfall, and generate three-dimensional point clouds with realistic noise.
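A minimal way to express this attenuation is a two-way Beer-Lambert factor exp(-2·α·R) combined with 1/R² spreading, dropping echoes that fall below a detection threshold and adding range noise to the rest. The extinction coefficient `alpha_per_m`, the threshold, and the noise level are assumed inputs here, not calibrated values; real sensor models are considerably more detailed.

```python
import math
import random

def simulate_returns(points, alpha_per_m, reflectivity=0.5, p0=1.0,
                     threshold=1e-5, range_noise_m=0.02, rng=None):
    """Attenuate LiDAR returns and add range noise.

    points: list of (x, y, z) in metres with the sensor at the origin.
    alpha_per_m: extinction coefficient of the medium (e.g. rain), 1/m (assumed input).
    Returns (x, y, z, received_power) for echoes above the detection threshold.
    """
    rng = rng or random.Random(0)
    out = []
    for x, y, z in points:
        r = math.sqrt(x * x + y * y + z * z)
        if r == 0.0:
            continue
        # 1/R^2 spreading times two-way (out and back) exponential extinction
        power = p0 * reflectivity / r**2 * math.exp(-2.0 * alpha_per_m * r)
        if power < threshold:
            continue  # echo too weak: the point is lost, as in heavy rain
        scale = (r + rng.gauss(0.0, range_noise_m)) / r
        out.append((x * scale, y * scale, z * scale, power))
    return out

targets = [(10.0, 0.0, 0.0), (50.0, 0.0, 0.0), (150.0, 0.0, 0.0)]
clear = simulate_returns(targets, alpha_per_m=0.0)
rain = simulate_returns(targets, alpha_per_m=0.01)  # the 150 m return drops out
```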

Using deep learning networks such as PointNet++, the simulator can extract local features for each point, generate detection boxes that closely resemble real-sensor output from the predicted foreground features, and even refine box positions in bird's-eye view (BEV) space.
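A full detector is out of scope here, but the step that lets PointNet++ extract *local* features can be sketched: each set-abstraction layer first picks well-spread centroids with farthest point sampling, then groups neighbours within a radius (ball query). The pure-Python version below shows only that sampling and grouping stage; the learned per-group encoder is omitted.

```python
import math

def farthest_point_sampling(points, k):
    """Pick k well-spread centroid indices, as in a PointNet++ set-abstraction layer."""
    chosen = [0]  # start from the first point
    # distance from every point to its nearest chosen centroid so far
    dist = [math.dist(p, points[0]) for p in points]
    while len(chosen) < min(k, len(points)):
        idx = max(range(len(points)), key=dist.__getitem__)
        chosen.append(idx)
        for i, p in enumerate(points):
            dist[i] = min(dist[i], math.dist(p, points[idx]))
    return chosen

def ball_query(points, centroid_idx, radius, max_samples):
    """Group up to max_samples point indices within `radius` of each centroid;
    a shared small network would then encode each group into a local feature."""
    groups = []
    for c in centroid_idx:
        nbrs = [i for i, p in enumerate(points) if math.dist(p, points[c]) <= radius]
        groups.append(nbrs[:max_samples])
    return groups

cloud = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (10.0, 0.0, 0.0), (10.1, 0.0, 0.0), (5.0, 5.0, 0.0)]
centroids = farthest_point_sampling(cloud, 3)
groups = ball_query(cloud, [0, 3], radius=0.5, max_samples=8)
```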

Millimeter-wave radar, on the other hand, possesses distinct physical characteristics. It emits electromagnetic waves with longer wavelengths that can penetrate tiny water droplets in rain and fog but produce multipath reflections when encountering metallic objects or complex urban structures. The simulation system must accurately simulate the reflection coefficients of these electromagnetic waves on different material surfaces.
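The simplest textbook starting point for material-dependent reflection is the Fresnel coefficient at normal incidence from air onto a flat, non-magnetic surface, Γ = (1 − √εr) / (1 + √εr), with power reflectance |Γ|². The sketch below uses that formula; the permittivity values in `MATERIALS` are illustrative placeholders, not measured 77 GHz data, and real radar simulators also model roughness, incidence angle, and multipath.

```python
import cmath

def normal_incidence_reflectance(eps_r):
    """Power reflectance |Gamma|^2 for a plane wave hitting a flat, non-magnetic
    half-space from air at normal incidence. eps_r may be complex (lossy);
    None stands for a perfect conductor such as bare metal."""
    if eps_r is None:
        return 1.0  # a perfect electric conductor reflects all incident power
    n = cmath.sqrt(eps_r)
    gamma = (1 - n) / (1 + n)
    return abs(gamma) ** 2

# Placeholder relative permittivities, NOT measured millimeter-wave values.
MATERIALS = {"metal": None, "concrete": 5.0 - 0.5j, "glass": 6.0 - 0.1j, "foliage": 2.0 - 0.5j}
reflectance = {name: normal_incidence_reflectance(eps) for name, eps in MATERIALS.items()}
```

Metal dominates the returns, which is one reason metallic structures produce strong multipath in urban scenes.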

In physical simulation, objects cannot simply be labeled "visible" or "invisible." The system must compute the signal-to-noise ratio of each pixel or each point-cloud return under the given environmental conditions.
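Instead of a hard visible/invisible flag, each return can carry an SNR and a graded detection probability. The SNR formula below is standard; the logistic detection curve and its `threshold_db` and `softness_db` parameters are a hypothetical stand-in for a calibrated sensor model.

```python
import math

def snr_db(signal_power, noise_power):
    """Signal-to-noise ratio in decibels."""
    return 10.0 * math.log10(signal_power / noise_power)

def detection_probability(snr, threshold_db=10.0, softness_db=2.0):
    """Hypothetical smooth detection model: a logistic curve centred on
    threshold_db rather than a hard visible/invisible cut-off."""
    return 1.0 / (1.0 + math.exp(-(snr - threshold_db) / softness_db))

p_strong = detection_probability(snr_db(100.0, 1.0))  # 20 dB: almost always detected
p_edge = detection_probability(snr_db(10.0, 1.0))     # 10 dB: detected half the time
```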

For instance, direct strong sunlight can overexpose camera images, and this is precisely the kind of defect a simulation must reproduce. With such physics-level modeling, the perception algorithms of autonomous driving systems learn to extract key information from imperfect signals and therefore tolerate faults better in the real world.
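Overexposure can be reproduced at the pixel level by clipping accumulated charge at the sensor's full-well capacity before quantization. The sketch assumes irradiance is already expressed in photo-electrons per second and uses a hypothetical 10,000 e⁻ full well and 8-bit output.

```python
def expose(irradiance, exposure_s, full_well=10000.0, bits=8):
    """Convert per-pixel irradiance (photo-electrons per second, assumed units)
    into digital codes, saturating at the full-well capacity. Every pixel that
    saturates maps to the maximum code, so detail in bright regions is lost."""
    max_code = 2**bits - 1
    codes = []
    for e_rate in irradiance:
        electrons = min(e_rate * exposure_s, full_well)  # charge saturates here
        codes.append(round(electrons / full_well * max_code))
    return codes

# A road-sign pixel, a bright sky pixel, and direct sun in the same frame.
frame = expose([2e5, 1e6, 5e6], exposure_s=0.01)
```

The sky and the sun become indistinguishable, which is the kind of degraded input the perception stack should see in training.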

Behavioral Evolution: Infusing the Virtual World with a Social Essence

Real-world traffic is a social process full of interaction and mutual expectation. Pedestrians judge whether to cross based on an approaching vehicle's speed, and drivers infer the lane-change intention of the vehicle ahead from subtle lateral drift. To make simulation more realistic, the behavioral models of traffic participants must evolve from simplistic "uniform linear motion" to human-like, multimodal behavior.
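The pedestrian example can be written as a basic gap-acceptance rule: cross only if the vehicle will arrive later than the time needed to clear the lane plus a safety margin. The default walking speed of 1.4 m/s and the 1 s margin are assumed values; learned behavior models would replace this fixed rule with a data-driven distribution.

```python
def pedestrian_decides_to_cross(vehicle_distance_m, vehicle_speed_mps,
                                road_width_m, walk_speed_mps=1.4,
                                safety_margin_s=1.0):
    """Simple gap-acceptance rule for a simulated pedestrian (hypothetical defaults)."""
    crossing_time = road_width_m / walk_speed_mps
    if vehicle_speed_mps <= 0:
        return True  # vehicle stopped or moving away
    time_to_arrival = vehicle_distance_m / vehicle_speed_mps
    return time_to_arrival > crossing_time + safety_margin_s

slow_car = pedestrian_decides_to_cross(50.0, 10.0, road_width_m=3.5)  # 5.0 s away
fast_car = pedestrian_decides_to_cross(50.0, 20.0, road_width_m=3.5)  # 2.5 s away
```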

The industry has now begun using large-scale behavioral datasets to train simulated traffic participants. These datasets cover not only ordinary vehicles but also vulnerable road users such as pedestrians, cyclists, and scooter riders, as well as the distinctive behaviors of special roles such as police officers and construction workers.

Traditional regression models tend to behave rigidly across diverse scenarios because they produce a single averaged prediction for each situation, whereas emerging diffusion models show considerable promise in capturing multimodal behavior. In the same traffic situation, virtual traffic participants can then generate multiple plausible actions drawn from the data distribution, and their behavior becomes more human-like as more data accumulates.
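The difference between averaging and sampling is easy to see on a toy example. The code below is not a diffusion model; it only illustrates the failure mode such generative models address. A squared-error regressor converges to the mean of the observed outcomes, while a generative model samples from their distribution. The logged offsets are made-up illustration data.

```python
import random
from statistics import mean

# Made-up logged lateral offsets (m) at the end of an avoidance manoeuvre from the
# same starting situation: drivers either swerve left (+3) or right (-3).
observed = [3.0] * 50 + [-3.0] * 50

# A model trained with squared-error loss converges to the conditional mean.
regression_prediction = mean(observed)  # 0.0: straight ahead, which nobody did

def sample_behaviour(rng):
    """Stand-in for a generative model: draw from the empirical distribution,
    so each sample is a manoeuvre that actually occurs in the data."""
    return rng.choice(observed)

rng = random.Random(42)
samples = [sample_behaviour(rng) for _ in range(1000)]
```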

Reward-driven reinforcement learning also allows virtual drivers and pedestrians to be trained efficiently in simulation. Instead of following preset trajectories, these agents learn to complete their trips through cooperation or competition based on feedback from the environment. This deeper behavioral modeling means the autonomous driving system faces not rigidly rule-abiding robots but "living" agents with unpredictability and social awareness.
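The behavior such agents learn is shaped largely by the reward they receive each step. The function below is a hypothetical example of a shaped reward for a simulated road user; the terms and weights are illustrative assumptions, and any RL algorithm (for example PPO or Q-learning) could be trained against it.

```python
from dataclasses import dataclass

@dataclass
class StepOutcome:
    progress_m: float   # distance advanced toward the goal this step
    collided: bool
    reached_goal: bool
    min_gap_m: float    # closest distance to any other agent this step

def reward(o, w_progress=1.0, collision_penalty=-100.0, goal_bonus=50.0,
           comfort_gap_m=2.0, w_gap=-1.0):
    """Hypothetical shaped reward: encourage progress and reaching the goal,
    penalise collisions heavily, and mildly penalise cutting closer than
    comfort_gap_m to other agents."""
    if o.collided:
        return collision_penalty
    r = w_progress * o.progress_m
    if o.min_gap_m < comfort_gap_m:
        r += w_gap * (comfort_gap_m - o.min_gap_m)
    if o.reached_goal:
        r += goal_bonus
    return r
```

Because tailgating costs a little and collisions cost a lot, agents trained this way tend to negotiate gaps rather than follow fixed paths, which is where the social, less predictable behavior comes from.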

Final Thoughts

Constructing an autonomous driving simulation that closely mirrors real-world traffic scenarios is a comprehensive systems engineering endeavor. It necessitates the creation of a spatiotemporal framework that seamlessly blends virtual and real elements through digital twin technology, the infusion of challenging logical content using real accident data, the recreation of the raw world perceived by sensors with the aid of precise physical models, and the endowment of virtual agents with behavioral logic through advanced AI algorithms.

Only when the simulation system closely approximates reality in all four dimensions (environment, events, perception, and interaction) can every line of the test report it generates serve as a passport for autonomous vehicles onto real roads. In this process, the technology no longer pursues visual appeal alone; it seeks to reproduce real-world laws faithfully and at the deepest level.
