Rising Against the Trend: Where Does Zhipu's Zhang Peng Draw His Confidence?

04/10 2026

On April 8, Zhipu implemented its third price hike of the year.

This follows a 30% increase in coding subscription plans in February and a 20% rise in flagship API pricing in March. Yesterday, OpenRouter data showed another 10% API price increase for the GLM series, coinciding with the launch of the flagship open-source model GLM-5.1. After the adjustment, its cache-hit token pricing for coding scenarios approaches Claude Sonnet 4.6 levels. By market close, Zhipu's stock had surged 11.49% to HK$868, valuing the company at HK$387.2 billion.

Meanwhile, annualized API revenue (ARR) soared 60x to RMB 1.7 billion over 12 months, while token usage jumped 400% despite cumulative price increases of 83%. CEO Zhang Peng put it succinctly: "The bottleneck is compute power, not customer demand."

In a Chinese large model industry still waging price wars for market share, Zhipu's contrarian strategy is underpinned by a rare transparency in its inaugural financial report, distilled into an equation: AGI Commercial Value = Intelligence Ceiling × Token Consumption Scale.

For Zhipu, this represents a complete strategic operating system dictating R&D priorities, pricing strategies, and trade-offs between market share and profitability. Globally, OpenAI frames narratives in safety reports, Anthropic builds its brand through "Responsible Scaling," and Google embeds AI economics deep within group financials. In this context, Zhipu stands out as one of the few AI companies worldwide that dares to quantify its entire commercial logic.

The "Intelligence Ceiling" represents Zhipu's controllable variable. GLM-5.1, released April 8, became the first model to surpass Claude Opus 4.6 on SWE-bench Pro—a benchmark closely resembling real-world software development. More notably, its "long-duration task" capability enables 8-hour continuous autonomous operation, including planning, execution, testing, strategy shifting when stuck, and self-repair after errors, ultimately delivering engineering-grade results.

"Token Consumption Scale" is the dependent variable Zhipu expects to amplify with rising intelligence ceilings. The 2026 explosion of Agentic toolchains like OpenClaw validated this logic's short-term elasticity: complex tasks involve hundreds of tool calls and thousands of internal reasoning steps, consuming tokens at 10-100x ordinary conversation rates, driving exponential (not linear) growth in total calls.
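Written out, the relationship between the two terms might look like the following. The symbols V, I, and T are illustrative shorthand, not notation from Zhipu's report, and the assumption that token consumption rises with the intelligence ceiling is the company's expectation rather than an established fact.

```latex
% V: AGI commercial value, I: intelligence ceiling, T: token consumption scale
V(I) = I \times T(I), \qquad \frac{dT}{dI} > 0
\quad\Longrightarrow\quad
\frac{dV}{dI} = T(I) + I\,\frac{dT}{dI} > T(I)
```

Under that assumption, each gain in capability pays off twice: once directly, and once through the extra token consumption it is expected to unlock.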

On paper, this logic appears self-contained. But in business, equation elegance never equals victory. Zhipu must answer: In an API market with near-zero switching costs, can model capability gaps alone establish true pricing power? Especially when technological leadership windows inevitably shrink, how will the company sustain its ambitious growth expectations?

No "Die-Hard Fans" for Large Models

The correct starting point for understanding Zhipu's pricing power claims lies not in its model strength but in market structure—which fundamentally rejects traditional pricing power.

Oracle has sustained database premiums for decades through fear of migration: once millions of businesses embedded its logic deep in their own systems, switching suppliers meant multi-year engineering cycles and hundreds of millions in integration risk. Such pricing power stems from irreversible switching costs that accumulate over time.

API economics demolishes this moat. Switching large model API providers typically requires modifying interface endpoints and adjusting parameters, tasks engineers can complete in a single afternoon. This means the only sustainable source of pricing power in large model API markets is the model capability gap itself. This is the bedrock assumption of Zhipu's equation and also its most vulnerable logical point.

From price-war discounts of up to 90% to cumulative API price hikes of 83%, Zhipu executed a pricing reversal within a single fiscal year that confounded the market. A historical parallel exists: after the dot-com bubble burst in 2001, Salesforce was among the few SaaS companies that held subscription pricing while competitors slashed rates. Ultimately, its customer retention and net revenue retention (NRR) rates outperformed those of companies that compromised.

The core insight: Price anchors user expectations of product value. Premature discounting destroys this anchor, permanently empowering clients in renewal negotiations. Zhipu's reverse price hikes follow similar execution logic.

But this analogy has a critical boundary. Salesforce's pricing power ultimately derives from CRM data stickiness: sales histories, client relationships, and opportunity records accumulate within the platform over time, increasing—not decreasing—migration costs. API markets exhibit the opposite structure: higher technical standardization drives switching costs toward zero.
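To make the near-zero switching cost concrete, here is a minimal sketch of what changing vendors might look like in application code. The endpoints, model names, and the `ProviderConfig` and `build_request` helpers are all hypothetical, and the request shape assumes both vendors expose a similar chat-completion style interface; no real API is called.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProviderConfig:
    """Everything that changes when swapping one chat-style API for another."""
    base_url: str        # hypothetical endpoint, not a real service
    model: str           # hypothetical model identifier
    temperature: float   # sampling parameter that may need retuning


def build_request(cfg: ProviderConfig, prompt: str) -> dict:
    """Assemble a chat-completion style request; no network call is made."""
    return {
        "url": f"{cfg.base_url}/chat/completions",
        "json": {
            "model": cfg.model,
            "temperature": cfg.temperature,
            "messages": [{"role": "user", "content": prompt}],
        },
    }


# Two hypothetical vendors: only the config object differs.
VENDOR_A = ProviderConfig("https://api.vendor-a.example/v1", "model-a", 0.7)
VENDOR_B = ProviderConfig("https://api.vendor-b.example/v1", "model-b", 0.5)

request_a = build_request(VENDOR_A, "Refactor this function.")
request_b = build_request(VENDOR_B, "Refactor this function.")
```

The application logic and the prompt are untouched. In this simplified picture the migration is a one-line config change, which is why nothing comparable to CRM data lock-in accumulates on the API side.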

Thus, in the large model world, technological moats often expire faster than startups can execute strategic plans. Zhipu's current pricing power resembles more a time-limited pass than a structural moat.

Take GLM-5.1: Its SWE-bench Pro superiority over Claude Opus 4.6 marks China's entry into the global top tier for open-source model capabilities. Yet historical data shows such leadership windows continuously shrinking. When GPT-4 launched in March 2023, its capability gap seemed insurmountable. By mid-2024, open-source models like Llama 3 and Mistral nearly matched its performance across most benchmarks—a compression period under 18 months. Given China's open-source ecosystem iteration density, compression will only accelerate. DeepSeek, Qwen, and now GLM-5.1 itself exemplify this trend. This implies any vendor's pricing power built on benchmark leadership may have quarterly—not annual—validity.

What priorities guide enterprise clients when purchasing API services? Zhipu's revenue structure offers a counterintuitive answer. In 2025, its on-premises deployment business (private deployment for enterprises) generated RMB 534 million, accounting for 73.7% of total revenue, still the dominant income source.

Much of its client stickiness derives from the integration costs and operational dependencies created by private deployments rather than from pure API capability gaps. This is particularly true of its long-standing government and enterprise relationships built through on-premises services, which is classic switching-cost logic rather than the "intelligence ceiling" logic assumed in its formula.

However, Zhipu's gamble rests on a solid fulcrum: Rising task complexity may fundamentally alter API market pricing economics, transforming "capability gaps" from substitutable to irreplaceable.

GLM-5.1's "long-duration task" capability—8-hour continuous autonomous operation—represents a qualitative, not quantitative, difference from minute-level chat models. In long-duration scenarios, gaps in contextual consistency, self-correction, and local optimum escape amplify over time. A model with minor short-conversation differences may produce drastically divergent results over 8-hour continuous execution.

This suggests that as Agentic applications scale, the functional gap between "good enough" and "best" may shift from imperceptible to unacceptable, giving capability gaps genuine switching costs for the first time.

But this logic requires Agentic scenarios to rapidly become mainstream commercial battlegrounds—the core bet underlying Zhipu's equation.

The equation itself cannot prove this premise; it merely offers internally consistent strategic inferences under that assumption.

Who Captures the Token Traffic Bonus?

The second term in Zhipu's equation is never a solo battlefield. In this war, Zhipu's position proves far more complex than its benchmark rankings suggest.

In early 2026, China's large model industry witnessed a discontinuous surge in token usage. OpenRouter data shows weekly Chinese large model calls reaching 4.69 trillion tokens in early March, surpassing U.S. volumes for two consecutive weeks, with global top five rankings dominated by domestic models. Driving this surge was mass adoption of Agentic programming toolchains like OpenClaw. The fundamental difference between Agent applications and traditional chat lies in token consumption logic: complex tasks involve hundreds of tool calls and thousands of reasoning steps, consuming 10-100x ordinary conversation volumes per task. Total calls thus shifted from user-scale linear growth to task-complexity-driven exponential expansion. For model vendors, this represents both revenue explosion and soaring compute costs.
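The consumption gap between chat and Agent workloads can be illustrated with a back-of-the-envelope calculation. Every figure below is an assumption chosen for illustration, not a measurement from OpenRouter or any vendor, and the function names are hypothetical.

```python
def tokens_per_chat_turn(prompt_tokens: int, reply_tokens: int) -> int:
    """A single question-and-answer exchange."""
    return prompt_tokens + reply_tokens


def tokens_per_agent_task(tool_calls: int,
                          context_tokens_per_call: int,
                          output_tokens_per_call: int) -> int:
    """Each tool call re-sends context and generates output,
    so consumption scales with the number of calls in the task."""
    return tool_calls * (context_tokens_per_call + output_tokens_per_call)


def total_tokens(users: int, tasks_per_user: int, tokens_per_task: int) -> int:
    """Aggregate volume depends on task complexity, not only on user count."""
    return users * tasks_per_user * tokens_per_task


# Illustrative assumptions only.
chat = tokens_per_chat_turn(prompt_tokens=1_000, reply_tokens=500)
agent = tokens_per_agent_task(tool_calls=100,
                              context_tokens_per_call=800,
                              output_tokens_per_call=200)
multiplier = agent / chat  # falls inside the 10-100x range cited above
```

With these assumed inputs, one Agent task consumes tens of times the tokens of a chat turn. Holding the user base fixed, total volume then moves with `tool_calls`, which is the sense in which task complexity rather than user count becomes the growth driver.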

This demand shock rewrote industry supply-demand equations. Within one week in March 2026, Tencent Cloud, Alibaba Cloud, and Baidu Intelligent Cloud all announced price hikes. In Tencent Cloud's Hunyuan series, prices for some models surged 463%, and multiple previously free beta models moved to commercial billing.

Tencent management described the mechanism precisely on its earnings call: infrastructure capacity was fully booked, so hyperscale providers operating on thin margins had to raise prices once demand rebounded. The structural supply-demand imbalance gave every player room to raise prices, which makes Zhipu's proactive hikes look more reasonable in the industry context.

But its financials revealed another industry challenge: Zhipu's overall gross margin compressed from 56.3% in 2024 to 41% in 2025. Lacking the scale effects of self-built infrastructure, its asset-light model saw compute costs rise linearly with token usage. Price hikes thus became not just an expression of pricing confidence but a necessity for keeping the business logic coherent.
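A short worked example shows why linear compute costs leave price as the main lever. It is a simplified sketch: it treats the 41% overall gross margin as if it applied to a single per-token product and assumes unit compute cost stays constant, neither of which the report confirms. The function names are hypothetical.

```python
def gross_margin(price_per_token: float, cost_per_token: float) -> float:
    """With strictly linear costs, margin is independent of volume:
    (price * n - cost * n) / (price * n) = 1 - cost / price."""
    return 1.0 - cost_per_token / price_per_token


def margin_after_price_hike(current_margin: float, price_increase: float) -> float:
    """New margin when price rises by `price_increase` and unit cost is unchanged."""
    cost_share = 1.0 - current_margin  # cost as a fraction of the old price
    return 1.0 - cost_share / (1.0 + price_increase)


margin_2025 = 0.41                                       # figure from the financials above
after_10pct = margin_after_price_hike(margin_2025, 0.10) # about 0.464
```

Under these assumptions, a 10% price increase lifts the margin from 41% to roughly 46%, while selling more tokens at the old price would leave it at 41% regardless of volume.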

This dilemma plagues all independent Chinese large model companies. Leiphone reported that a leading independent vendor last year deferred RMB 100+ million in training fees to a southern Chinese cloud provider, only repaying debts gradually after commercialization finally succeeded. Not until this year, with Agent and multimodal applications taking root in real production scenarios and independent vendors leveraging overseas markets and B-end implementations, did they begin breathing easier.

Understanding this underlying resource anxiety clarifies why Zhipu and MiniMax, which went public around the same time, pursued a textbook strategic divergence. Both companies started from similar positions: unit economics were positive, but the business as a whole still spent more than it earned, with competitive pressure dictating the pace of spending.

MiniMax chose open-source distribution and global consumer reach. It topped OpenRouter rankings for five consecutive weeks, with monthly calls reaching 6.9 trillion tokens, 2.6x Zhipu's 2.7 trillion. Its latest financials show the gap between R&D growth and revenue growth narrowing for the first time, indicating that platform scale effects are taking hold. This follows the classic platform logic: trading low marginal costs for market coverage and betting that scale will trigger network externalities.

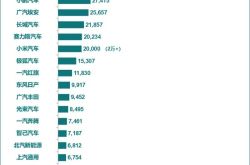

Zhipu opted for a premium strategy, raising prices in the domestic enterprise market while locking token consumption into high-complexity, high-reliability productivity scenarios, prioritizing high ARPU over maximum call volume. Enterprise-grade validation appears evident short-term: Nine of China's top ten internet companies now deeply integrate GLM, including ByteDance, Alibaba, and Tencent, positioning Zhipu as infrastructure for large firms' intelligent transformation.

Historically, both extreme scale for network effects and extreme capability for B-end premiums have built billion-dollar businesses. The cruelty lies in how, during the same AGI dawn and on the same train, these two strategies cannot both prevail. The dividing line has emerged.

On OpenClaw's call volume rankings, GLM-5 briefly topped the charts but more often ceded leadership to Kimi, MiniMax, MiMo, and Qwen. Kimi K2.5 became OpenClaw's official free primary model thanks to its cost-effectiveness, rapidly accumulating calls; MiniMax staged a strong comeback through multimodal and base model optimizations. Long-duration tasks requiring GLM-5.1's "8-hour engineering capabilities" represent a tiny fraction of the total. The fundamental reason: Agent toolchain call distributions follow a power-law structure similar to internet traffic, with most calls originating from lightweight, high-frequency daily automation requests, while engineering-grade long-duration tasks make up a negligible share. The bulk of traffic therefore falls exactly where Zhipu is weakest: high pricing creates structural resistance in scale competition, and a premium-positioned user base has a far lower call volume ceiling than the daily active user scale of mass-market tools.

The true AGI wave feast belongs to vendors willing to sacrifice margins in price competition to exchange model calls for ecological coverage. Zhipu's premium logic means systematically missing this incremental bonus driven by universal Agent adoption. This represents natural market selection and the inevitable outcome written into Zhipu's equation.

Epilogue

The core proposition underlying Zhipu's equation ultimately returns to the physical laws of technological competition. Tang Jie's statement at the AGI Frontiers Summit bears full quotation: "Large models now compete primarily on speed. Maybe if our code is correct, we'll advance further—but failure could erase six months of progress."

In the large model arena, capability iteration speeds now systematically outpace the solidification of competitive advantages. GLM-5.1's current SWE-bench Pro achievement represents a genuine technological milestone; six months later, it will become a historical footnote rather than a sustained competitive moat. This law confronts all participants equally, regardless of company background or structure.

Zhipu AI's inaugural annual report opens with an equation and closes with a series of open questions: Can a company with annual revenue covering just three months of R&D expenses convert capability premiums into structural moats before technological windows close? Can it transform supply-demand scarcity into long-term client stickiness before compute bottlenecks resolve? Can it complete the leap from "strongest model" to "irreplaceable system" before the next industry price war erupts?

The answers will depend on which term in the equation changes first: whether the lead window of the "Intelligence Ceiling" closes prematurely under open-source competition, or whether the incremental growth of "Token Consumption Scale" in high-end scenarios ultimately fails to break through the scale ceiling of premium positioning.
