Three Truths About the Agent Economy When AI Takes Over Decision-Making Power

02/27 2026

Recently, several partners at Y Combinator recorded a podcast discussing a fascinating phenomenon:

With the rise of OpenClaw, a 'parallel economic system' belonging to Agents is taking shape.

This is not just about efficiency gains—it’s about a change in the actor itself.

In the past, software was merely a tool, and humans were the decision-makers. Whether selecting suppliers, ordering services, or building tech stacks, humans always made the final call.

Now, more and more ordinary users—even those without technical backgrounds—are treating AI Agents as 'avatars,' letting them search, compare, filter, negotiate, and even directly complete subscriptions and deployments.

Agents are no longer just executing instructions; they’re making judgments on behalf of humans within certain boundaries. As this trend expands, structural changes begin to emerge.

First, new 'buyers' have emerged in the software market: the countless agents running in the background. This constitutes an 'Agent Economy' that runs parallel to the human economy.

Second, the focus of infrastructure is shifting. Agents need identities, permissions, and interfaces. Email, account systems, and payment capabilities are transitioning from 'for humans' to 'for agents.' The infrastructure layer around agents is being rebuilt.

Third, interaction models are evolving. When large numbers of Agents begin interacting with one another, they form online communities where agents are the primary actors. They collaborate, exchange information, and even leave transaction records. Behavior no longer revolves entirely around humans.

These changes may be what truly matters in the Agent economy.

/ 01 / Transfer of Decision-Making Power: 'Applications' No Longer Exist

If we rewind a year, the mainstream experience of developer tools was still about 'more advanced auto-completion,' like the debate between Cursor and Windsurf.

Essentially, they were all about improving coding efficiency, but humans still needed to control every key step.

The change brought by Claude Code is that decision-making power is beginning to shift.

A typical scenario: someone runs four or five agent windows simultaneously every night, switching back and forth, but no longer reviews line by line or micromanages.

Humans now spend more time setting goals than inspecting output. Agents behave more like colleagues working in parallel than like tools.

Once this experience takes hold, the impact isn’t limited to engineer efficiency. It spreads outward. Some non-technical CEOs are also using OpenClaw to directly automate entire business processes.

Peter Steinberger once made a key judgment: AI doesn’t just answer questions—it’s beginning to truly 'manipulate the environment.' When models can read files, write code, call APIs, and run command lines, they’re no longer just assistants but task-executing entities.

This doesn’t just mean a lower barrier to development—more importantly, 'how software is made' is changing.

In Peter’s view, AI itself is an entity that can continuously solve problems. Under this structure, 'applications' become less important. The value of many apps is essentially just managing data, reminding you, and recording behavior—functions that can all be absorbed by the agent layer.

Take fitness apps, for example. In the past, you needed a standalone app to record workouts, remind you to check in, and generate plans. Now, agents can understand your goals, automatically track data, and even adjust training plans based on results.

For most people, the key isn’t 'which app to use' but 'whether the goal is achieved.' When goals become central, apps fade into the background.

Thus, products that merely manage data and process reminders are at the greatest risk. What’s harder to replace are products with hardware, sensors, and offline touchpoints—they connect directly to the real world, not just the data layer.

When the application layer is compressed and models become homogeneous, what’s left as a moat?

The answer shifts to 'personal data.'

A key advantage of OpenClaw is its emphasis on data localization and the long-term accumulation of personal memory. By keeping data local, it builds a continuously growing personal memory in which your historical behavior, preferences, and decision-making style are all captured.

Peter also mentioned the concept of a 'soul file.' We can think of it as a set of core values and behavioral principles that define how this AI interacts with you, how it resolves conflicts, and what it favors when faced with choices.

It’s the agent’s 'personality setting' and 'principle framework,' determining tone, style, and even decision-making logic.
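
As a rough illustration only, a soul file can be pictured as a small data structure: a tone plus an ordered list of priorities used to settle conflicts between options. The sketch below is hypothetical. The `SoulFile` class, its fields, and the `choose` method are assumptions made for this example and do not describe OpenClaw's actual file format.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class SoulFile:
    """Hypothetical 'soul file': a tone plus priorities, highest first."""
    tone: str
    priorities: List[str]

    def choose(self, options: Dict[str, List[str]]) -> str:
        """Pick the option that serves the highest-ranked priority.

        `options` maps an option name to the priorities it serves.
        Ties on one priority are broken by the next priority in the list.
        """
        def score(served: List[str]) -> Tuple[int, ...]:
            # 0 means "serves this priority", 1 means "does not";
            # tuples compare left to right, so earlier priorities dominate.
            return tuple(0 if p in served else 1 for p in self.priorities)

        return min(options, key=lambda name: score(options[name]))


# Illustrative values only.
soul = SoulFile(tone="concise", priorities=["privacy", "cost", "speed"])
options = {
    "cloud_sync": ["speed"],
    "local_only": ["privacy", "cost"],
}
print(soul.choose(options))  # prints "local_only"
```

The ordered list is the simplest way to express "what wins in a conflict"; a real system would likely need something richer, such as weights or free-text principles interpreted by the model.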

When apps become lighter and models converge, personal memory and value frameworks may become the new core assets.

/ 02 / Feeding Information to AI Agents: The First Golden Track of the Agent Economy

As the Agent economy rises, a fundamental change occurs: tools are no longer chosen only by humans—agents are becoming new 'decision-makers.'

How were developer tools chosen in the past? Mainly through human networks—word-of-mouth in developer communities, GitHub trending lists, tech blog recommendations, and offline conference exposure.

These mechanisms still exist today, but they’re being supplemented by a new distribution path: default recommendations by agents.

More and more development decisions aren’t made by a CTO or engineer comparing options one by one—instead, agents automatically select tools, services, and interfaces in the background based on context. Who gets called by default is more likely to become the 'standard stack.'

You could even think of it this way: the countless agents running in the background now form a new 'buyer group' in the software market, one that operates alongside human buyers.

Agents make decisions, select tools, and choose service providers on behalf of humans. Their choices directly influence order flow and ecosystem dynamics.

But interestingly, agents aren’t naturally 'optimal decision-makers.' They’re also influenced by information structure.

For example, Claude Code sometimes defaults to older versions of tools (like Whisper v1) instead of faster, cheaper alternatives. The reason isn’t necessarily a lack of capability—it might just be that the older tool’s documentation is easier to parse, more structured, and has more complete examples.

This reveals two signals:

First, agents’ 'selection mechanisms' are still in their early stages and haven’t been optimized to the extreme.

Second, this is precisely an opportunity for entrepreneurs. If agents judge based on documentation structure, interface clarity, and example completeness, then product design must upgrade from 'human-friendly' to 'agent-friendly.' Whoever is easier for agents to read and call is more likely to become the default answer.

The first beneficiaries may be companies that can provide clear information to agents.

One clear signal is that documentation is the first place where this change is showing up.

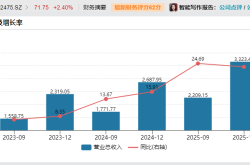

Over the past 12 months, the number of newly created databases (like Postgres) has grown significantly. On one hand, it’s because more people are building apps; on the other hand, agents are automatically selecting tech stacks in the background, driving synchronized growth in demand for databases, hosting, and development foundations.

Take Supabase as an example. One reason it’s more likely to become the default choice is that its documentation is clear, structured, and has directly executable examples. For agents, 'ease of parsing and calling' often matters more than brand awareness.

Resend is another typical case. The founder of Resend, a Y Combinator Winter 2023 batch company, discovered that when users asked ChatGPT or Claude 'how to send emails in a web app,' the model often defaulted to recommending Resend.

He further found that ChatGPT became one of the top three channels for customer conversion. After realizing this, the team actively optimized their documentation to make it more 'Agent-friendly.'

'Agent-friendly documentation' here means that Resend collected the questions humans or agents are likely to ask, arranged them in a question-and-answer format, and gave highly structured, bullet-point answers.

In addition, every example includes clearly structured code snippets that agents can parse directly.
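
To make the idea concrete, here is a minimal sketch of what a question-and-answer documentation entry might look like as data, and how it could be rendered into a predictable layout. Everything here is an assumption for illustration: `DocEntry`, `render_entry`, and the `send_email` call in the sample snippet are hypothetical names and are not Resend's actual documentation format or API.

```python
from dataclasses import dataclass
from typing import List

FENCE = "`" * 3  # Markdown code-fence marker, built here to keep this listing simple


@dataclass
class DocEntry:
    """One question a human or agent might ask, with a structured answer."""
    question: str
    answer_points: List[str]
    code: str = ""       # optional runnable snippet
    code_lang: str = ""  # language tag for the snippet


def render_entry(entry: DocEntry) -> str:
    """Render an entry as: heading, bullet-point answer, optional fenced code."""
    lines = [f"## {entry.question}", ""]
    lines += [f"- {point}" for point in entry.answer_points]
    if entry.code:
        lines += ["", f"{FENCE}{entry.code_lang}", entry.code.rstrip(), FENCE]
    return "\n".join(lines) + "\n"


# Hypothetical entry; send_email is a placeholder, not a real library call.
entry = DocEntry(
    question="How do I send an email from a web app?",
    answer_points=[
        "Create an API key in the dashboard.",
        "Call the send endpoint with recipient, subject, and body.",
    ],
    code='send_email(to="user@example.com", subject="Hello", body="Hi there")',
    code_lang="python",
)
print(render_entry(entry))
```

Because every entry follows the same shape, an agent scanning the page can locate the question, the answer, and the snippet without guessing at the layout.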

Besides Resend, Mintlify is another notable case.

Mintlify originally built better API documentation tools, which used to be a 'nice-to-have' for developer experience. Now it may become a 'must-have' for developer tool companies, because documentation needs to be optimized not just for human readers but also for agents to parse and call.

Given the 'exponential growth in agent decision-making,' even a 5% improvement in documentation parsability could lead to an order-of-magnitude difference in distribution.

/ 03 / Reducing Friction Costs: Agent Infrastructure Begins to Rise

When agents start doing things on behalf of humans, a new infrastructure need emerges: agents need independent identities and permissions.

Today, a wave of startups dedicated to serving AI agents has emerged.

For example, Agent Mail is a company that specifically creates inboxes for AI agents.

Traditional email (like Gmail) is designed for humans. To prevent spam and bot abuse, it intentionally raises barriers to automation: risk control checks, captchas, and access frequency limits stack up.

What humans see as safety mechanisms become friction costs for agents.

If AI agents are truly to complete registration, communication, verification, and transaction processes on behalf of humans, they need an email interface that 'won’t get banned for automation.'

And Agent Mail provides identity infrastructure for agents. As agents become new economic participants, the identity layer must be rebuilt—and email happens to be the first brick in this system.

Similar issues extend to: agent phone numbers (akin to 'Twilio for agents'), agent account systems, permission systems, payment systems, and agents’ interfaces with the real world: booking restaurants, making phone calls, or even hiring humans to wait in line offline.

In other words, the core of Agent economy infrastructure is continuously reducing friction costs.

This logic is also reflected in OpenClaw’s technical roadmap. To many, MCP might be 'the standard interface for the agent era.'

But Peter Steinberger prefers another path: letting agents directly use humanity’s existing toolchains instead of reinventing a whole set of agent-specific protocols.

In his view, many so-called 'new interfaces designed for agents' essentially add abstraction layers and complexity.

Instead of constructing a ritualized agent protocol, it’s better to let agents directly enter the existing ecosystem—using CLI, calling Unix tools, reading and writing files, and running scripts. Unix itself is a system designed for composability, and agents, as programs, can naturally fit in.

While agents are still evolving rapidly, reducing abstraction layers and artificial constraints means faster feedback loops. If agents can directly call the CLI, they can combine millions of tools in the existing world without waiting for 'agent-specific interfaces' to become widespread.
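
The composability argument can be sketched in a few lines. The example below is an assumption-laden illustration, not how OpenClaw or any particular agent is implemented: `run_pipeline` is a hypothetical helper that chains existing command-line tools the way a shell pipe would, using only Python's standard `subprocess` module.

```python
import shutil
import subprocess
from typing import Sequence


def run_pipeline(stages: Sequence[Sequence[str]], input_text: str = "") -> str:
    """Run each command in order, feeding one stage's stdout to the next stage's stdin.

    Similar in spirit to a shell pipe (a | b | c), but no shell is invoked:
    each command is passed as an argument list and is never re-parsed.
    """
    data = input_text
    for argv in stages:
        result = subprocess.run(
            list(argv),
            input=data,
            capture_output=True,
            text=True,
            check=True,  # raise CalledProcessError if a stage exits non-zero
        )
        data = result.stdout
    return data


if __name__ == "__main__":
    # Hypothetical task: count which tools appear most often in a log.
    log = "git\ncurl\ngit\njq\ngit\ncurl\n"
    stages = [["sort"], ["uniq", "-c"], ["sort", "-rn"]]
    # Only run the demo if the Unix tools are actually installed.
    if all(shutil.which(stage[0]) for stage in stages):
        print(run_pipeline(stages, log))
```

Nothing in the pipeline is agent-specific: `sort` and `uniq` were written decades before agents existed, yet a program can combine them without any new protocol.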

In other words, the growth speed of the Agent economy often depends on whether friction is low enough.

/ 04 / Swarm Intelligence: Replacing Superintelligence

Today, we can already see some prototypes of the Agent economy: agents replying to each other, collaborating to complete tasks, and even leaving real 'transaction records.' In a sense, it resembles an early social network more than a polished product.

This also raises a broader discussion: What form will AI take in the future?

In the past few years, the dominant narrative leaned toward 'centralized superintelligence': a single model with ever-larger parameter counts, ever-more concentrated computing power, and ever-stacking capabilities, as if scale alone could approach a 'God’s-eye view' intelligence.

But now, another path is emerging: swarm intelligence.

Human civilization advances not because of an all-powerful individual but because of a highly specialized and collaborative network. No single person can independently build an iPhone, land on the moon, or sustain modern society.

What truly generates productivity is specialized division of labor, collaboration mechanisms, and the recording and accumulation of knowledge.

If we map this logic to the AI world, the future form of intelligence may not be a supermodel but a network of collaborating intelligent agents.

Multiple relatively inexpensive models, each taking on different roles, collaborate through interfaces to complete complex tasks. They form divisions of labor, shared memory, and task coordination, operating like a society.
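
A toy sketch of this division of labor is shown below. It is purely illustrative: the three role functions are hypothetical stand-ins for calls to separate, inexpensive models, and the shared memory is just a dictionary that each role reads from and writes to.

```python
from typing import Callable, Dict, List

Memory = Dict[str, str]
Role = Callable[[Memory], None]


def researcher(memory: Memory) -> None:
    # Stand-in for a model that gathers information about the task.
    memory["notes"] = f"facts about {memory['task']}"


def writer(memory: Memory) -> None:
    # Stand-in for a model that drafts output from the researcher's notes.
    memory["draft"] = f"report based on {memory['notes']}"


def reviewer(memory: Memory) -> None:
    # Stand-in for a model that checks the draft against a simple rule.
    memory["verdict"] = "approved" if "facts" in memory["draft"] else "rejected"


def run_swarm(task: str, roles: List[Role]) -> Memory:
    """Run each role in order; all roles share one memory dictionary."""
    memory: Memory = {"task": task}
    for role in roles:
        role(memory)
    return memory


result = run_swarm("database options", [researcher, writer, reviewer])
print(result["verdict"])  # prints "approved"
```

The coordination here is a fixed sequence; the point is only that each role stays small while the shared record of what happened grows.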

From this perspective, 'agent communities' like Moltbook may resemble the prehistoric stage of civilization: chaotic and unstable, but with one crucial property. Interactions are being recorded, and collaboration is accumulating. A history between agents is forming.

This path is clearly different from the past few years’ pursuit of 'centralized superintelligence.' It emphasizes organizational methods over monolithic capability.

Even if each AI is a general intelligence, many of them can still be organized into 'specialized intelligent swarms.' As in human society, division of labor and collaboration can produce capabilities far exceeding the limits of any single individual.

What’s truly exciting isn’t just that models are getting stronger—it’s that intelligence is beginning to operate as a network.