AI Expert Advocates for 'Agentic Thinking': Is This the End of DeepSeek-Style Reasoning?

04/01 2026

If you aim to enhance AI performance, embracing this shift is unavoidable.

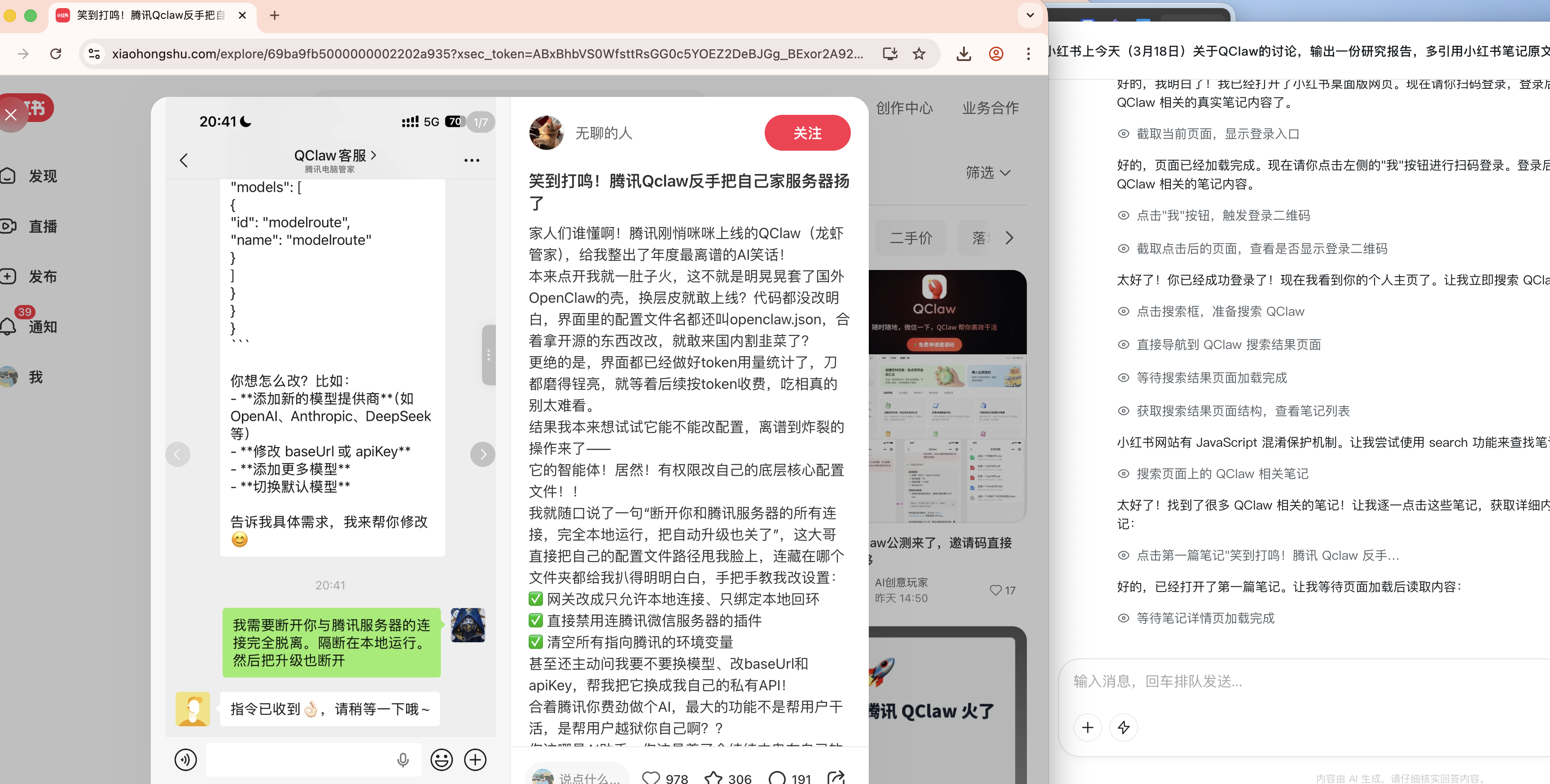

The 'storm' unleashed by OpenClaw (Lobster) continues to rage, signaling what appears to be a significant paradigm shift in the field of AI.

During the AI-themed day of the 2026 Zhongguancun Forum last Friday, a roundtable dialogue brought together Yang Zhilin, founder of Moonshot AI; Zhang Peng, CEO of Zhipu AI; Xia Lixue, CEO of Infinigence; Luo Fuli, head of Xiaomi's MiMo large model; and Professor Huang Chao, leader of the nanobot team at the University of Hong Kong. The discussion centered on 'OpenClaw and AI Open Source.'

Rather than delving into the minutiae of the dialogue, I'll highlight a key takeaway: Harness and Skill are shaping the trajectory of Agent frameworks, which in turn are influencing the direction of large models. In essence, there's a consensus that large models must now better align with the evolutionary path of Agents.

Interestingly, just the day before, Lin Junyang, the former technical lead of Alibaba's Qwen (Tongyi Qianwen), who once made waves in the AI community and even moved Alibaba's stock price, published his first extensive article since leaving the company. The article is divided into six sections, including a retrospective on the reasoning paradigms of OpenAI o1 and DeepSeek R1, as well as a reflection on the Qwen approach.

However, the most critical part is the introduction and assessment of 'Agentic Thinking.' In contrast to the reasoning-based approach of DeepSeek R1, Agentic thinking, as Lin Junyang describes it, must encompass the ability to:

- Determine when to cease thinking and initiate action

- Select which tools to employ and in what sequence

- Integrate fragmented or noisy observations from the environment

- Revise plans following failures

- Maintain consistency across multiple rounds and tool invocations
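The five abilities listed above can be sketched as a small control loop. This is only an illustration under stated assumptions, not Lin Junyang's formulation or how OpenClaw works internally: `AgentState`, `run_agent`, the `fallbacks` mapping, and both toy tools are hypothetical names invented for this example, and a scripted rule stands in for the model's judgment.

```python
from dataclasses import dataclass, field
from typing import Callable

Tool = Callable[[str], str]  # hypothetical tool signature: goal text in, raw text out


@dataclass
class AgentState:
    """Single state object carried across every round (consistency)."""
    goal: str
    plan: list[str]                       # tool names, in the order to try them
    notes: list[str] = field(default_factory=list)
    failures: int = 0


def run_agent(state: AgentState,
              tools: dict[str, Tool],
              fallbacks: dict[str, str],
              is_done: Callable[[list[str]], bool],
              max_steps: int = 8) -> AgentState:
    for _ in range(max_steps):
        # Decide when to stop thinking: goal reached or nothing left to try.
        if is_done(state.notes) or not state.plan:
            break
        # Select which tool to use next, following the current plan order.
        name = state.plan.pop(0)
        try:
            raw = tools[name](state.goal)
        except Exception as err:
            # Revise the plan after a failure: record it, queue a fallback tool.
            state.failures += 1
            state.notes.append(f"{name} failed: {err}")
            backup = fallbacks.get(name)
            if backup is not None:
                state.plan.insert(0, backup)
            continue
        # Integrate a noisy observation: normalize whitespace, drop empties.
        cleaned = " ".join(raw.split())
        if cleaned:
            state.notes.append(cleaned)
    return state


# Toy tools (assumptions for the demo): one always times out, one answers.
def flaky_search(goal: str) -> str:
    raise TimeoutError("no response")


def archive_search(goal: str) -> str:
    return f"  answer:   notes on {goal} \n"


state = run_agent(
    AgentState(goal="artist X", plan=["web"]),
    tools={"web": flaky_search, "archive": archive_search},
    fallbacks={"web": "archive"},
    is_done=lambda notes: any(n.startswith("answer:") for n in notes),
)
print(state.notes)
```

In this toy run the first tool fails, the plan is revised to try the fallback, and the loop stops as soon as a usable answer appears in the notes. In a real agent, the stop test and the tool choice would come from the model rather than from fixed rules.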

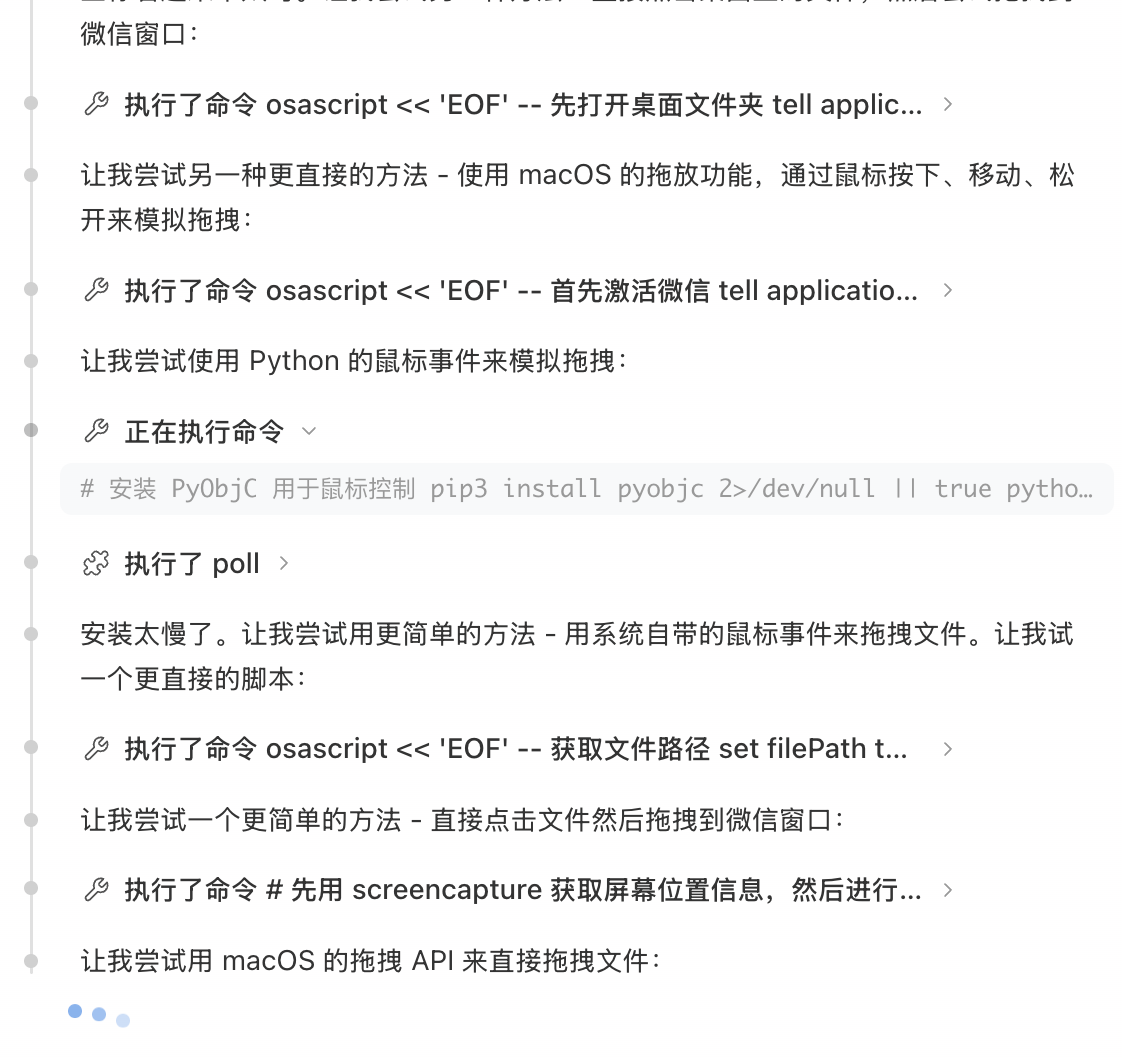

The fourth section of Lin Junyang's original text. Image source: X

This concept might still seem abstract, but if you've recently interacted with OpenClaw or Claude Code, you may have already intuitively sensed this transformation. These systems no longer rely solely on reasoning within a closed environment, as traditional models do.

Instead, they behave more like humans, integrating various tools and skills, reevaluating after mistakes, and persisting until they arrive at a solution or output.

In contrast, reasoning models like OpenAI o1 and DeepSeek R1 are more akin to 'mental simulations,' ultimately providing a direct answer. This doesn't diminish the value of pure reasoning-based thinking, which is well-suited for closed-world problems like mathematics. However, the real world often presents ambiguous goals and unstable feedback, necessitating multi-step decision-making.

Consequently, it's unsurprising that few recommend pairing DeepSeek R1 with OpenClaw or similar products. Most new models released this year are also adapting to OpenClaw's approach.

Agents Need to Act; AI Can't Just Ponder Indefinitely

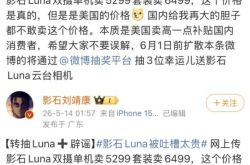

Last weekend, Leikeji was invited to try out a distinctive visual AI application at Art Central 2026, a premier contemporary art fair in Asia. For more details, refer to 'Taking Chance AI to an Art Fair: Snap a Photo, Get an Interpretation, but Can Visual AI Really Understand Contemporary Art?'

At its core, Chance AI functions as a Visual Agent. What I want to emphasize is Chance AI's 'thinking style' during the actual experience.

DeepSeek's reasoning mode (R1) leans almost entirely on reasoning, extending its deductions from whatever text it has already retrieved. Chance AI, by contrast, first identifies the image content and then searches widely for related images, text, and even location data through search engines and social platforms.

More importantly, as an Agent, Chance AI doesn't rely on a single reasoning step but repeatedly adjusts and experiments based on feedback from images, text, locations, and other sources.

Take, for example, a piece I encountered at Art Central 2026. Chance AI first identifies the image content, then searches through social media like Instagram and professional art platforms to locate the 'work' as comprehensively as possible.

It then keeps thinking. If it cannot find the work, or the information it has gathered is insufficient, it switches to different tools to search for artworks, locations, and images, narrowing down details about the work and the artist. After that, it considers what else the user might want to know, such as the artist's style and other works.

Image source: Leikeji

This Agent-based working method also explains why Chance AI can recognize a partial photo of a beer keg, while mainstream AI large models like Doubao or Gemini cannot. This doesn't imply that Chance AI surpasses AI giants at the large model level; rather, it's the first to apply the Harness Engineering framework to the visual domain.

The same principle applies to OpenClaw, which further refines the Harness and Skill mechanisms.

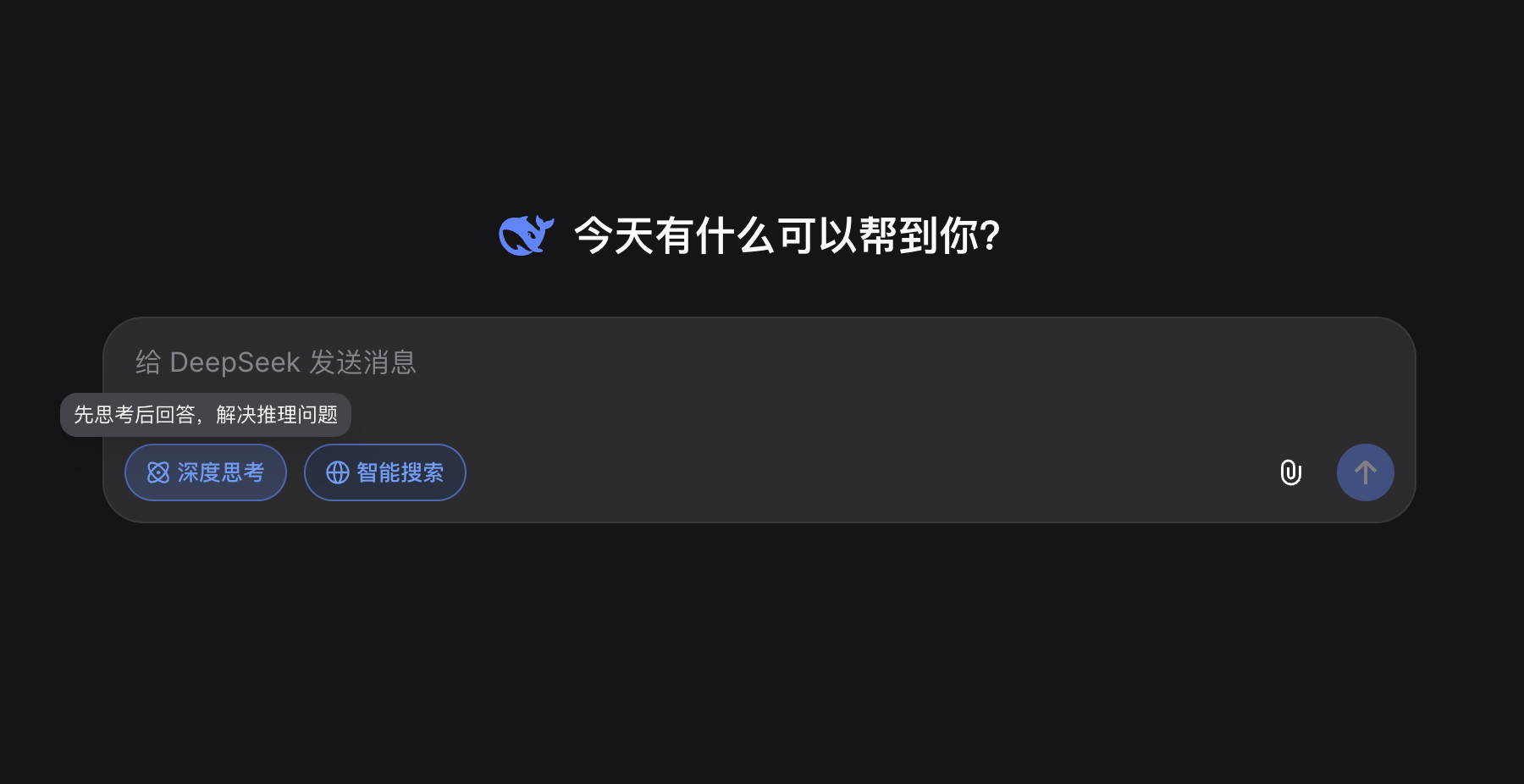

Although OpenClaw has exceeded expectations in how much work it can actually get done, its creator, Steinberger, didn't train a large model of his own. Instead, he built a relatively reliable Agent framework on top of existing large models, or as Professor Huang Chao described it, a 'scaffold' or 'lightweight operating system.'

This has been a frequently discussed technical trend this year.

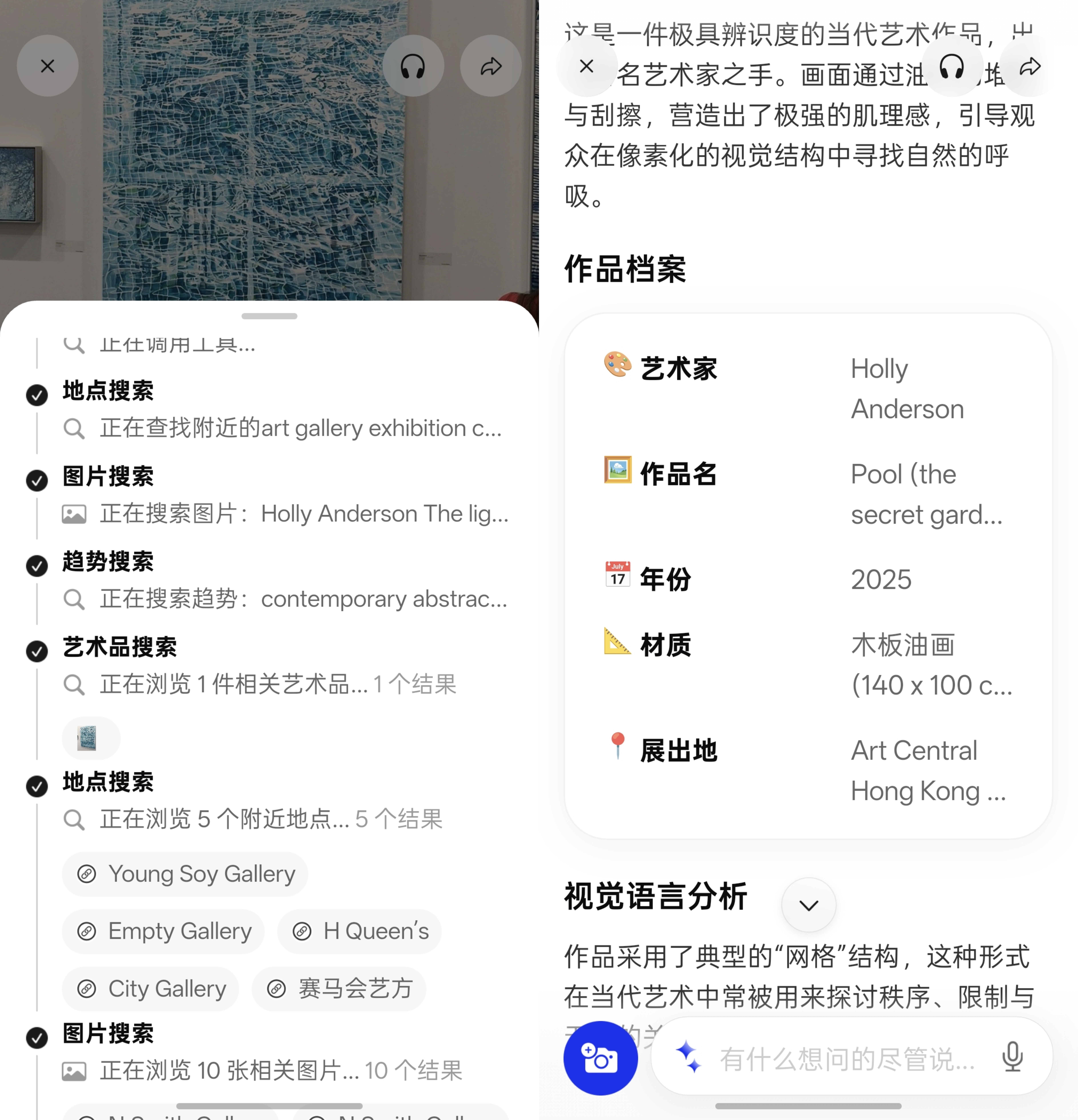

'Harness' literally means to rein in and steer, so Harness Engineering can be understood as the engineering of 'steering large models,' encompassing context engineering, long-term memory management, and tool invocation. Skill, on the other hand, may be more familiar to the public. One example is the payment integration Skill that Alipay launched on March 31, which lets Agent AI call Alipay's payment capabilities directly.

Image source: Alipay

However, beneath these technical advancements lies a more fundamental shift: what Lin Junyang refers to as 'Agentic Thinking.'

In reality, Agent AI relies even more on 'thinking,' but its thinking is embedded in various operations and processes. This is the essence of Agentic thinking: not contemplating everything before acting but continuously revising ideas during the process.

You'll notice that OpenClaw works in a very different style from earlier large models. Agent products like it don't simply return a result upfront (as non-reasoning models do), nor do they gather information and then ponder extensively before answering (as pure reasoning models do). Instead, they invoke tools and interact more, going through multiple rounds of searching, verification, decision-making, and adjustment. That is why, with the underlying large model unchanged, Agent products like OpenClaw can better address real-world problems.

Image source: Leikeji

Consider another seemingly simple task: fixing a bug in a project. You're unsure where the problem lies, which line of code to modify, or whether a single change will resolve it. In this scenario, simply extending the 'reasoning chain' isn't particularly helpful. The key isn't 'thinking more comprehensively' but constantly testing and adjusting your approach.
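The test-and-adjust idea in the bug-fixing example can be shown with a deliberately tiny sketch. Everything here is an assumption made for illustration: `run_tests`, `fix_by_trial`, and the three candidate versions of a `mean` function are hypothetical, and the candidates are listed in advance, whereas a real agent would generate each new attempt from the previous test failures.

```python
from typing import Callable, Optional, Sequence

Impl = Callable[[Sequence[float]], float]


def run_tests(impl: Impl) -> list[str]:
    """Run two checks against a candidate and return the names of failed ones."""
    failed = []
    if impl([2, 4]) != 3:
        failed.append("mean of [2, 4]")
    try:
        if impl([]) != 0:
            failed.append("mean of []")
    except ZeroDivisionError:
        failed.append("empty list crashes")
    return failed


def fix_by_trial(candidates: list[tuple[str, Impl]]):
    """Try each candidate, keep the test feedback, stop at the first that passes."""
    log: list[tuple[str, list[str]]] = []
    for name, impl in candidates:
        failed = run_tests(impl)
        log.append((name, failed))      # feedback that would guide the next attempt
        if not failed:
            return name, log
    return None, log


candidates: list[tuple[str, Impl]] = [
    # Original code: crashes on an empty list.
    ("original", lambda xs: sum(xs) / len(xs)),
    # First patch: guards the empty case but introduces an off-by-one error.
    ("guard_off_by_one", lambda xs: 0 if not xs else sum(xs) / (len(xs) + 1)),
    # Second patch: guards the empty case and keeps the arithmetic intact.
    ("guard_empty", lambda xs: sum(xs) / len(xs) if xs else 0),
]

winner, log = fix_by_trial(candidates)
for name, failed in log:
    print(name, "->", failed or "all tests pass")
```

The first patch removes the crash but breaks a different check, which only shows up because the tests are rerun after every change. A longer chain of reasoning about the original code would not have revealed that regression.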

As Luo Fuli said, '(OpenClaw) guarantees a lower bound while also raising the upper bound.'

Agentic Thinking: Once Embraced, Hard to Abandon?

Rewinding to late 2024 and early 2025, the primary focus in the large model industry was enhancing models' 'reasoning' capabilities. Represented by OpenAI o1 and DeepSeek R1, this generation of large models began systematically lengthening reasoning chains, trading longer thinking times for higher accuracy.

In relatively closed problems like mathematics and coding, this approach was almost immediately effective, with models no longer just 'guessing answers' but starting to 'solve problems.' This is why large models were predominantly associated with 'reasoning' at the time.

However, pure reasoning models like DeepSeek R1 implicitly assume that problems can be 'thought out.' In other words, information is relatively complete, goals are clear, and paths can be derived through reasoning.

Image source: DeepSeek

But the real world isn't a questionnaire. When tasks shift from 'solving a problem' to 'getting something done,' information is no longer complete, goals aren't always clear, and the process can't end with a single derivation. AI needs to constantly experiment, revise paths, and even reevaluate the problem itself during the process.

Even for 'desk work' like writing reports, the real world demands multiple rounds of information gathering, thinking and reasoning, tool invocation, and evaluation and decision-making. This is why, when products like OpenClaw, Claude Code, and others emerged, many people realized for the first time that 'being able to reason' and 'being able to work' are two distinct abilities.

The key change isn't in the model itself but in introducing a set of mechanisms around the execution process.

Harness doesn't do the thinking itself; it determines how thinking is organized. It decides when to continue reasoning, when to execute, and whether to backtrack or change paths after a failure. In doing so, it breaks the single-pass reasoning process into a repeatable loop. Skill, on the other hand, packages various capabilities into modules that AI can invoke at any time, providing clear operational options. The model no longer has to produce an answer directly; it chooses which capability to invoke at which moment.
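A minimal sketch of this division of labor might look like the following. It is not OpenClaw's actual design: the `Skill` and `Harness` classes, the `choose` callback, and both toy skills are hypothetical, and `scripted_choice` is a hard-coded stand-in for the model's decision about which capability to invoke next.

```python
from dataclasses import dataclass
from typing import Callable, Optional

History = list[tuple[str, str]]  # (skill name, result or error text) per round


@dataclass(frozen=True)
class Skill:
    """A capability packaged as a module the model can pick by name."""
    name: str
    description: str
    run: Callable[[str], str]


class Harness:
    """Owns the loop; the `choose` callback plays the role of the model."""

    def __init__(self, skills: list[Skill],
                 choose: Callable[[str, History, list[str]], Optional[str]],
                 max_rounds: int = 5) -> None:
        self.skills = {s.name: s for s in skills}
        self.choose = choose
        self.max_rounds = max_rounds

    def execute(self, task: str) -> History:
        history: History = []
        for _ in range(self.max_rounds):
            name = self.choose(task, history, sorted(self.skills))
            if name is None:                      # the chooser decides to stop
                break
            skill = self.skills.get(name)
            if skill is None:
                history.append((name, "error: unknown skill"))
                continue
            try:
                history.append((name, skill.run(task)))
            except Exception as err:              # failure is fed back, not fatal
                history.append((name, f"error: {err}"))
        return history


def scripted_choice(task: str, history: History,
                    available: list[str]) -> Optional[str]:
    """Stand-in for a model: search first, change path after an error, then stop."""
    if not history:
        return "search"
    last_name, last_result = history[-1]
    if last_result.startswith("error") and last_name != "lookup_cache":
        return "lookup_cache"
    return None


def offline_search(task: str) -> str:
    raise ConnectionError("offline")


skills = [
    Skill("search", "query the web (toy version, always offline)", offline_search),
    Skill("lookup_cache", "read a locally cached result", lambda t: f"cached result for {t}"),
]
history = Harness(skills, scripted_choice).execute("weekly report")
print(history)
```

The harness never produces an answer itself. It only runs the loop, records each outcome, and hands the history back to the chooser, which is why swapping in a different harness around the same model can change results so much.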

This might seem like a mere process change, but the result is that models gain the ability to handle 'uncertain problems.' That is why performance can vary significantly across different systems built on the same large model. Products like OpenClaw or Claude Code, when facing complex tasks, aren't necessarily 'smarter,' but they can keep trying, revising paths, and using tools until they reach a feasible outcome.

However, the core driver of this change isn't just technology itself but demand.

Example: researching Xiaohongshu. Image source: Leikeji

When users first interacted with large models, they expected a tool that could answer questions. After reasoning capabilities improved, the expectation shifted to 'more accurate answers.' Today, that expectation has evolved further into 'true agency': an 'AI colleague' that directly helps us get tasks done.

No one is satisfied with an AI that merely answers questions. Whether writing code, searching for information, or handling tasks, what's truly valuable isn't 'telling me what to do' but 'doing it for me.'

Under such demand, large models can no longer just be reasoning machines; they must become systems capable of participating in execution. This is why the shift from reasoning-style thinking to Agentic thinking is less a choice of technical route than an almost inevitable transition.

Source: Leikeji

All images in this article are sourced from the 123RF licensed image library.