Lu Qi Invests in a Post-90s Entrepreneur’s Venture, Backing Three Rounds of Financing

04/15 2026

Ever wondered why robots seem sluggish in their responses? The culprit is their limited "vision."

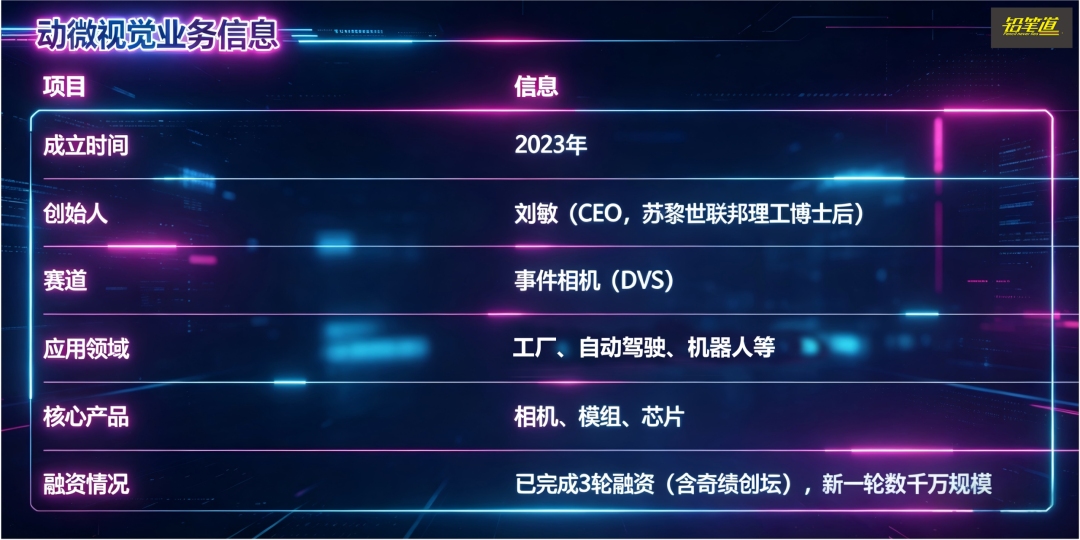

Recently, a promising future unicorn has emerged in this domain, securing three consecutive rounds of financing—including an investment from Lu Qi, founder of MiraclePlus. The company is Chronocam.

Chronocam’s flagship product is an ultra-responsive "camera"—the event camera (DVS). Unlike traditional cameras, it largely ignores stationary objects and instantly captures any movement.

DVS technology was invented by Professor Tobi Delbruck of ETH Zurich. Chronocam’s founder, Liu Min, was one of Delbruck’s doctoral students. In 2025, its first year of commercialization, Chronocam achieved nearly 10 million yuan in revenue.

Pencil News recently spoke with Dr. Liu Min about the opportunities in this sector. Here are the key takeaways:

1. What products does Chronocam offer?

Answer: We specialize in core components for event cameras—cameras, modules, and chips. We do not manufacture complete devices.

2. What are the limitations of traditional cameras?

Answer: Two major flaws: they struggle to capture fast movements clearly and perform poorly in extremely bright or dark conditions.

3. Why pursue this technology now?

Answer: The technology has been maturing for 15 years and is finally ready for commercialization.

4. Which industries face the greatest challenges?

Answer: Industrial automation, autonomous driving, robotics, and smartphone photography, among others.

5. How many companies operate in this field?

Answer: Globally, there are about five or six, mostly founded by students of my advisor.

The following is a self-narrative by Liu Min:

- 01 - A Postdoc Born in 1991

I was born in 1991 in Yuanzhou District, Yichun, Jiangxi. Before returning to China in 2023, I worked in academia at ETH Zurich, from my doctorate through my postdoctoral research.

The product we are developing now is like a highly sensitive "electronic eye"—a new type of event camera (DVS). It focuses solely on "changes," ignoring stationary objects and instantly capturing any movement or changes in lighting.
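The "changes only" principle can be illustrated with a minimal sketch. This is a frame-based emulation for explanation, not Chronocam's implementation: a real DVS pixel reacts asynchronously in hardware rather than by comparing two frames. The function name, the threshold value, and the toy 4x4 scene are all assumptions made for the example.

```python
import math

def events_from_frames(prev, curr, threshold=0.2):
    """Emit (row, col, polarity) for pixels whose log-brightness changed enough.

    polarity is +1 for brightening and -1 for darkening.
    The threshold of 0.2 is an illustrative assumption.
    """
    eps = 1e-3  # keeps log() defined for zero-valued pixels
    events = []
    for r, (prev_row, curr_row) in enumerate(zip(prev, curr)):
        for c, (p, q) in enumerate(zip(prev_row, curr_row)):
            diff = math.log(q + eps) - math.log(p + eps)
            if abs(diff) > threshold:
                events.append((r, c, 1 if diff > 0 else -1))
    return events

# Toy scene: a static 4x4 background where only two pixels change.
prev = [[100.0] * 4 for _ in range(4)]
curr = [row[:] for row in prev]
curr[1][2] = 200.0  # one pixel brightens
curr[3][0] = 40.0   # one pixel darkens

events = events_from_frames(prev, curr)
print(events)  # [(1, 2, 1), (3, 0, -1)]
```

The 14 unchanged pixels produce no output at all, which is the sense in which the sensor ignores stationary objects.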

What problems can it solve for customers? For example, factories can use it to detect product defects.

How did I discover this opportunity?

Because the technology was ready. I have been working on event cameras (DVS), a technology invented by my advisor, Professor Tobi from ETH Zurich, in 2008. By the time I started my doctorate in 2016, nearly a decade had passed, but the technology remained largely confined to the lab—few people knew how to use it, and applicable scenarios were scattered and not yet mainstream.

At the time, I felt it was similar to early CMOS image sensors. They were invented in the 1990s but remained niche until the iPhone’s release in 2007, which triggered a smartphone boom and led to massive shipments of CMOS sensors.

Technological breakthroughs require application scenarios to ignite them.

So when I returned to China in 2023, I felt that event cameras were at the "pre-iPhone" stage—the technology was mature, just waiting for a breakthrough opportunity.

Just as I was preparing to start my venture, MiraclePlus proactively reached out to me and expressed support for this direction. With the technology cycle reaching maturity and early-stage capital willing to take a chance, the two factors aligned, and I returned to China to start my business.

When I spoke with MiraclePlus, I didn’t tell a grand business story. They invest in "people" and "technology"—ETH Zurich’s reputation is strong, the direction is cutting-edge, and the team is well-matched. That was enough.

But I was very clear myself: the ultimate goal is to break into autonomous driving and robotics.

Both fields require vision, but traditional cameras (RGB cameras) currently in use have a major flaw—they are like the "static vision" of the human eye, capable of seeing details but slow to react to rapid changes.

Event cameras excel at this—they only respond to changes and motion, making them particularly suitable for handling sudden situations like "ghost pedestrians."

- 02 - Accelerating Reactions

What pain points existed in the industry before?

The first pain point: factory scenarios.

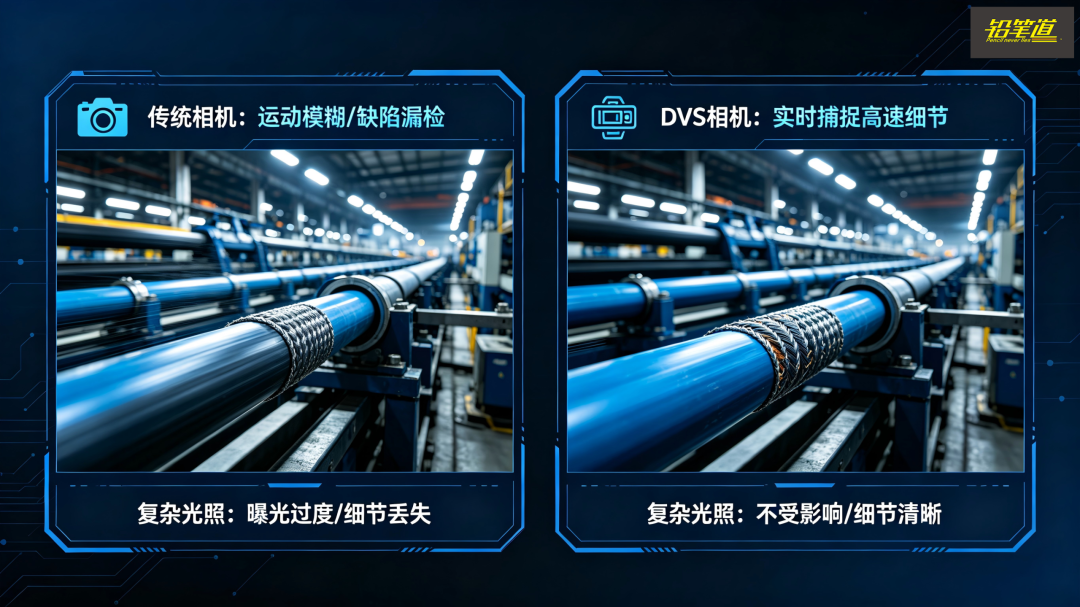

Traditional cameras struggle with two types of tasks in factories.

One is speed. On production lines for optical cables or wires, the speed is extremely high. While traditional cameras are still capturing frames one by one, product defects have already been missed.

DVS, on the other hand, almost instantly detects changes, with a response speed thousands of times faster than traditional cameras, allowing it to capture details during high-speed processes.

The other is complex lighting.

Factories often have strong light, reflections, and alternating bright and dark conditions. Traditional cameras are prone to distortion and missed detections in such environments. However, DVS has a high dynamic range and is more "resistant" to complex lighting, remaining largely unaffected.

The second pain point: autonomous driving, especially sudden events like "ghost pedestrians."

Everyone is talking about vision-based solutions now, such as Tesla’s pure vision approach. I agree that vision can enable autonomous driving, but the problem is that current "vision" systems are not human-like enough.

Human vision actually consists of two systems: one for "seeing clearly," such as identifying details and recognizing objects, and another for "detecting changes," such as noticing sudden movements.

Current RGB cameras are essentially more like the former—they are static-oriented.

DVS fills this gap. It only focuses on changes and motion, instantly detecting who or what has moved or changed.

For example, in autonomous driving, common scenarios like "ghost pedestrians" or sudden appearances of people or objects are difficult for RGB cameras to react to in time, whereas DVS has a clear advantage.

The third pain point: robotics—currently, there are two main issues.

1. Speed. Traditional cameras are not fast enough, causing robots to react slowly.

2. Power consumption. Robots have limited computational resources, but RGB cameras capture and process all frames, generating massive amounts of redundant data, which consumes more computational power and energy.

Current robots process all information without any filtering mechanism.
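A rough sketch of the data-volume argument follows. Every number in it is a hypothetical assumption chosen for illustration (a 640x480 sensor, 1 byte per pixel, 8 bytes per event, 1% of pixels changing in each 1/30-second interval); none of them comes from Chronocam.

```python
def frame_bytes_per_second(width, height, fps, bytes_per_pixel=1):
    """Data a frame camera produces: every pixel, every frame."""
    return width * height * fps * bytes_per_pixel

def event_bytes_per_second(width, height, intervals_per_second,
                           changed_percent, bytes_per_event=8):
    """Data an event stream produces: only the pixels that changed.

    Each event is assumed to carry position, timestamp, and polarity,
    so it costs more bytes than one pixel value.
    """
    changed_pixels = width * height * changed_percent // 100
    return changed_pixels * intervals_per_second * bytes_per_event

# Hypothetical scene: 1% of pixels change in each 1/30 s interval.
frame_load = frame_bytes_per_second(640, 480, 30)
event_load = event_bytes_per_second(640, 480, 30, changed_percent=1)

print(frame_load)               # 9216000
print(event_load)               # 737280
print(frame_load / event_load)  # 12.5
```

Under these assumptions the event stream is 12.5 times smaller. The advantage shrinks as more of the scene moves, since each event is larger than a single pixel value.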

We are currently working on an interesting direction—sports robots. Scenarios like badminton, table tennis, and tennis require extremely fast reactions. The problem with robots now is not inflexibility but "not knowing where to move."

You can move in a second, but only if you first perceive the ball’s trajectory. So essentially, we are solving the problem of robots’ "insufficient perception speed."

The fourth pain point: smartphone photography.

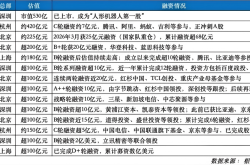

Smartphone manufacturers like Xiaomi, Huawei, and OPPO are actually testing similar technologies. The application scenario is "deblurring."

Blurry photos actually fall into two categories: one is camera shake, which can now be addressed quite well through stabilization; the other is when the subject is moving, such as photographing pets, which DVS can handle better.

- 03 - Global Players: 5-6

Globally, there are very few players in this sector: about five or six. Around two in China and three abroad, mainly in Europe.

Interestingly, the founders of these companies are mostly my senior labmates, either having pursued their doctorates or postdoctoral research at ETH Zurich, all under the same advisor, Professor Tobi. We share the same technological roots.

In recent years, teams from Peking University and Fudan University in China have also started researching this direction and even incubating companies, but overall, those of us from the "ETH Zurich camp" are still the most deeply involved.

We "sibling companies" communicate frequently. Since the industry is still in its early stages, there is no real competition yet—we haven’t reached a life-and-death stage.

Instead, we discuss which scenarios to tackle together and how to promote the technology. We share a consensus—first, let more people know about DVS and grow the market, rather than fighting each other.

Some industries are also paying attention to this sector, such as autonomous driving, robotics, and even consumer electronics.

From an industrial logic perspective, for example, in autonomous driving, if the technology is adopted in vehicles, automakers typically require at least three suppliers. So even if it comes to fruition, it will likely be a shared market among us few companies, rather than a monopoly.

So currently, our relationships are quite good.

- 04 - Which Customers Are Buying

After developing the product, which customers are more willing to pay?

For example, in optical cable inspection, there was no previous solution, or existing ones were ineffective, so many production lines lacked such systems. By introducing our solution, we help them fill this capability gap.

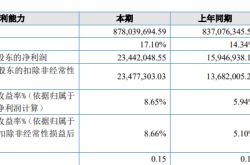

In these scenarios, our gross margin can exceed 80%, and no one else can do it yet. In the future, if the industry grows, prices may drop, but as long as we maintain a lead of "half a step to a full step," we won’t worry about competition.

Of course, there are industries where traditional RGB cameras already perform well, such as 3C (computers, communications, and consumer electronics), semiconductors, and batteries. We won’t compete head-on in those areas.

Why do customers buy? Ultimately, it comes down to one thing: cost-benefit analysis. They care less about how advanced the technology is and more about whether it can help them reduce costs.

For example, in the optical cable industry, their return rate might have been close to 10% in the past, representing significant losses. Our equipment, costing around a hundred thousand yuan, can achieve a defect recognition rate of over 90% with virtually no false positives. Moreover, it identifies and addresses issues before products leave the factory.

The benefits go beyond reducing returns—more critically, it helps avoid losing major clients. For a customer like State Grid, one mistake could mean being cut off entirely, so this represents a very clear ROI.
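The cost-benefit reasoning can be sketched as a simple payback calculation. The return rate (about 10%), equipment cost (about 100,000 yuan), and recognition rate (90%) are taken from the figures above. The annual output value of 5 million yuan is a hypothetical assumption, as is the simplification that every defect caught avoids a loss equal to the product's full value.

```python
def annual_savings(annual_output_value, return_rate, recognition_rate):
    """Losses avoided per year, assuming each caught defect would
    otherwise have been returned at full product value."""
    return annual_output_value * return_rate * recognition_rate

def payback_months(equipment_cost, savings_per_year):
    """Months of savings needed to cover the equipment cost."""
    return equipment_cost / savings_per_year * 12

savings = annual_savings(
    annual_output_value=5_000_000,  # hypothetical production line, in yuan
    return_rate=0.10,               # "close to 10%"
    recognition_rate=0.90,          # "over 90%"
)
months = payback_months(equipment_cost=100_000, savings_per_year=savings)

print(f"Savings per year: {savings:.0f} yuan")
print(f"Payback period: {months:.1f} months")
```

The sketch leaves out the larger benefit described above, which is keeping a major client, so it understates the return in that case.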

Currently, market acceptance of event cameras is growing.

When I first returned to China in 2023, I basically had to explain the technology to every client I met—no one had heard of it.

But now, about 20%-30% of clients have already heard of event cameras and even reach out to us proactively. However, even if they’ve heard of it, the boss’s top concern remains the same—can it help me make money and reduce costs?

Our company’s revenue is also gradually increasing. Founded in 2023, we had virtually no revenue in the first two years, focusing mainly on R&D. Last year, we reached nearly 10 million yuan, and this year’s goal is 20 to 30 million yuan.

Industrial customers are our current base, but we are actively expanding into autonomous driving and robotics, which are our key focus areas.

Especially in autonomous driving, everyone knows the two mainstream sensors now: cameras and LiDAR. LiDAR’s advantage is clear—it provides precise ranging and can determine the shape and distance of objects.

But the problem is that neither LiDAR nor traditional cameras are designed for "high-speed dynamic scenarios." For example, RGB cameras capture 30 frames per second, which is sufficient for the human eye but not for vehicles.
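To see why 30 frames per second may fall short for vehicles, a short calculation shows how far a car travels between two consecutive frames. The speeds below are illustrative assumptions, and the sketch ignores exposure and processing time, which add further delay.

```python
def distance_between_frames(speed_kmh, fps):
    """Meters a vehicle travels during one frame interval."""
    speed_ms = speed_kmh * 1000 / 3600  # km/h to m/s
    return speed_ms / fps

for speed in (60, 120):  # illustrative speeds in km/h
    gap = distance_between_frames(speed, fps=30)
    print(f"{speed} km/h at 30 fps: {gap:.2f} m between frames")
```

At 60 km/h the car covers about 0.56 m between frames, and about 1.11 m at 120 km/h. Anything that appears within that interval is not seen until the next frame arrives.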

We are also currently raising a new round of financing, aiming to close it in the first half of the year, with a target of several tens of millions.

This article does not constitute any investment advice.