Industry | Hotter than HBM, the imminent mass production of SOCAMM2 intensifies competition

04/17 2026

Preface:

Over the past three years, HBM has been the undisputed star of the semiconductor industry. In 2026, however, SOCAMM2 is heating up far faster than the industry expected, drawing even more attention than HBM4, which is currently in its production ramp-up phase.

SOCAMM2 Emerges as a Key Player in AI Memory

SOCAMM2, or Small Outline Compression Attached Memory Module 2, is a new-generation enterprise-grade memory standard being finalized by the JEDEC Solid State Technology Association.

Its core architecture combines the mature LPDDR low-power memory technology from consumer electronics with the modular design of CAMM compression-attached memory modules.

Through a 4-N-4 HDI (high-density interconnect) stack-up, it achieves breakthroughs in four key areas: performance, power consumption, size, and maintainability.

From a core parameter perspective, the current mass-produced version of SOCAMM2 already demonstrates comprehensive superiority over traditional DDR5 memory.

The single-pin data transfer rate can reach up to 9600MT/s, a 12.5% improvement over the first-generation SOCAMM and 1.7 times that of mainstream DDR5 RDIMMs.

A single module supports up to 256GB, so a single 8-channel server CPU can address up to 2TB of memory, enough to handle inference for large models with million-token contexts.
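The ratios quoted above can be checked with a few lines of arithmetic. The sketch below is only a sanity check: the 9600MT/s, 256GB, and 8-channel figures come from the text, while the first-generation SOCAMM rate (8533MT/s), the "mainstream" DDR5 RDIMM speed grade (5600MT/s), and one module per channel are assumptions chosen because they reproduce the quoted numbers.

```python
# Figures quoted in the text.
SOCAMM2_MTPS = 9600        # per-pin data rate, MT/s
MODULE_GB = 256            # maximum capacity of one module
CHANNELS = 8               # memory channels on one server CPU

# Assumptions for illustration; the text does not state these values.
SOCAMM1_MTPS = 8533        # assumed first-generation SOCAMM rate
DDR5_RDIMM_MTPS = 5600     # assumed "mainstream" DDR5 RDIMM speed grade
MODULES_PER_CHANNEL = 1    # assumed one module per channel

gain_over_gen1 = SOCAMM2_MTPS / SOCAMM1_MTPS - 1
ratio_vs_ddr5 = SOCAMM2_MTPS / DDR5_RDIMM_MTPS
total_gb = MODULE_GB * CHANNELS * MODULES_PER_CHANNEL

print(f"gain over first generation: {gain_over_gen1:.1%}")
print(f"ratio vs DDR5 RDIMM:        {ratio_vs_ddr5:.2f}x")
print(f"capacity per CPU:           {total_gb} GB = {total_gb // 1024} TB")
```

Under these assumptions the result is a 12.5% gain, roughly 1.7 times the DDR5 rate, and 2TB per CPU, matching the figures in the text.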

Under the same capacity, SOCAMM2 consumes only one-third of the power of standard DDR5 RDIMMs and reduces physical size by two-thirds.

It also eliminates the protruding trapezoidal structure on top, reducing overall height by approximately 15%. This suits the liquid cooling layouts prevalent in current AI servers and avoids the airflow obstruction caused by traditional RDIMMs.

The core value of SOCAMM2 lies in addressing a key industry pain point: enabling AI to "remember more content while consuming less power."

If HBM is the "blood vessels" of AI servers, responsible for delivering data to GPUs at ultra-high speeds, then SOCAMM2 is the "muscle" of AI servers. Built around the CPU, it handles large-scale data while significantly reducing power consumption.

This positioning difference ensures that SOCAMM2 will not replace HBM but rather complement it, jointly supporting the next generation of AI infrastructure.

SOCAMM2 precisely fills the market gap between HBM and traditional DDR5, achieving a balance of capacity, power consumption, and flexibility through its replaceable, cost-effective modular design.

Thus, the rise of SOCAMM2 is the result of the convergence of three industrial forces: changes in AI application structures, HBM supply bottlenecks, and data center energy consumption pressures.

Market Opportunities Created by HBM Capacity and Pricing

Over the past two years, HBM capacity has consistently fallen short of demand, with Samsung and SK Hynix, two South Korean manufacturers, monopolizing over 90% of global HBM production.

HBM4 capacity for 2026 has already been fully booked in advance by leading players such as NVIDIA and AMD, leaving smaller manufacturers without access to stable supply.

Meanwhile, HBM prices remain high at more than $10 per GB, 3 to 4 times that of ordinary DDR5 memory, putting it out of reach for many cost-sensitive inference and edge computing scenarios.

In contrast, SOCAMM2 offers significant cost advantages, with a per-bit price premium of only about 30% over ordinary LPDDR chips, far lower than HBM's steep premium.

Its production is based on mature DRAM processes and traditional packaging techniques, eliminating the need for advanced packaging technologies like TSV (Through-Silicon Via), thus avoiding capacity constraints associated with advanced packaging and enabling rapid large-scale production.

While HBM determines the upper limit of AI capabilities, SOCAMM2 determines whether AI can truly be popularized across industries.

What appears to be a competition in memory technology is, in fact, a redistribution of power in AI system architecture.

Riding the Structural Inflection Point of the AI Industry

SOCAMM2 arrives precisely at a structural inflection point in the AI industry, the shift from a "training arms race" to the "commercialization of inference," and addresses the most pressing pain points for data centers and AI vendors.

The core bottleneck for long-context inference lies in memory.

During Transformer inference, the KV cache stores the key and value vectors for each token, so its memory usage grows linearly with context length.
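A minimal sketch of that linear growth, assuming a standard per-layer key/value cache with 16-bit values. The function name `kv_cache_bytes` and the model shape (80 layers, 8 KV heads, head dimension 128) are hypothetical and chosen only for illustration; they do not describe any particular model mentioned in this article.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim,
                   bytes_per_value=2, batch_size=1):
    """Bytes needed to hold keys and values for every cached token."""
    # Factor 2: one key vector and one value vector per layer and KV head.
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * seq_len * batch_size


# Hypothetical model shape, for illustration only.
cfg = dict(n_layers=80, n_kv_heads=8, head_dim=128)

for tokens in (8_192, 131_072, 1_048_576):
    gib = kv_cache_bytes(tokens, **cfg) / 2**30
    print(f"{tokens:>9} tokens -> {gib:6.1f} GiB")
```

With this hypothetical shape, a single sequence needs 2.5 GiB of cache at 8,192 tokens and 320 GiB at 1,048,576 tokens, which is why long-context inference quickly becomes bound by memory capacity.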

The insufficient bandwidth of traditional DDR5 RDIMMs directly leads to a significant increase in Time to First Token (TTFT), severely degrading user experience.

This scenario is where SOCAMM2 excels, dramatically improving response speeds for long-context inference while supporting more concurrent users, directly lowering the deployment threshold for commercializing large models.

Currently, global AI data centers face triple pressures of power consumption, rack density, and Total Cost of Ownership (TCO) over their lifecycle, with traditional DDR5 memory architectures reaching their limits.

A dual-socket AI server equipped with 16 DDR5 RDIMMs consumes over 200W at full memory load, whereas SOCAMM2 consumes only about 70W for the same capacity.

When deployed at the rack scale, memory alone can reduce cooling and power costs by over 30%.
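To give a rough sense of scale, the per-server figures above can be extrapolated to a rack. This is a back-of-the-envelope sketch, not a reconstruction of how the 30% figure was derived: the 200W and 70W values come from the text, while the number of servers per rack and the PUE (power usage effectiveness) are hypothetical assumptions.

```python
HOURS_PER_YEAR = 24 * 365

# Figures quoted in the text (full memory load, same capacity).
ddr5_watts = 200       # 16 DDR5 RDIMMs in a dual-socket server ("over 200W")
socamm2_watts = 70     # SOCAMM2 ("about 70W")

# Assumptions for illustration; the text does not state these values.
servers_per_rack = 16  # hypothetical rack configuration
pue = 1.3              # hypothetical facility overhead for cooling and power delivery

saved_watts = ddr5_watts - socamm2_watts
share_of_ddr5 = socamm2_watts / ddr5_watts
rack_kwh_per_year = saved_watts * servers_per_rack * pue * HOURS_PER_YEAR / 1000

print(f"memory power saved per server: {saved_watts} W")
print(f"SOCAMM2 share of DDR5 power:   {share_of_ddr5:.0%}")
print(f"energy saved per rack:         {rack_kwh_per_year:,.0f} kWh/year")
```

The 35% share is consistent with the "one-third of the power" claim earlier in the article; the rack-level energy figure depends entirely on the assumed rack size and PUE.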

The trend in AI servers is toward high-density integration and widespread liquid cooling adoption. Vertical DDR5 RDIMMs not only occupy significant space but also obstruct airflow in liquid cooling systems.

SOCAMM2's flat, compression-attached design is only one-third the size of RDIMMs, increasing the number of memory slots per server by 50%.

It also perfectly adapts to cold plate and immersion liquid cooling systems, boosting compute density per rack by over 30%.

Additionally, UBS estimates that SOCAMM2 can reduce TCO for AI servers by over 20% over their lifecycle.

Market research firm Omdia predicts that the server module market, including SOCAMM2, will exceed $2 billion by 2027, growing at an average annual rate of over 60%.

Another agency, Market Research Intellect, forecasts that the low-power DRAM market, including SOCAMM, will grow at an average annual rate of 8.1% through 2033, reaching $25.8 billion in market size.

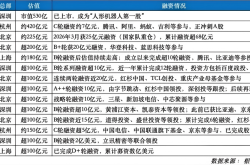

Mass Production Imminent: Global Players Intensify Competition

With the impending finalization of JEDEC standards and the approaching mass production of NVIDIA's Vera Rubin platform, competition in the SOCAMM2 market has reached a fever pitch.

In the core SOCAMM2 sector, three DRAM giants (Micron, Samsung, and SK Hynix) are locked in direct competition, each with distinct technological approaches and market strategies, and all are entering the final sprint toward mass production.

Micron is the pioneer in the SOCAMM2 sector. It recently launched the world's first 256GB SOCAMM2 module and announced at NVIDIA GTC 2026 that the product has entered large-scale mass production, making it the primary supplier for NVIDIA's Vera Rubin platform.

Its core advantage lies in the 1γ DRAM process technology, enabling single-die 32Gb LPDDR5X chips that achieve higher capacity in smaller sizes.

Samsung announced this month that it has overcome module warping issues through its proprietary next-generation Low-Temperature Solder (LTS) technology.

By reducing soldering temperatures from above 260°C to below 150°C and optimizing the single-stack chip layout and the thermal expansion characteristics of the epoxy molding compound (EMC), it has significantly improved the product's mechanical rigidity and yield.

With stable yields and capacity advantages in its 1c DRAM process, Samsung aims to secure about 50% of NVIDIA's SOCAMM2 supply share, forming a duopoly with Micron.

SK Hynix currently prioritizes HBM4 capacity allocation, with relatively limited SOCAMM2 production.

However, leveraging its deep technical expertise in DRAM, SK Hynix has initiated customer sampling for SOCAMM2 while focusing on the high-end market through iterations of its 1c DRAM process.

By maintaining close collaboration on AMD's and Intel's next-generation server platforms, it avoids direct competition with Micron and Samsung in NVIDIA's supply chain and establishes its own market advantages.

Chinese manufacturer Longsys has developed its own SOCAMM2 products based on LPDDR5/5X chips and a 4-N-4 HDI (high-density interconnect) stack-up, further optimizing module height. With a maximum single-module capacity of 256GB and a data transfer rate of 8533Mbps, its performance metrics approach those of international peers.

Driving New Opportunities Upstream in the Supply Chain

The rise of SOCAMM2 is also driving technological upgrades and market opportunities across the upstream supply chain, from PCB substrates and connectors to packaging materials, with all segments entering a phase of intensive capacity deployment and technological breakthroughs.

SOCAMM2 connectors feature 644 pins, imposing extremely high requirements on high-frequency, high-speed signal integrity, mechanical precision, and reliability.

Currently, international connector giants like Amphenol and TE Connectivity dominate the market, while Chinese manufacturers such as Xingwanlian have achieved mass production breakthroughs for CAMM2 connectors and entered the supply chains of Chinese server vendors.

PCB substrates serve as the core carrier for SOCAMM2, with significantly elevated technical barriers.

Chinese manufacturers such as Shengyi Technology, Shennan Circuits, and WUS Printed Circuit have achieved breakthroughs in high-frequency, high-speed copper-clad laminates and advanced HDI boards.

High-end materials such as low-temperature solders for warping control and high-reliability epoxy molding compounds (EMC) are currently dominated by Japanese and U.S. companies, while Chinese manufacturers have achieved mass production of mid-to-low-end materials and are now challenging the high-end market.

Industry Trends Driving the Rise of SOCAMM2

① The optimization focus of AI infrastructure is shifting from compute-centric to system energy efficiency. As the frenetic expansion of compute power during large model training slows, cost control during inference becomes critical. The power consumption advantages of SOCAMM2 hold decisive economic significance amid rising electricity costs.

② The boundaries between memory and computing are blurring. The modular design of SOCAMM2 and its potential future integration of computing functions suggest that memory is evolving from a passive storage unit to an active computing node.

③ Supply chain security considerations are reshaping the competitive landscape. NVIDIA's simultaneous support for Samsung, SK Hynix, and Micron as suppliers, along with its differentiated allocation between HBM and SOCAMM2, reflects the AI chip giant's strong emphasis on supply chain resilience.

Conclusion:

The value of technological innovation lies not only in creating new performance peaks but also in finding the optimal balance between efficiency and cost.

SOCAMM2 is transitioning from technical validation to large-scale deployment. On the surface, this competition appears to be a technological contest among memory vendors, but beneath it lies a struggle among compute power giants for dominance over next-generation AI server architecture standards.

Partial source references: Korea Economic Daily: "Industry Prepares for AI Transformation Brought by Rubin," IT Home: "NVIDIA Cancels Promotion of First-Generation SOCAMM Memory, Shifts Development Focus to SOCAMM2 New Version," Semiconductor Industry Observations: "This Type of Storage Becomes the New HBM," Semiconductor Industry Review: "AI Memory Newcomer, SOCAMM2 Debuts."