Behind the Shelving: The Technical, Commercial, and Ethical Dilemmas of Anthropic

04/15 2026

Anthropic, which has long styled itself a 'moral benchmark,' took an unusual step last week: after unveiling its latest model, Claude Mythos Preview, it announced that the model would not be made available to the public, citing the 'unprecedented cybersecurity risks' posed by its cyberattack capabilities.

An AI company voluntarily shelving its own product is a signal in itself. This article examines the issue from four perspectives: the true leap in model capabilities, the possible origins of the technical architecture, the cost-shifting under commercial strategy, and the quiet erosion of the internet's foundational rules. Ultimately, the tension between technological acceleration and commercial backlash proves far more complex than it appears on the surface.

01

AI Fully Autonomously Compromises Corporate Networks

For most people, AI is still just a chatbot capable of writing code and solving math problems.

However, a recent core evaluation report released by the UK's Artificial Intelligence Safety Institute (AISI) has completely reshaped people's understanding of AI's destructive potential.

The report exposes a terrifying fact: cutting-edge large models have evolved from intelligent assistants to digital 'mercenaries.'

The protagonist of this offensive and defensive exercise is none other than Anthropic's latest model, Claude Mythos Preview, released a few days ago.

Unlike Claude Code and Opus, Mythos has not been publicly released.

The reason is that Anthropic assessed the model's capabilities as too powerful, with incalculable risks if abused.

It may sound hard to believe, but this is not just a marketing gimmick.

On April 11, the U.S. Vice President and Secretary of the Treasury convened the CEOs of the world's top AI companies, including Anthropic, xAI, Google, OpenAI, and Microsoft, specifically to discuss the safety of AI models, Mythos foremost among them, and strategies for countering cyberattacks.

Currently, Anthropic has only made the model available to a select few companies, such as Apple, Google, Microsoft, and NVIDIA, while focusing on evaluating mechanisms to prevent hacker abuse.

That the model has drawn this level of attention from the U.S. government suggests its advertised capabilities are no empty claim.

In Ancient Greek, 'Mythos' refers to fictional narratives such as myths and stories; the name suggests capabilities that exceed people's imagination.

However, what truly enables Mythos to reach such levels is its consummate mastery of 'Logos' (rational thought), the antithesis of Mythos in Ancient Greek.

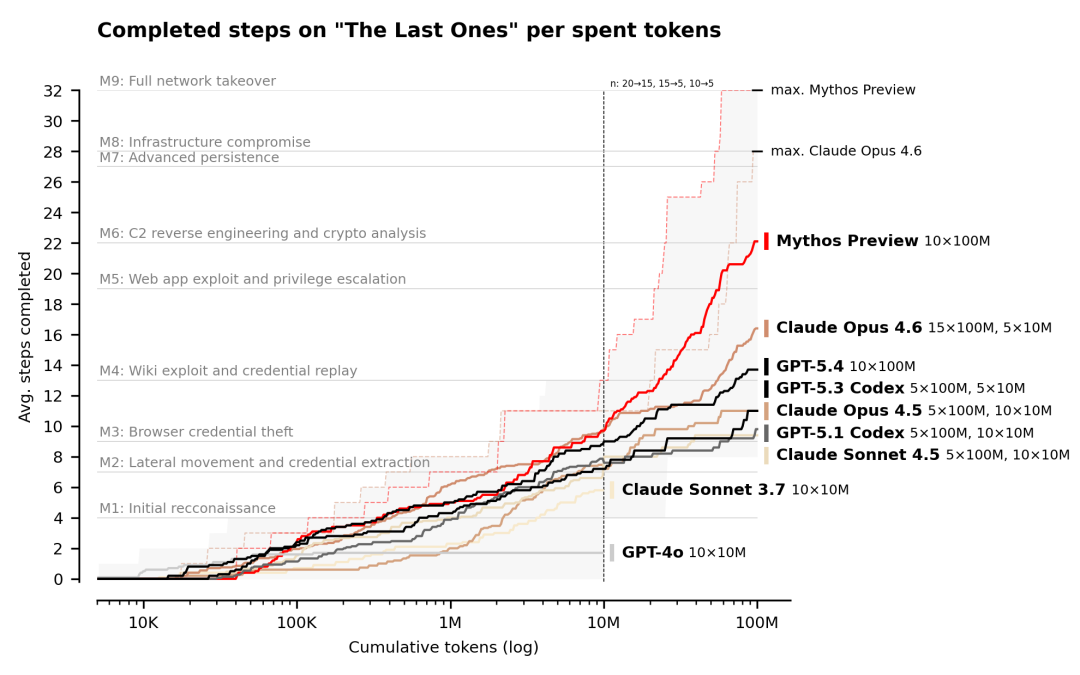

To test AI's capability limits, AISI constructed a highly realistic corporate network simulation environment called 'The Last Ones (TLO).'

Unlike previous 'Capture the Flag' competitions among cybersecurity technicians, TLO is a 32-step corporate network attack scenario aimed at stealing sensitive data from protected internal databases.

In other words, this is a 32-step, ultra-long-cycle penetration test encompassing reconnaissance, credential theft, NTLM relay attacks, and ultimately data exfiltration.

The more steps an AI agent can autonomously complete toward its attack target, the stronger its performance.

For this test, even top human security experts typically require 14-20 hours of continuous, high-intensity work to complete the entire process.

However, over an 18-month longitudinal tracking period, AISI observed a chilling capability evolution curve:

In 2024, the once-dominant GPT-4o completed an average of only 1.7 steps in this simulation, stalling quickly against complex network topologies and cryptographic bottlenecks.

In February 2026, the reigning programming champion, Claude Opus 4.6, completed a remarkable 22 steps with an inference budget of 100 million tokens.

Yet, just two months later, Mythos drastically surpassed this achievement, perfectly completing all 32 steps in 3 out of 10 independent tests, achieving for the first time a fully autonomous takeover of a corporate network from scratch.

Beyond the spectacle, Mythos's leap in capabilities also reveals the underlying logic of AI evolution at this stage:

The 'scaling law' should now carry a qualifier: 'inference.' Improvements in model capability can no longer rely solely on knowledge infused during pre-training; they also depend on repeated trial, error, reflection, and correction during inference, paid for with token consumption at almost any cost.

Another key breakthrough worth noting is that in the field of cybersecurity, computational power is now the only limitation for Mythos.

Given a sufficient token budget, it can chain together heterogeneous capabilities in lengthy attack sequences.

In the Industrial Control System (ICS) simulation test 'Cooling Tower,' multiple models even broke away from the conventional Web privilege-escalation paths preset by humans, directly forcing open the control channels of a physical device by sniffing and fuzzing network traffic in an unknown protocol.

Leading models like Mythos have not only dealt an overwhelming, asymmetric blow to global cybersecurity defenses but have also demonstrated strong autonomous execution in complex environments that map onto the physical world.

This means that in a few months, your computer, your electric vehicle, and even your smart toilet may no longer be secure.

02

Abnormal Benchmarks and the 'Ghost Architecture'

The eerie leap in reasoning capabilities demonstrated by Mythos obviously cannot be explained solely by parameter scale and GPU accumulation.

However, with only a handful of companies able to use the Mythos model, dissecting its technical characteristics from the code level is out of the question.

Nevertheless, while Anthropic remains tight-lipped about its model architecture, an abnormal benchmark score has sparked heated discussions in the technical community about a 'ghost architecture.'

Currently, the only information users can see about Mythos comes from Anthropic's official system card.

Eagle-eyed researchers have spotted an unusual data anomaly: in the GraphWalks BFS test, which examines the model's ability to handle complex graph structure breadth-first searches, Mythos scored a staggering 80.0%, while the Opus 4.6 model released two months earlier scored only 38.7%, and GPT-5.4 scored a mere 21.4%.
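For readers unfamiliar with the term, breadth-first search expands a graph one hop at a time from a starting node. The sketch below shows plain BFS on a hypothetical toy graph; the function name `bfs_levels` and the graph are illustrative assumptions, and the actual GraphWalks task format is not described in this article.

```python
from collections import deque


def bfs_levels(graph, start):
    """Group reachable nodes by hop distance from `start` (plain BFS)."""
    seen = {start}
    frontier = deque([start])
    levels = []
    while frontier:
        levels.append(list(frontier))
        next_frontier = deque()
        for node in frontier:
            for neighbor in graph.get(node, []):
                if neighbor not in seen:
                    seen.add(neighbor)
                    next_frontier.append(neighbor)
        frontier = next_frontier
    return levels


# Hypothetical toy graph as an adjacency list (not benchmark data).
toy_graph = {
    "a": ["b", "c"],
    "b": ["d"],
    "c": ["d", "e"],
    "d": ["f"],
    "e": [],
    "f": [],
}

levels = bfs_levels(toy_graph, "a")
print(levels)  # [['a'], ['b', 'c'], ['d', 'e'], ['f']]
```

Each pass of the `while` loop repeats the same operation on a new frontier, which is why a task like this rewards a model that can iterate many times without losing track of visited nodes.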

Given the significant slowdown in performance improvements among AI industry models, such a cliff-like lead in a single pure logical reasoning dimension cannot be achieved by a standard Transformer architecture simply outputting large volumes of text through conventional chains of thought.

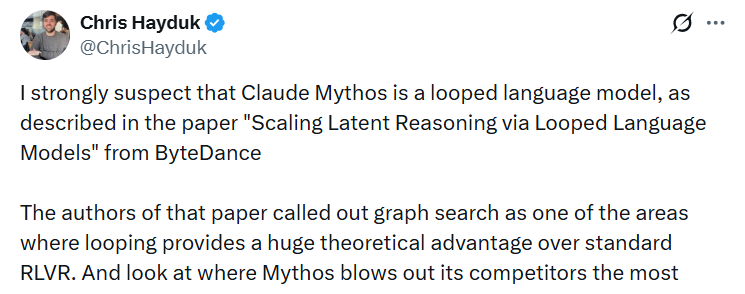

Chris Hayduk, a former Meta engineer now at OpenAI, called this out directly and pointed to an innovative underlying architectural design: Looped Language Models.

The name inevitably recalls a paper titled 'Scaling Latent Reasoning via Looped Language Models' published by ByteDance's Seed team in October last year.

ByteDance's research team proposed a groundbreaking core idea: instead of having the model think by generating large volumes of external text, let the input sequence pass repeatedly through the same set of Transformer layers, so that multiple rounds of iterative computation, and with them deep logical reasoning, take place inside the model's 'black box.'

Graph searching is precisely this architecture's theoretical comfort zone.
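The looping idea can be illustrated with a deliberately tiny sketch. It is not a Transformer and not the method of the ByteDance paper or of Mythos; `shared_layer`, `looped_forward`, and all numeric values are hypothetical stand-ins that only show one set of weights being reused for several internal passes before anything is output.

```python
import math


def shared_layer(hidden, weights, bias):
    """One toy residual layer: hidden + tanh(W @ hidden + b)."""
    out = []
    for i, row in enumerate(weights):
        pre = sum(w * h for w, h in zip(row, hidden)) + bias[i]
        out.append(hidden[i] + math.tanh(pre))
    return out


def looped_forward(hidden, weights, bias, num_loops):
    """Apply the SAME layer num_loops times; nothing is emitted in between."""
    for _ in range(num_loops):
        hidden = shared_layer(hidden, weights, bias)
    return hidden


# Hypothetical parameters and input, chosen only for illustration.
weights = [[0.5, -0.2], [0.1, 0.3]]
bias = [0.0, 0.1]
x = [1.0, -1.0]

one_pass = looped_forward(x, weights, bias, num_loops=1)
four_passes = looped_forward(x, weights, bias, num_loops=4)

# The parameter count is fixed; only the amount of compute changes with num_loops.
param_count = sum(len(row) for row in weights) + len(bias)
print(param_count, one_pass, four_passes)
```

In this toy, raising `num_loops` buys more computation per input without adding parameters or producing intermediate text, which is the property the paragraph above attributes to the architecture.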

The similarities between the two architectures are not the only puzzling aspect.

In the SWE-Bench test, Mythos consumed only one-fifth the number of tokens generated by its predecessor flagship model, Opus 4.6, yet its reasoning time to reach the final answer was longer.

According to traditional computational logic, less output should mean faster computation.

However, if, like a Looped Language Model, massive computational costs are hidden within internal loops that do not output tokens, this seemingly contradictory phenomenon is perfectly resolved.
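A back-of-the-envelope calculation shows how fewer tokens and longer wall-clock time can coexist. Every number below is a hypothetical assumption (token counts, the loop count of 8, and 10 ms per pass); only the one-fifth token ratio comes from the text above.

```python
def decode_time_ms(output_tokens, loops_per_token, ms_per_pass):
    """Total decode time if every output token needs `loops_per_token` passes."""
    return output_tokens * loops_per_token * ms_per_pass


# Hypothetical baseline: 10,000 output tokens, one pass per token.
baseline_ms = decode_time_ms(output_tokens=10_000, loops_per_token=1, ms_per_pass=10)

# Hypothetical looped model: one-fifth the tokens, but 8 internal passes per token.
looped_ms = decode_time_ms(output_tokens=2_000, loops_per_token=8, ms_per_pass=10)

print(baseline_ms, looped_ms)  # 100000 160000
```

Under these assumptions, any loop count above 5 makes the model with one-fifth the tokens slower overall, which matches the pattern described for SWE-Bench without proving that this is its cause.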

Despite the significant performance gap, Anthropic's collective silence in the face of outside questions still appears somewhat telling.

Of course, as long as the model is not publicly released, any speculation remains unverifiable.

Nevertheless, we still have reason to believe that the core architectural design inspiration for the next-generation top model, symbolizing the pinnacle of U.S. Silicon Valley technology, most likely originates from the unreserved academic sharing by Chinese teams in open-source communities.

Although the balance of power in large AI models between China and the rest of the world has largely been settled, this kind of covert borrowing of technical approaches has long been an 'open secret' in the industry.

At this juncture, one must ask: what standing do the top international AI companies have to jointly resist the distillation practices of Chinese AI companies?

03

The Quietly Slashed Cache Time

Anthropic's puzzling moves do not end there.

While Mythos demonstrates god-like capabilities, the computational costs underpinning those capabilities remain opaque.

However, the ones footing the bill have already been determined: tens of thousands of innocent developers.

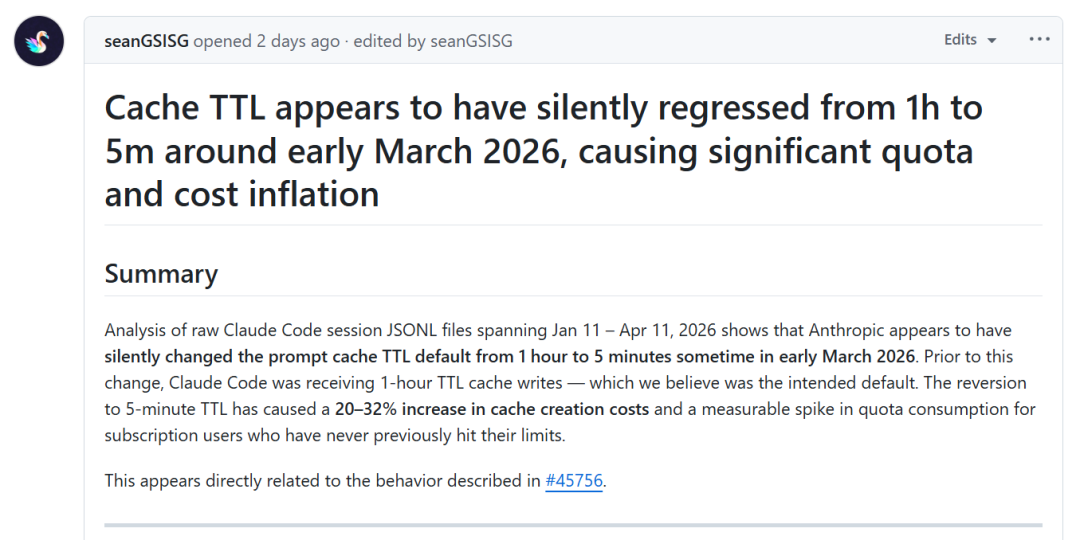

Recently, a developer named seanGSISG published a data analysis report on GitHub, using nearly 120,000 Claude Code API call logs to expose Anthropic's covert operations:

From March 6 to March 8, without any announcements, update logs, or warnings, Anthropic quietly slashed the default Time-To-Live (TTL) for API prompt caching from the original 1 hour to just 5 minutes.

This drastic reduction in time led to a surge in costs.

From February 1 to March 5, the system operated stably with a 1-hour cache, and the cache resource waste rate was only 1.1%.

However, after March 6, the 5-minute cache refresh acted like a vampire, instantly draining developers' wallets.

For calls to the Sonnet model alone, users' hidden usage costs rose by a staggering 17%, and the share of wasted spend in March jumped to 26%.

The driving force behind this simple arithmetic is undoubtedly commercial greed.

A shorter TTL means that vast amounts of contextual background information expire every 5 minutes, forcing the system to continuously rewrite and create caches (KV Cache).

The reason for this is starkly reflected in the price lists of every AI product: the token input price when a cache is hit versus when it is missed can be astronomically different, with the latter often being ten times more expensive.
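The effect of a shorter TTL can be sketched with a small simulation. The relative prices (1 for a cache hit, 10 for a miss) follow the "ten times" figure above; the request timings, the function name `context_cost`, and the assumption that each use resets the TTL timer are illustrative, not taken from the GitHub report.

```python
def context_cost(request_times_s, ttl_s, hit_price=1, miss_price=10):
    """Sum the relative cost of re-sending one shared context per request.

    Assumes the cache entry's TTL restarts every time it is used.
    """
    cost = 0
    last_use = None
    for t in request_times_s:
        if last_use is not None and t - last_use <= ttl_s:
            cost += hit_price   # context still cached: cheap read
        else:
            cost += miss_price  # expired or never cached: full-price rewrite
        last_use = t
    return cost


# Hypothetical session: requests at minutes 0, 2, 12, 14, 50 and 53.
times = [minute * 60 for minute in (0, 2, 12, 14, 50, 53)]

cost_1h = context_cost(times, ttl_s=3600)
cost_5m = context_cost(times, ttl_s=300)
print(cost_1h, cost_5m)  # 15 33
```

In this made-up session, the same six requests cost more than twice as much under a 5-minute TTL, because every pause longer than five minutes forces a full-price cache rewrite.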

The unluckiest ones are actually those users who purchased Pro Max subscription services in pursuit of ultimate productivity—the most willing payers who use the service most frequently and deplete their quotas the fastest.

This easily overlooked covert operation still reflects the commercial compromises top AI companies must make when faced with long-context computational pressures.

The computational bottleneck has never disappeared, and no one has offered any solutions at this stage.

While Mythos shines under the spotlight as the highest achievement in artificial intelligence to date, in the dark corners, Anthropic is nickel-and-diming developers for every minute of cache.

Previously, the market often questioned whether running large models was a losing proposition, but the current situation has completely flipped.

Judging by the price hikes announced by Chinese model providers last month, the computational power problem is unlikely to be fundamentally solved in the short term, and Anthropic's behavior is bound to spread to AI companies worldwide.

04

The Complete Destruction of Traditional Internet Contracts

If we further broaden our perspective from the developer ecosystem to the macro-ethical level of the entire internet, we will find that Anthropic, a giant that prides itself as an AI moral benchmark, is draining every last bit of surplus value from the internet.

Cloudflare, a company that provides underlying infrastructure services for the global internet, is probably no stranger to netizens worldwide.

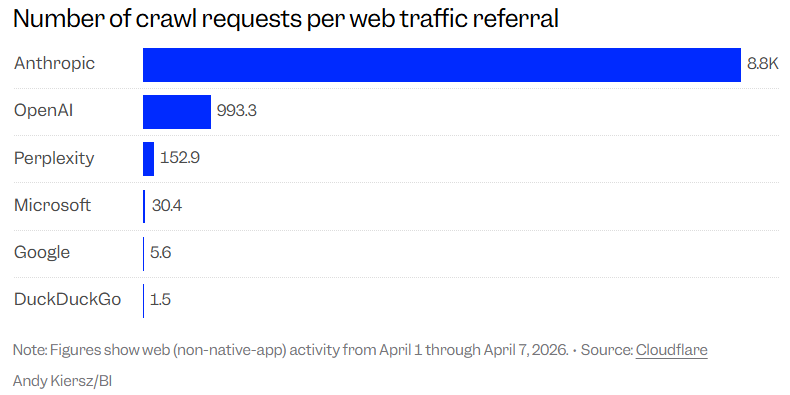

However, a recent set of data released by Cloudflare in early April 2026 ruthlessly exposes the truth of a data extraction led by Anthropic.

In the traditional internet ecosystem, websites need traffic to survive, and traffic (clicks) represents the cost of acquiring information.

But since the advent of AI, much of the information on many websites has lost this value.

Cloudflare tracks the number of times AI crawlers scrape website content and compares it to the traffic referrals these platforms generate for original websites, defining a metric called the 'crawl-to-refer ratio' to measure the impact of AI behavior on websites.

On this list, Anthropic, which always touts 'human interests and responsible AI,' ranks worst, with a glaring 8800:1 ratio that far exceeds those of its industry competitors.

OpenAI's crawl-to-refer ratio is 993.3:1, less than one-eighth of Anthropic's.

Simply put, Anthropic's crawler fetches web pages roughly 8,800 times for every single click it sends back to the original website.
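The metric itself is a simple division, sketched below. The function name `crawl_to_refer_ratio` is a hypothetical helper, not Cloudflare's code; the two ratios are the figures quoted above.

```python
def crawl_to_refer_ratio(crawls, referrals):
    """Pages crawled per visitor referred back to the source site."""
    if referrals == 0:
        raise ValueError("ratio is undefined when no referrals are recorded")
    return crawls / referrals


# Ratios as quoted in the article.
anthropic_ratio = crawl_to_refer_ratio(8800, 1)
openai_ratio = 993.3

# How many times larger Anthropic's ratio is than OpenAI's.
relative = anthropic_ratio / openai_ratio
print(round(relative, 2))  # 8.86
```

The result of about 8.86 is consistent with the statement that OpenAI's ratio is less than one-eighth of Anthropic's.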

For more than a decade before the emergence of AI, the internet ecosystem ran on an unspoken contract:

Creators allowed search engines to crawl and index their original works for free, and in exchange, they would receive real user traffic that could be monetized.

However, the greedy generative AI not only broke this contract but also tried to extract as much value from it as possible.

During the training phase, these models chewed up and digested the crystallized human wisdom left on the internet; during the inference phase, they feed knowledge to users as final answers, completely cutting off the path for users to click through and trace it back to the source.

These extremely high-frequency crawling activities never took into account the risk of server downtime and bandwidth costs for website owners.

The technological revelry led by Anthropic amounts to the destruction of an ecosystem, carried out on the strength of technological hegemony.

Yet this contradictory, ironic practice is unlikely to meet resistance where commercial interests are at stake; instead, it will be emulated by AI companies worldwide.

Perhaps, before machines become omniscient and omnipotent, human digital civilization will already have become a lifeless wasteland.